US Index Prediction: A Multi-Index Framework for DJIA, S&P 500, and NAS100

Abstract

A literature review and research framework for predicting US equity index movements using cross-index dynamics. We identify several unstudied research gaps including price-weighted vs cap-weighted divergence signals and trivariate cointegration regime models. Empirical phases are in progress.

Project Roadmap

| Phase | Description | Status |

|---|---|---|

| Phase 1 | Literature Review | Complete |

| Phase 2 | Data Collection & Feature Engineering 7 gap studies completed — see Section 6 for full results. | Complete |

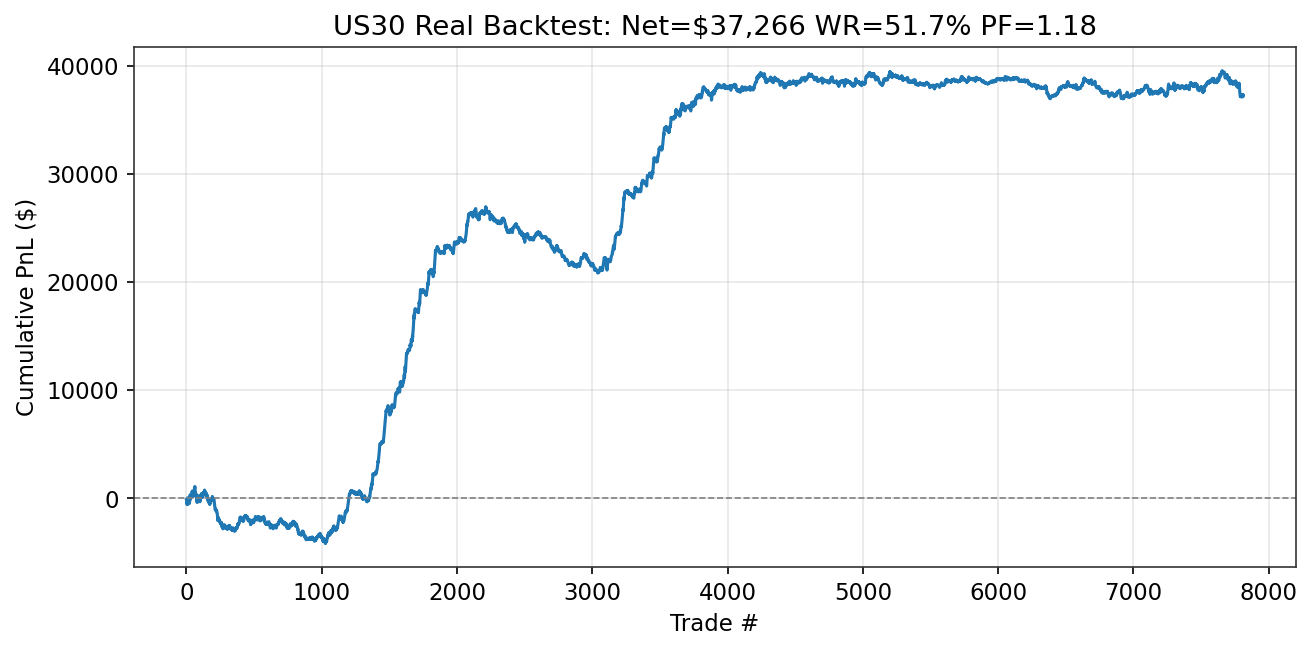

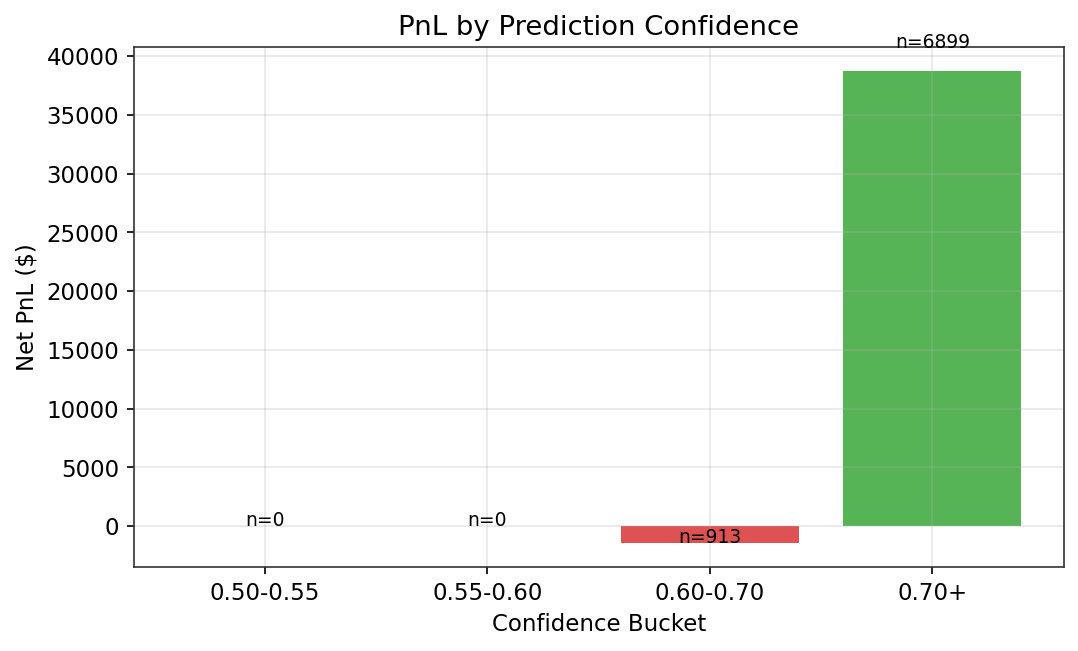

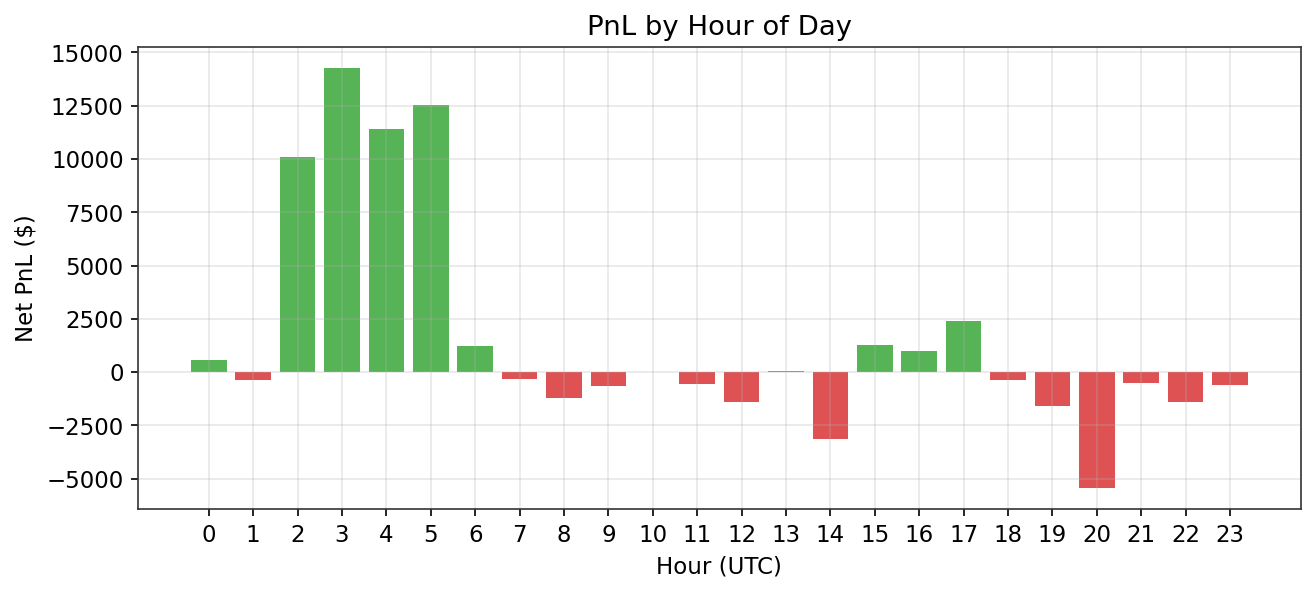

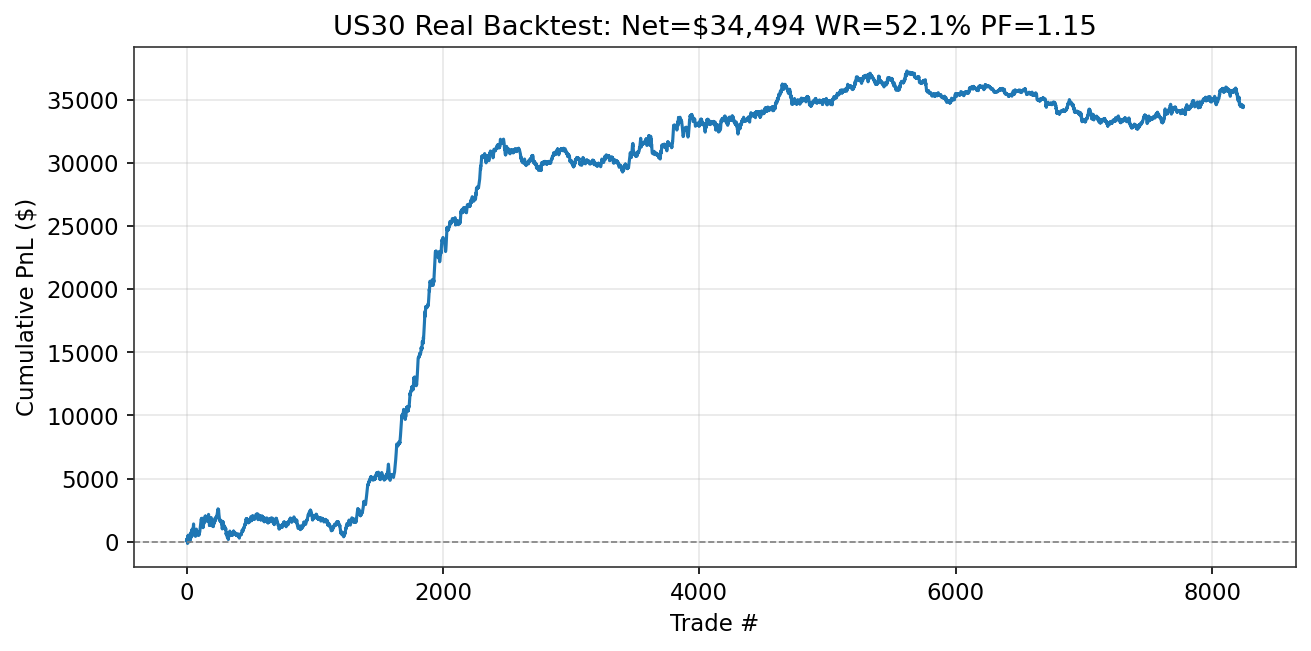

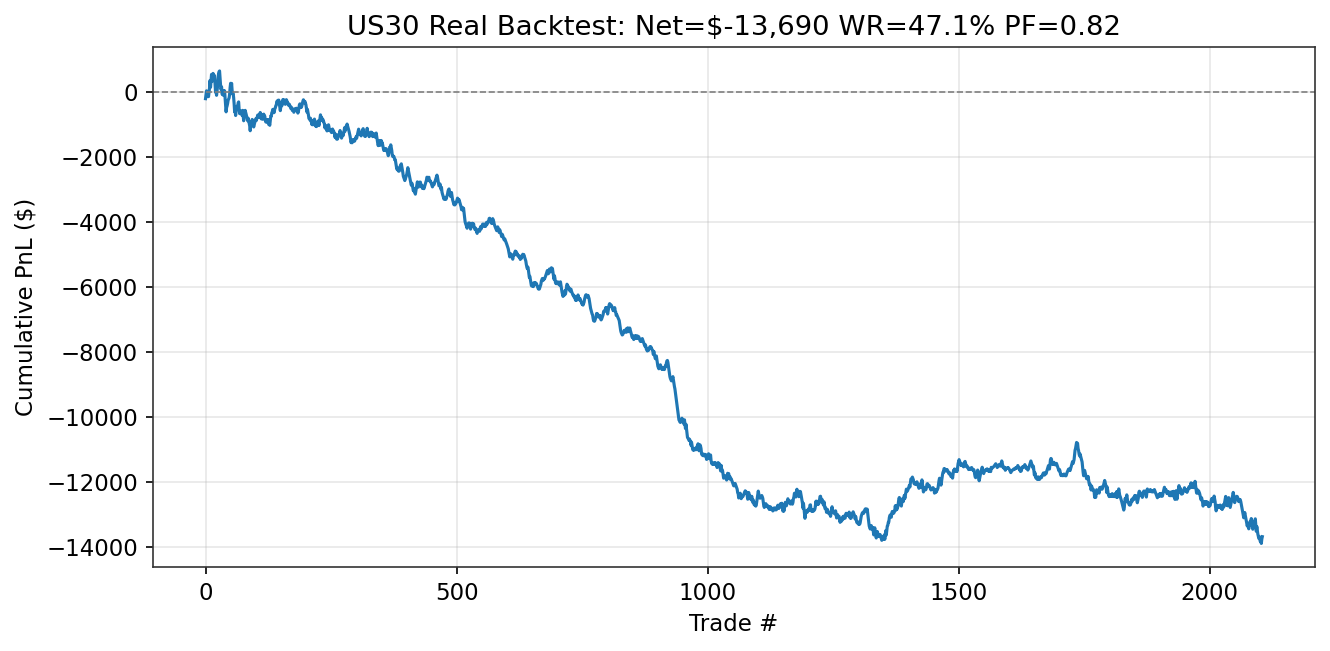

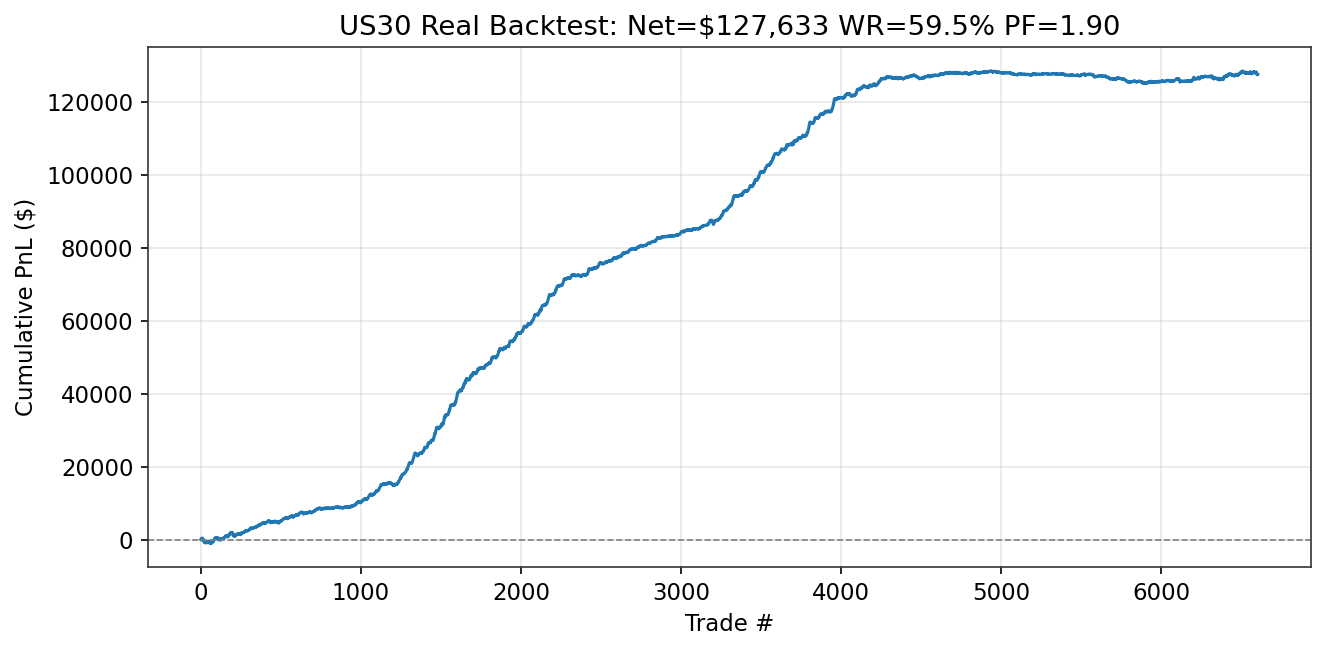

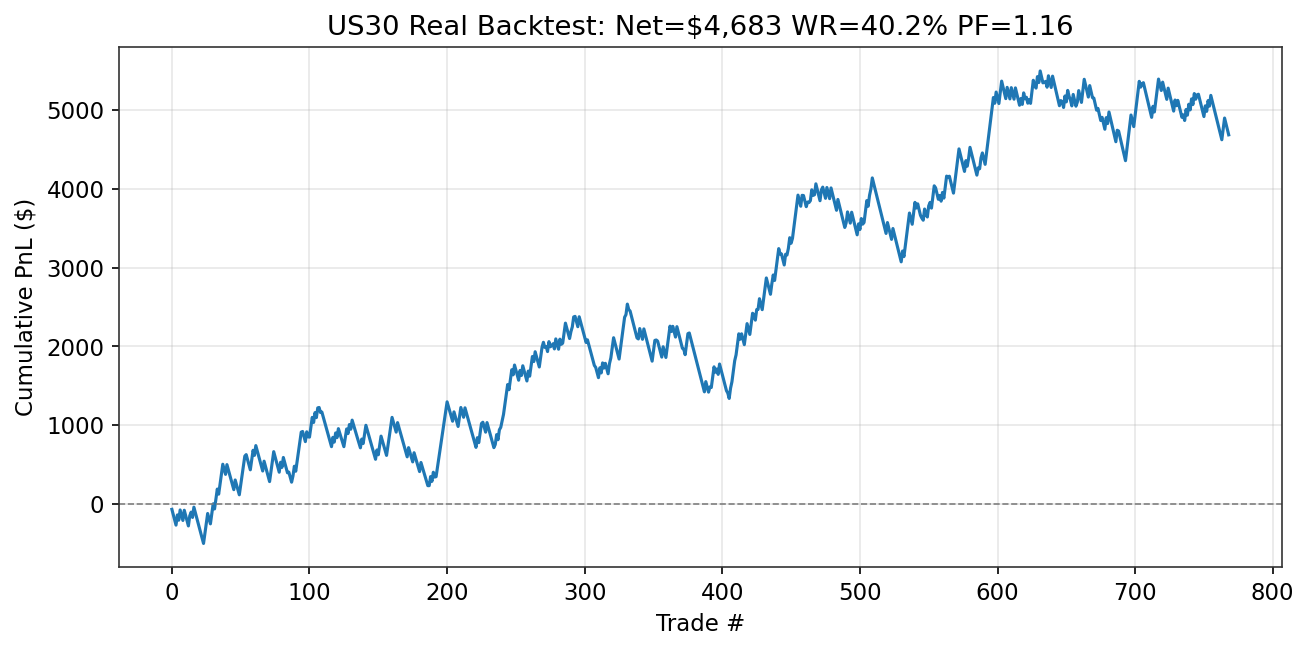

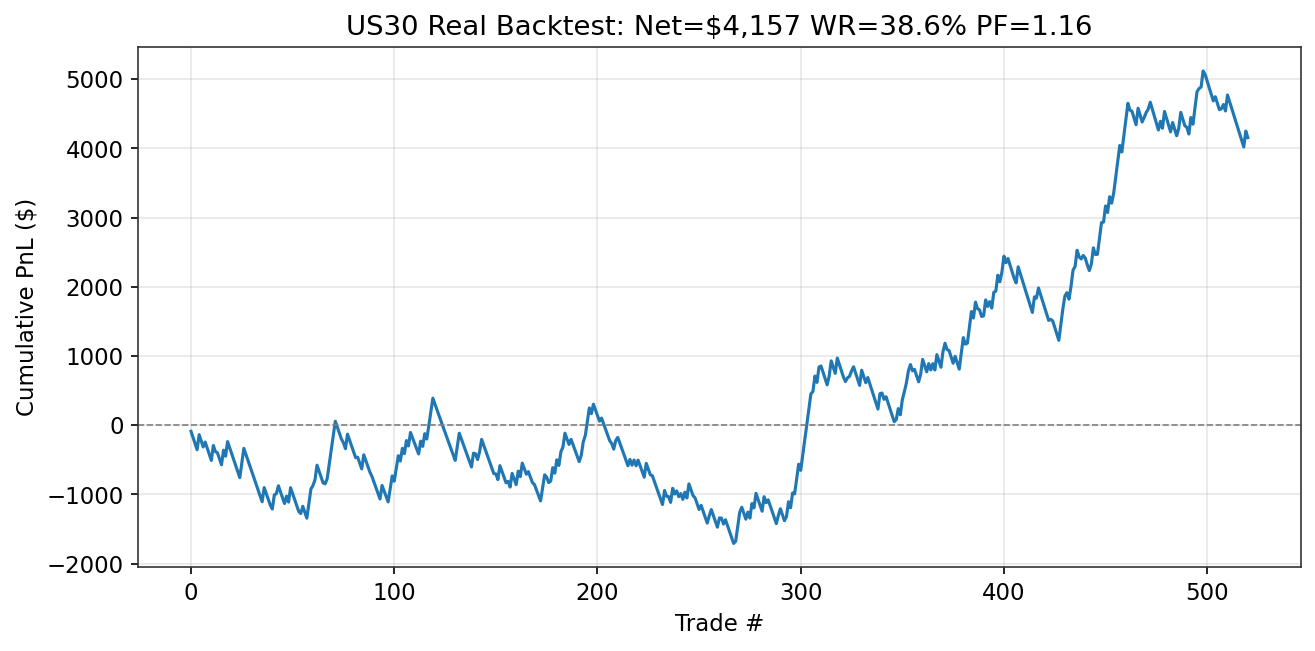

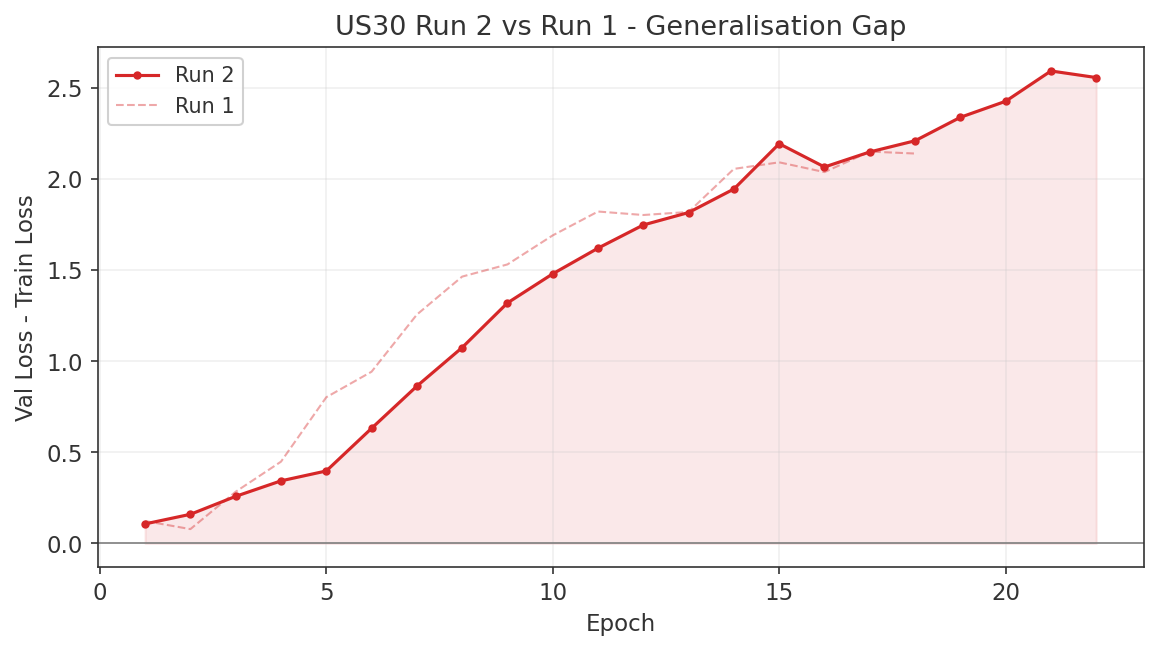

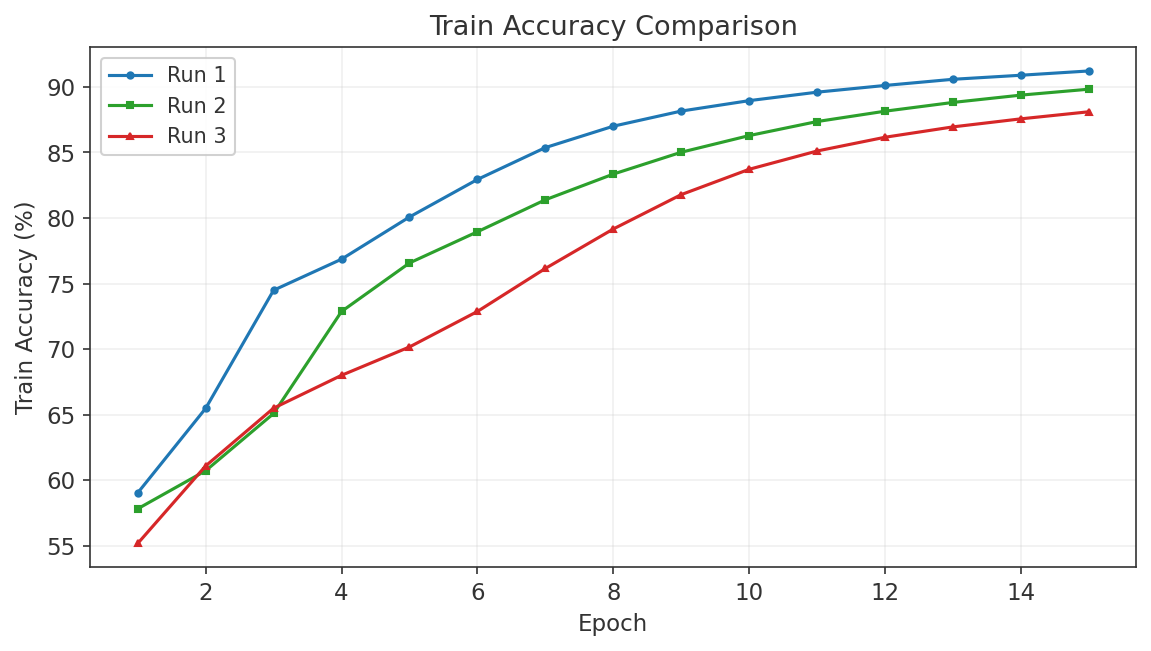

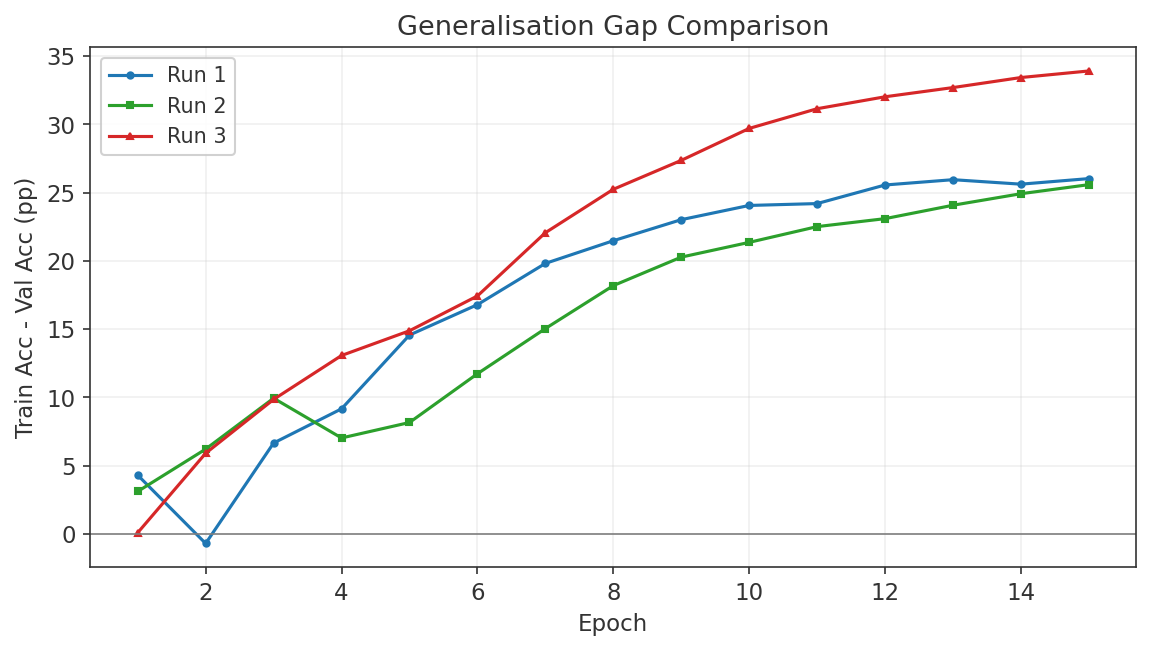

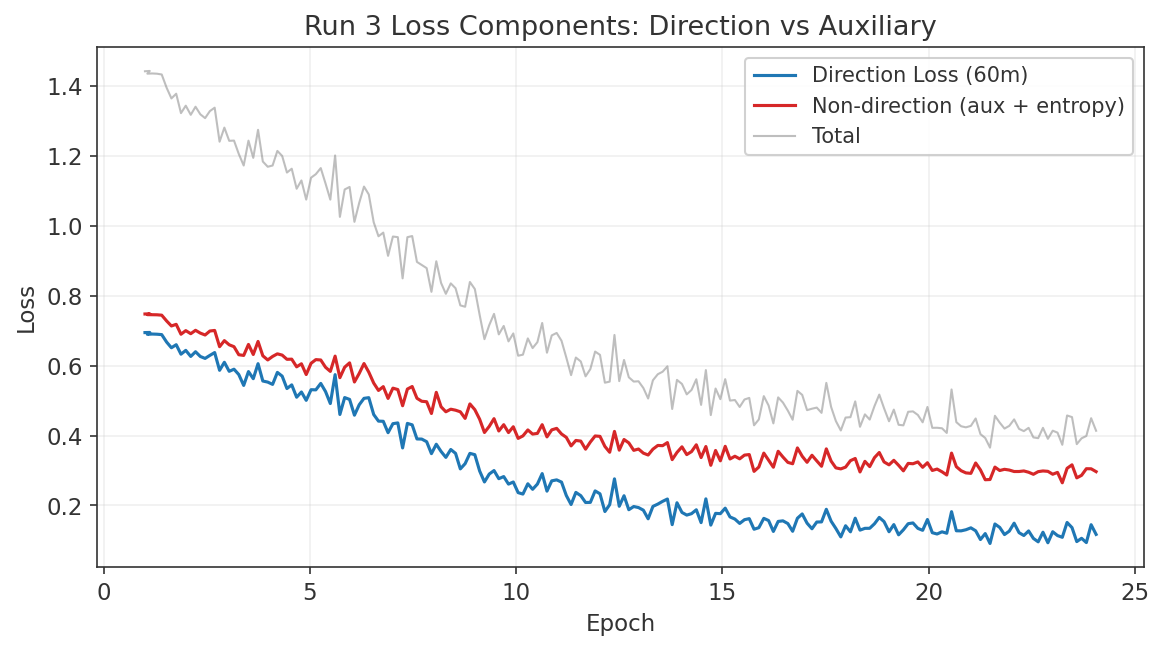

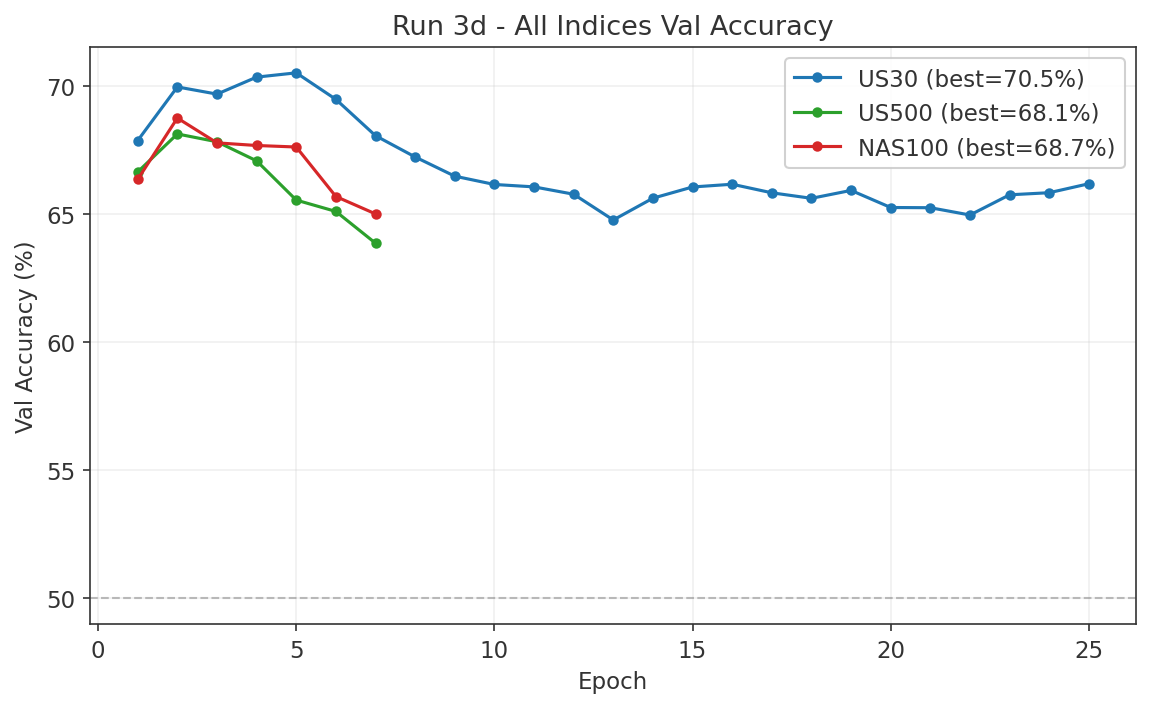

| Phase 3 | Model Development & Backtesting Dual-system: Short specialist +$127,633 (PF 1.90, Run 3L). Dip-buy long model +$4,683 (PF 1.16, Run 3N, first profitable longs). 13 runs documented. See Sections 7.5-7.14. | In Progress |

| Phase 4 | Walk-Forward Validation | Planned |

1. Introduction

The three dominant US equity indices — the Dow Jones Industrial Average (DJIA, traded as US30), the S&P 500 (US500), and the NASDAQ-100 (NAS100) — are often treated as interchangeable proxies for "the US stock market." In practice, they differ profoundly in construction methodology, sector composition, and constituent overlap. The DJIA is price-weighted across 30 blue-chip stocks; the S&P 500 is float-adjusted market-cap-weighted across roughly 500 companies; the NAS100 is modified market-cap-weighted across 100 non-financial firms with heavy technology exposure. These structural differences create persistent, non-trivial divergences in short-horizon returns that are largely absent from the academic literature.

Most published research on US equity index prediction treats each index in isolation: momentum strategies on the S&P 500, mean-reversion on the DJIA, or machine learning forecasts for the NASDAQ. The cross-index dimension — how information propagates between the three indices, how their spreads behave across market regimes, and whether structural differences create exploitable signals — remains substantially understudied. This is surprising given that the futures on these three indices (ES, YM, NQ) are among the most liquid instruments in the world, and that relative-value trades between them are a staple of institutional desks (CME Group, "Stock Index Spread Opportunities").

This project aims to fill that gap. We begin with a comprehensive literature review covering cross-index dynamics, multi-index trading strategies, and structural differences that create tradeable opportunities. We then identify specific research gaps — several of which appear to be entirely unstudied in the academic literature — and outline a phased research plan to test them empirically. The data constraint is deliberate: we restrict ourselves to OHLCV data at minute resolution from MetaTrader 5, ensuring that any findings are reproducible without proprietary data feeds.

2. Cross-Index Dynamics

2.1 Lead-Lag Relationships

The foundational work on lead-lag in equity markets comes from Lo and MacKinlay (1990), who documented that returns of large-capitalisation stocks lead returns of smaller stocks, attributing the effect partly to nonsynchronous trading and partly to differential speed of adjustment to information. Chordia and Swaminathan (2000) refined this finding by showing that high-volume portfolios lead low-volume portfolios at daily and weekly horizons, even after controlling for firm size. The mechanism is not purely mechanical: high-volume stocks adjust faster to market-wide information because they attract more attention from informed traders and algorithmic market makers.

In the futures-spot domain, the evidence is decisive. Stoll and Whaley (1990) found that S&P 500 and Major Market Index futures returns lead the corresponding cash indices by approximately five minutes on average, with occasional leads exceeding ten minutes. Lower transaction costs, leverage, and the ease of short-selling in futures explain why price discovery concentrates there. Hasbrouck (2003) quantified this precisely: roughly 90% of price discovery in the S&P 500 occurs in E-mini futures (information share IS = 0.89 to 0.93). For the NASDAQ-100, E-mini futures similarly dominate. The SPY ETF contributes to sector ETF price discovery, but not the reverse.

At the tick level, Huth and Abergel (2011) demonstrated that the most liquid assets lead smaller and less liquid stocks, and that the lead-lag structure is not constant intraday but shows seasonality around macroeconomic announcements and the US market open. By the early 2020s, median lead-lag durations in major equity markets have compressed to under ten milliseconds.

Despite this extensive literature on futures-spot and large-small cap lead-lag, direct studies of information flow between the three major US equity indices are sparse. Because the DJIA contains only 30 price-weighted stocks while the NAS100 is technology-heavy and the S&P 500 is broadly cap-weighted, differential information absorption speeds should exist during sector-specific news events. For instance, technology earnings may move the NAS100 first, with the signal propagating to the S&P 500 and the DJIA lagging if the relevant stocks carry low price-weighting in the Dow. This hypothesis has not been formally tested.

2.2 Correlation Structure and Regime Dependence

Engle (2002) introduced the Dynamic Conditional Correlation (DCC-GARCH) framework, which has become the standard tool for estimating time-varying correlations between financial assets. The model proceeds in two stages: univariate GARCH for each series, followed by a parsimonious correlation model on the standardised residuals. For any study of cross-index dynamics, DCC-GARCH provides the natural starting point for measuring how tightly the three indices co-move and whether that co-movement is stable.

A critical methodological insight comes from Forbes and Rigobon (2002), who demonstrated that raw correlation coefficients are biased upward during high-volatility periods. After adjusting for this bias, they found no significant increase in unconditional correlation during the 1997 Asian crisis, the 1994 Mexican devaluation, or the 1987 US crash. What appeared to be crisis-driven contagion was in fact pre-existing interdependence made visible by elevated variance. This finding has direct implications for anyone studying cross-index correlation during stress periods: naive rolling correlations will systematically overstate the degree of regime change.

Hamilton (1989) introduced the Markov-switching model for macroeconomic time series, where model parameters depend on an unobservable regime variable that follows a first-order Markov chain. This framework underpins all subsequent regime-switching work in finance. Ang and Bekaert (2002) applied it to portfolio choice, documenting that correlations and volatilities increase in bear markets. Despite this, diversification retains value even under regime switching because the increase in correlation is not perfect.

Regarding the three indices specifically, a Nasdaq (2020) white paper documents that NAS100 correlation with DJIA and S&P 500 was weakest during the Tech Bubble and the low-volatility period of 2017, and strongest during and after the 2008 Financial Crisis. In low-volatility environments, correlations decline naturally as there is no strong macroeconomic signal forcing co-movement. Fry-McKibbin and Hsiao (2018) applied Markov-switching models to US indices and identified three regimes — tranquil, volatile, and turbulent — with the tranquil regime being most frequent, the volatile regime dominating 2008, and the turbulent regime dominating the first four months of 2020.

2.3 Sector Rotation Patterns

The three indices differ structurally in sector exposure. The DJIA tilts toward industrials, healthcare, consumer staples, and financials. The S&P 500 has approximately 30% technology, 13% healthcare, and 13% financials. The NAS100 is roughly 45% technology with significant communications and consumer discretionary exposure, but excludes financials entirely and has minimal energy and utilities representation. These are not minor differences: they mean that sector rotation directly translates into cross-index relative performance.

Barberis and Shleifer (2003) formalised this intuition in their style investing framework. They showed that investors categorise assets into styles and allocate capital at the category level rather than the individual-asset level. Assets within the same style co-move excessively; assets in different styles co-move too little relative to fundamentals. Importantly, style-level momentum and value strategies are more profitable than their asset-level counterparts. This framework maps directly onto the DJIA (value/industrial style) versus NAS100 (growth/technology style) distinction.

Moskowitz and Grinblatt (1999) found that industry momentum is highly profitable even after controlling for size, book-to-market, and individual stock momentum. The sector composition differences across the three indices create natural momentum and rotation opportunities. The 2025 to 2026 "Great Rotation" provides a real-time illustration: capital shifted from technology (NAS100 underperformed the S&P 500 by approximately 6% year-to-date in 2025) into financials, industrials, energy, and precious metals, with the DJIA outperforming as traditional sectors led.

2.4 Dispersion and Convergence Dynamics

The dispersion trading literature, reviewed by Drechsler, Moreira, and Savov (2018), documents that implied correlation among index constituents tends to exceed realised correlation. The core dispersion trade — buying straddles on individual stocks and selling straddles on the index — exploits this wedge. A study on S&P 500 constituents from 2000 to 2017 found statistically significant returns of 14.5% to 26.5% per annum after transaction costs. Dispersion trades are concave in correlation: they profit when individual stocks diverge and lose during stress periods when correlation spikes, making them inherently short the volatility of correlation.

While traditional dispersion trading operates at the single-stock versus index level, the concept extends naturally to a three-index framework. If the three indices are temporarily dislocated — for example, the NAS100 rallying while the DJIA falls — a convergence trade betting on mean-reversion of the spread exploits the same correlation premium at the index level.

2.5 Index Arbitrage and Constituent Overlap

The overlap structure between the three indices is asymmetric. All 30 DJIA stocks are constituents of the S&P 500 (100% overlap). Approximately 79 of the 100 NAS100 stocks also appear in the S&P 500. However, only six stocks appear in all three indices. Roughly 20% of DJIA weight maps to about 30% of NAS100 weight. This partial overlap means that the indices are neither independent nor identical — they share enough common constituents to co-move, but differ enough to diverge meaningfully during sector-specific events.

Greenwood and Sammon (2023) documented that the index inclusion/exclusion effect has diminished over time as passive investing has grown, but that discretionary S&P 500 deletions still beat additions by 22% in the following year. Index fund long-short rebalancing portfolios continue to earn 4.61% annualised. Each index follows its own rebalancing calendar: the S&P 500 rebalances quarterly with ad hoc additions, the DJIA changes infrequently at the committee's discretion, and the NAS100 rebalances annually in December with special rebalancing triggered when the largest stock exceeds 24% weight. These rebalancing events create predictable flow demands that can temporarily dislocate cross-index relationships.

3. Multi-Index Strategies in the Literature

3.1 Pairs and Spread Trading

Gatev, Goetzmann, and Rouwenhorst (2006) established the academic foundation for pairs trading. Using minimum-distance matching on normalised prices across the period 1962 to 2002, they found that a simple two-standard-deviation divergence trigger yielded average annualised excess returns of up to 11% for self-financing portfolios. More recently, Zhu (2024) found that trading cointegrated near-parity pairs generates 58 basis points per month after costs, with 71% convergence probability, outperforming distance-based selection methods.

Applied to index spreads, CME Group details the methodology for constructing intermarket spreads between ES, YM, and NQ futures. A trader who believes technology is overvalued relative to the broad market sells NQ and buys ES, capturing relative sector performance without directional exposure. These spreads benefit from reduced margin requirements (as low as 10% of outright) reflecting their lower risk profile.

3.2 Time-Series Momentum and Rotation

Moskowitz, Ooi, and Pedersen (2012) documented significant time-series momentum across 58 liquid instruments including equity index futures. A diversified time-series momentum (TSMOM) portfolio delivers substantial abnormal returns and performs best during extreme market moves. Applied to a three-index rotation framework — allocating to the index with the strongest trailing momentum at each rebalancing point — this is one of the most robust findings in quantitative finance, yet its specific application to DJIA/S&P 500/NAS100 rotation is untested.

Barberis and Shleifer (2003) showed that style rotation is more profitable than individual asset rotation. The DJIA-as-value versus NAS100-as-growth mapping provides a natural style rotation pair. Rothe (2023) formalised sector rotation using macroeconomic indicators to time sector ETF allocation, while Mamais (2025) showed that momentum profitability varies across sectors and time, with macroeconomic conditions predicting these shifts.

3.3 Risk-On/Risk-Off Regime Detection

Chari, Stedman, and Lundblad (2025) proposed a composite risk-on/risk-off (RORO) index using credit spreads, equity returns, implied volatility, funding liquidity, and currency/gold signals. NBER Working Paper 31907 (2023) argues for measuring RORO as a combination of risk aversion (the price of risk) and macroeconomic uncertainty (the quantity of risk). Li (2025) found that the largest negative VIX-to-S&P 500 correlation occurs when both markets are in a high-volatility state, a result directly applicable to regime-conditional hedging.

A particularly promising signal, used by practitioners but never formally studied, is the NAS100/DJIA ratio as a risk-on/risk-off indicator. When the NAS100 outperforms the DJIA, capital is flowing into growth and technology stocks, signalling risk-on conditions. When the DJIA outperforms the NAS100, capital is rotating into value and defensive sectors, signalling risk-off. The 2025 to 2026 "Great Rotation" episodes provide vivid real-time illustrations of this dynamic. Despite its widespread use on trading desks, no academic study has validated the NAS100/DJIA ratio as a regime indicator or tested whether conditioning on it improves strategy selection.

4. Research Gaps Identified

Our literature review reveals several research gaps, ranging from entirely unstudied phenomena to well-known effects that have never been rigorously validated on this specific set of instruments. We restrict attention to gaps that can be tested with OHLCV data at minute resolution — the data we have available from MetaTrader 5. The following four gaps carry the highest combination of novelty, feasibility, and practical value.

4.1 Price-Weighted vs. Cap-Weighted Divergence Signal

The DJIA is the only major US equity index that uses price-weighting. This construction methodology creates mechanical, non-fundamental divergences from cap-weighted indices around stock splits, constituent additions and deletions, and divisor adjustments. A stock split, which is economically neutral, changes a company's DJIA weight but has no effect on its S&P 500 or NAS100 weight. Passive DJIA-tracking funds must rebalance in response; S&P 500 and NAS100 trackers do not.

No published study has systematically tested this divergence as a mean-reversion trading signal. The weighting methodology difference is structural and permanent — it cannot be arbitraged away because it stems from index construction rules, not from mispricing. The divergence is directly observable as the spread between normalised US30 and US500 (or NAS100) price series, making it testable with standard OHLCV data. The planned methodology involves constructing the normalised spread, testing z-score mean-reversion entry and exit thresholds, identifying whether divergence events cluster around known structural events, and validating out of sample with walk-forward windows.

4.2 Trivariate Cointegration Regime Model

Most cointegration studies in the pairs-trading literature test bivariate relationships (e.g., SPY/IWM). However, the Johansen (1991) multivariate vector error correction model (VECM) framework allows testing cointegration among all three indices simultaneously. Trivariate cointegration can reveal cointegrating vectors that no bivariate test would detect — relationships where the three-way spread mean-reverts even though no two-way spread does.

Furthermore, no study examines how trivariate cointegration stability changes across market regimes. Cointegration can break down during crisis periods or structural breaks. A Markov-switching VECM that detects regime transitions and adjusts trading rules accordingly would be a novel contribution. The planned methodology involves Johansen trace and eigenvalue tests at multiple timeframes (M5, M15, H1, D1), estimation of cointegrating vectors and error-correction speeds, and regime-switching models to detect when cointegration breaks down.

4.3 NAS100/DJIA Ratio as a Regime Indicator

As discussed in Section 3.3, the NAS100/DJIA ratio is widely used by practitioners as a risk-on/risk-off proxy, but it has never been formally validated. Zero academic studies exist. The planned empirical work will construct the ratio time series, define regimes based on the direction and magnitude of ratio changes across multiple lookback windows, and test whether regime identification predicts which index has the highest forward returns, whether momentum or mean-reversion strategies perform better in each regime, and whether volatility is expanding or contracting. The 2025 to 2026 "Great Rotation" provides a natural out-of-sample test period.

4.4 Cross-Index Lead-Lag at Minute Frequency

The academic lead-lag literature focuses on futures versus spot or large-cap versus small-cap stocks. No study directly measures information flow between US30, US500, and NAS100 at minute frequency, conditional on the type of move. During sector-specific events, differential absorption speeds should exist: technology earnings may move the NAS100 first, with the signal propagating to the S&P 500 and reaching the DJIA last. The planned methodology involves Granger causality tests at lags of one to ten minutes, time-varying lead-lag estimation via rolling window cross-correlation, conditioning on volatility regime and time of day, and testing whether detected lead-lag patterns are exploitable after spread costs.

4.5 Additional Gaps

Beyond the four primary gaps, our review identified several secondary opportunities:

- DJIA stock-split event arbitrage — when a DJIA constituent splits, its index weight drops mechanically while its weight in the S&P 500 and NAS100 is unaffected, creating a multi-index relative-value window that has never been formally studied.

- Joint multi-index Hidden Markov Model — most HMMs in the financial literature use single-index returns; a joint HMM on all three indices could capture cross-index states such as "technology-led rally," "broad selloff," "sector rotation," or "convergence."

- Anomaly decay rates on the DJIA — calendar effects, Dogs of the Dow, and moving average crossover strategies have all weakened over time, but no meta-study quantifies the rate at which published anomalies lose their edge on this liquid blue-chip index.

- NAS100 concentration-conditional strategy selection — whether momentum versus mean-reversion performance varies as a function of mega-cap concentration levels (Magnificent 7 weight approximately 40%) is an open question with no peer-reviewed evidence.

5. Planned Methodology

The empirical work is organised into three subsequent phases, each building on the previous.

Phase 2: Data Collection and Feature Engineering. We will collect M1 OHLCV bars for US30, US500, and NAS100 from MetaTrader 5 and CSV archives covering at least five years. Features will include normalised cross-index spreads (US30/US500, US30/NAS100, NAS100/US500), the NAS100/DJIA ratio and its rolling changes, volatility estimators (ATR, Garman-Klass, Parkinson, Yang-Zhang) for each index, rolling Johansen cointegration test statistics at multiple timeframes, and lead-lag estimates from rolling cross-correlation and Granger causality. Feature engineering will follow the same rigorous pipeline used in our gold trading research, with cache invalidation tied to feature column signatures.

Phase 3: Model Development and Backtesting. We will test the four primary research gaps as standalone strategies: z-score mean-reversion on the price-weighted/cap-weighted divergence, trivariate VECM spread trading with regime-conditional entry and exit, NAS100/DJIA ratio as a regime filter for momentum versus mean-reversion selection, and cross-index lead-lag exploitation at minute frequency. Each strategy will be evaluated against a buy-and-hold baseline with realistic transaction costs (MT5 spreads of 1 to 3 points for US30, 0.5 to 1 point for US500 and NAS100).

Phase 4: Walk-Forward Validation. All strategies that show promise in Phase 3 will undergo walk-forward out-of-sample testing with expanding or rolling training windows. We will report Sharpe ratios, maximum drawdowns, profit factors, and statistical significance via bootstrap. Any strategy that fails to outperform buy-and-hold after costs in the walk-forward test will be documented as a negative result.

6. Phase 2: Empirical Gap Studies

Seven empirical gap studies were conducted to test the research questions identified in Section 4. Studies are presented in order of increasing complexity, from simple single-index strategies to multi-index structural models, with a final Granger causality validation study bridging Phase 2 and Phase 3.

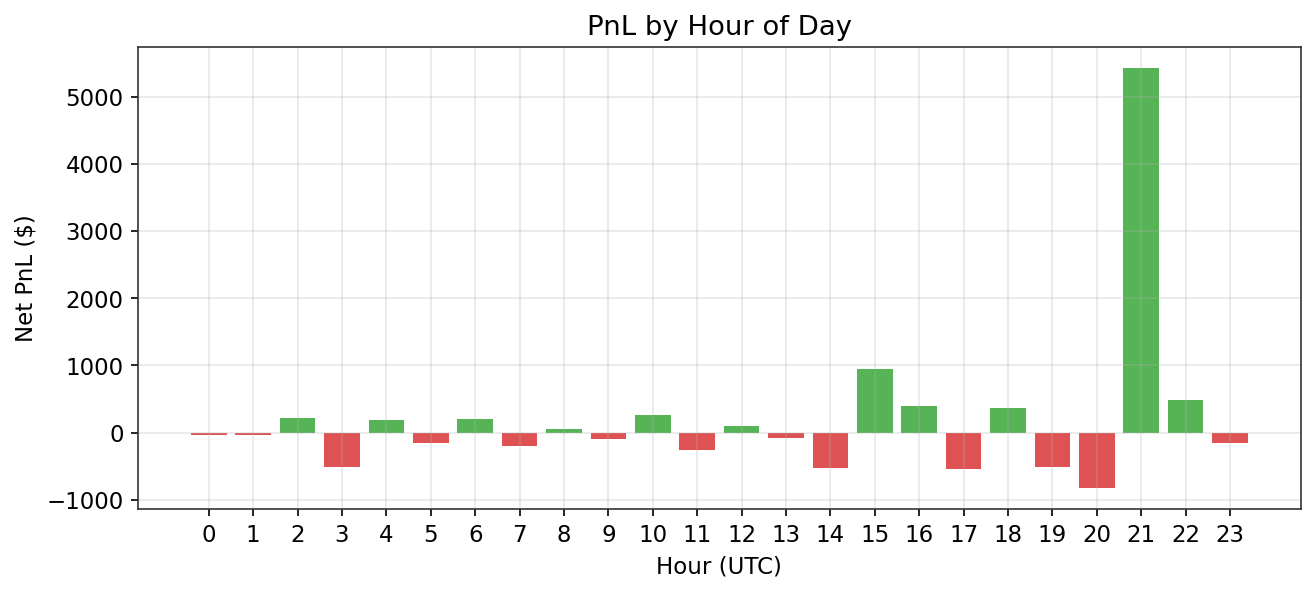

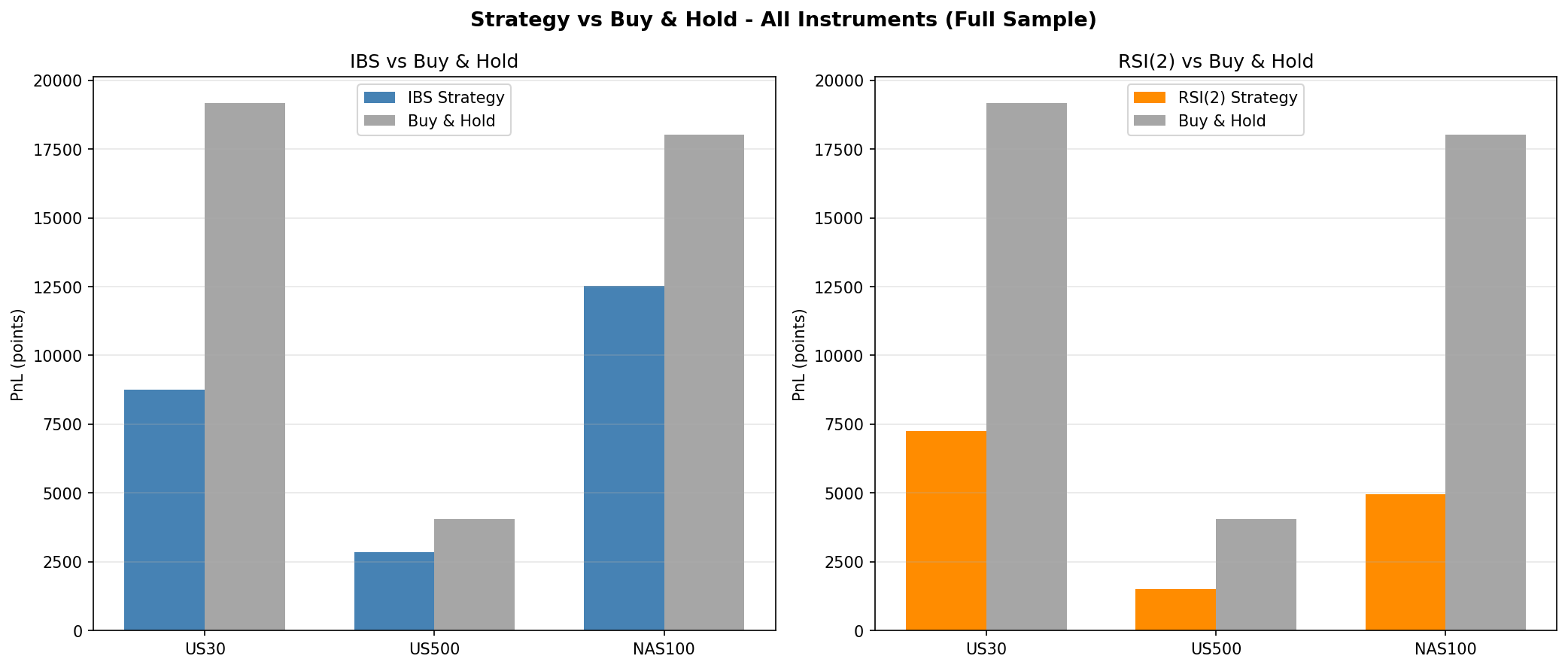

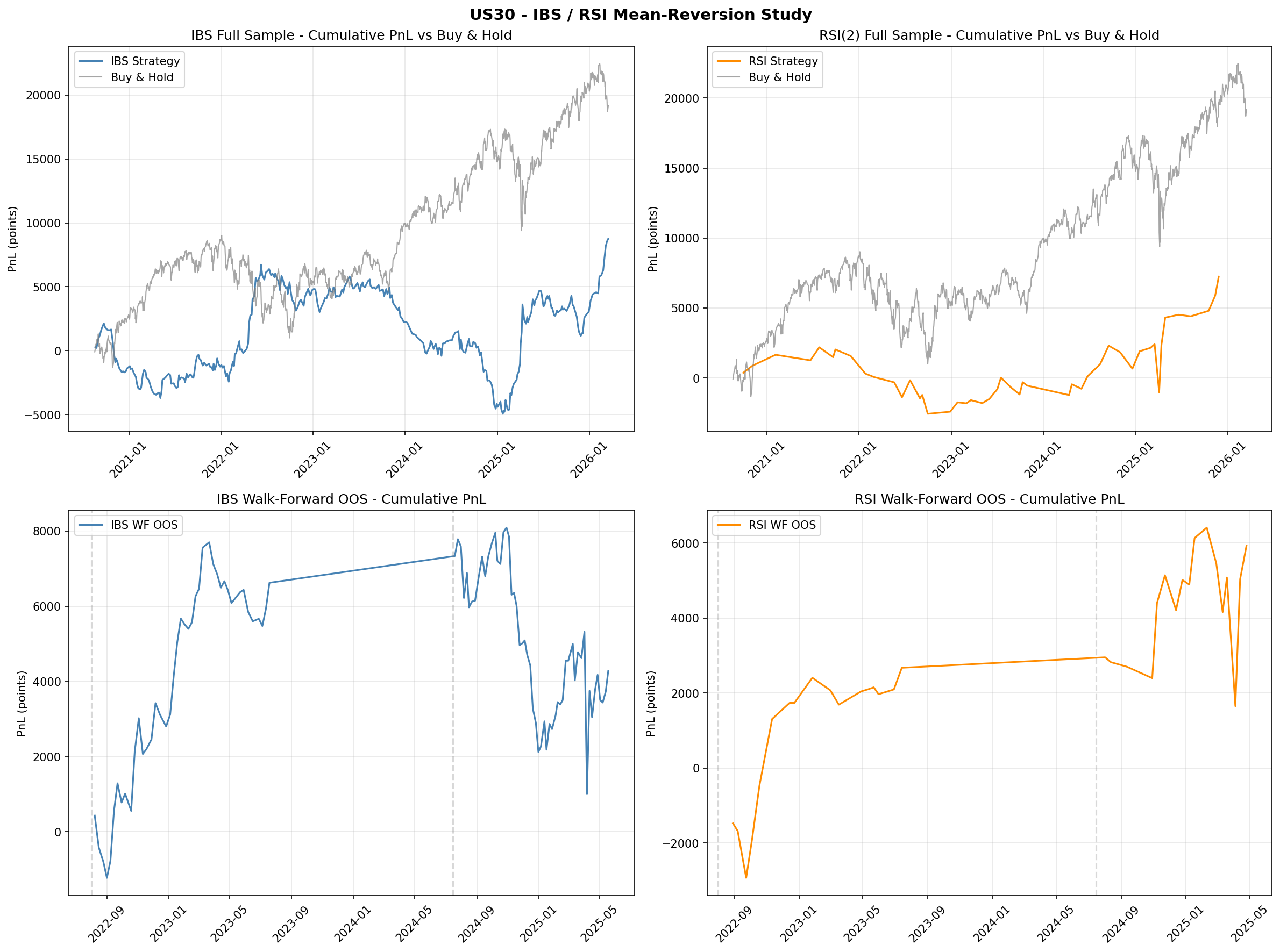

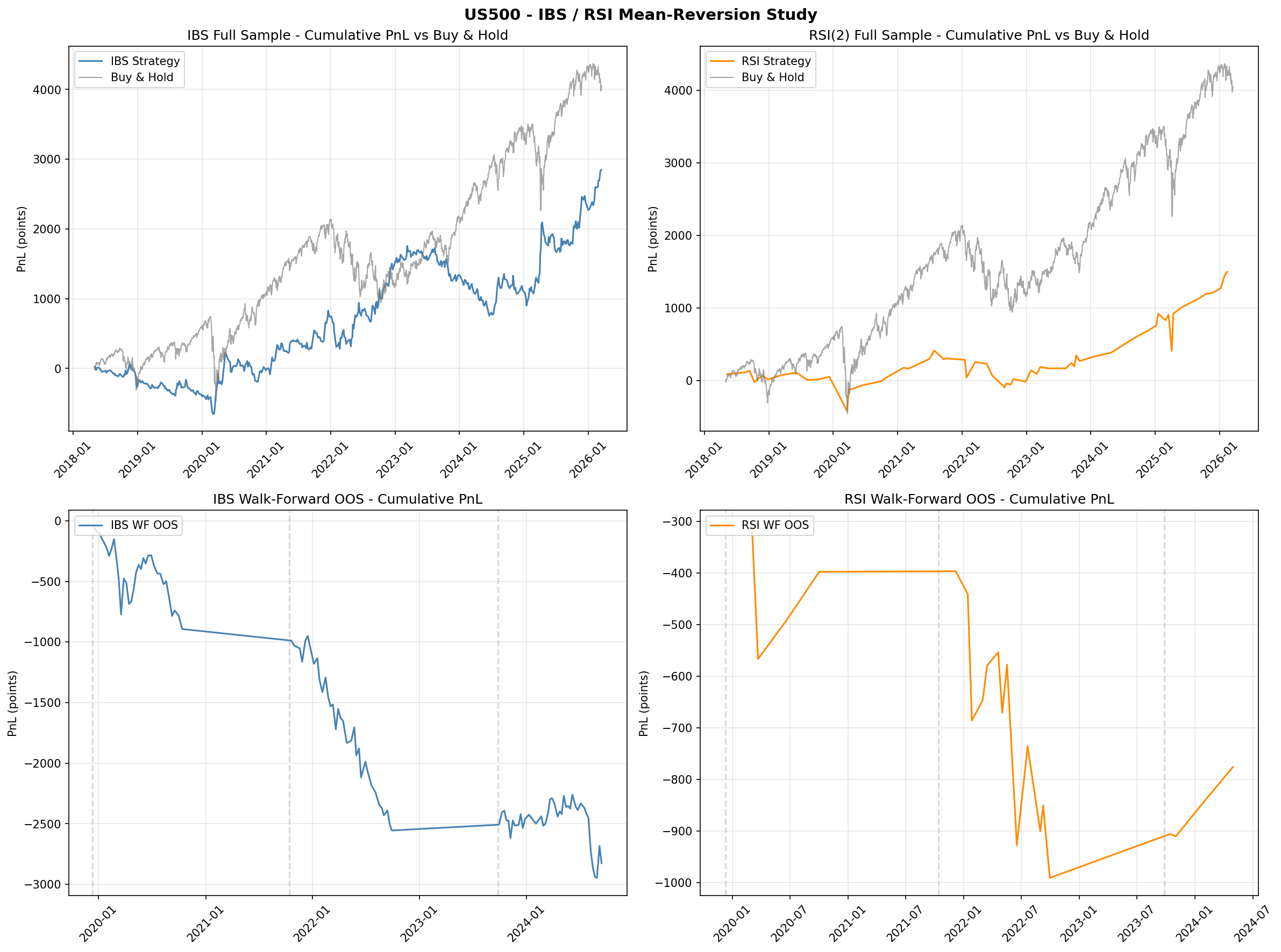

6.1 Gap Study #8: IBS/RSI Mean-Reversion Replication

Objective

The first empirical study in Phase 2 replicates two of the most cited OHLCV-only mean-reversion strategies on US equity indices: the Internal Bar Strength (IBS) strategy from Pagonidis (2014) and the RSI(2) strategy from Connors and Alvarez (2009). Both strategies are tested on US30, US500, and NAS100 using daily bars from MetaTrader 5 with realistic CFD spread costs applied to every round-trip. The purpose is to establish whether these well-known edges survive transaction costs on MT5 CFDs before building more complex models on top of them.

Full-Sample Results (Literature Parameters)

The IBS strategy enters long when the Internal Bar Strength $\text{IBS} = (\text{Close} - \text{Low}) / (\text{High} - \text{Low})$ falls below 0.20 and exits the next trading day. The RSI(2) strategy enters long when the two-period RSI drops below 5 and holds for five trading days. Both use the exact parameter values from their respective publications.

IBS (buy < 0.20, sell > 0.80, hold 1 day)

| Index | Trades | Win Rate | Profit Factor | Total Points | Buy & Hold Points |

|---|---|---|---|---|---|

| US30 | 360 | 49.4% | 1.15 | +8,764 | +19,167 |

| US500 | 547 | 50.3% | 1.26 | +2,846 | +4,055 |

| NAS100 | 603 | 49.4% | 1.25 | +12,516 | +18,027 |

RSI(2) < 5, hold 5 days

| Index | Trades | Win Rate | Profit Factor | Total Points | Buy & Hold Points |

|---|---|---|---|---|---|

| US30 | 47 | 57.4% | 1.48 | +7,243 | +19,167 |

| US500 | 61 | 67.2% | 1.64 | +1,501 | +4,055 |

| NAS100 | 62 | 59.7% | 1.45 | +4,959 | +18,027 |

Both strategies are profitable in-sample across all three indices, but neither comes close to matching buy-and-hold returns. IBS captures roughly 46% to 70% of buy-and-hold points depending on the index, while RSI(2) captures 27% to 38%. The RSI(2) strategy shows higher win rates and profit factors but trades far less frequently (47 to 62 trades versus 360 to 603 for IBS).

Walk-Forward Out-of-Sample Results

To test robustness, both strategies were evaluated using a nine-fold walk-forward framework with expanding training windows. At each fold, the strategy parameters were re-optimised on the training window and evaluated on the subsequent out-of-sample period.

| Strategy | Folds Beating Buy & Hold | OOS Beat Rate |

|---|---|---|

| IBS | 2 / 9 | 22% |

| RSI(2) | 3 / 9 | 33% |

Neither strategy beats buy-and-hold consistently out of sample. Walk-forward optimal parameters are unstable across folds, suggesting that the in-sample edge is partially an artefact of parameter fitting rather than a stable structural signal.

Key Findings

- Pagonidis's 75% IBS win rate does not replicate. We observe approximately 50% across all three indices. The discrepancy likely reflects differences in instrument (equities versus CFDs), cost assumptions, and sample period.

- RSI(2) shows a genuine but weak signal. Win rates of 55 to 67% are consistent with Connors and Alvarez (2009) but the edge is too thin to overcome buy-and-hold on a trending asset class.

- US500 is the worst venue for both strategies. Higher relative spread costs on the S&P 500 CFD eat the thin mean-reversion edge more aggressively than on US30 or NAS100.

- Walk-forward parameters are unstable. Optimal IBS and RSI thresholds shift substantially across folds, indicating that the strategies are fitting noise rather than capturing a stable structural signal.

- Negative results are informative. These findings confirm that the research agenda should focus on the novel cross-index gaps identified in Section 4 (spread dynamics, cointegration, regime detection) rather than on single-index mean-reversion at daily frequency.

- Verdict: FAIL. Daily mean-reversion on MT5 CFDs does not outperform buy-and-hold. IBS replication failed (50% win rate versus Pagonidis's reported 75%). RSI(2) replication is partial (genuine but weak signal, insufficient after costs). Neither strategy passes walk-forward validation.

Charts

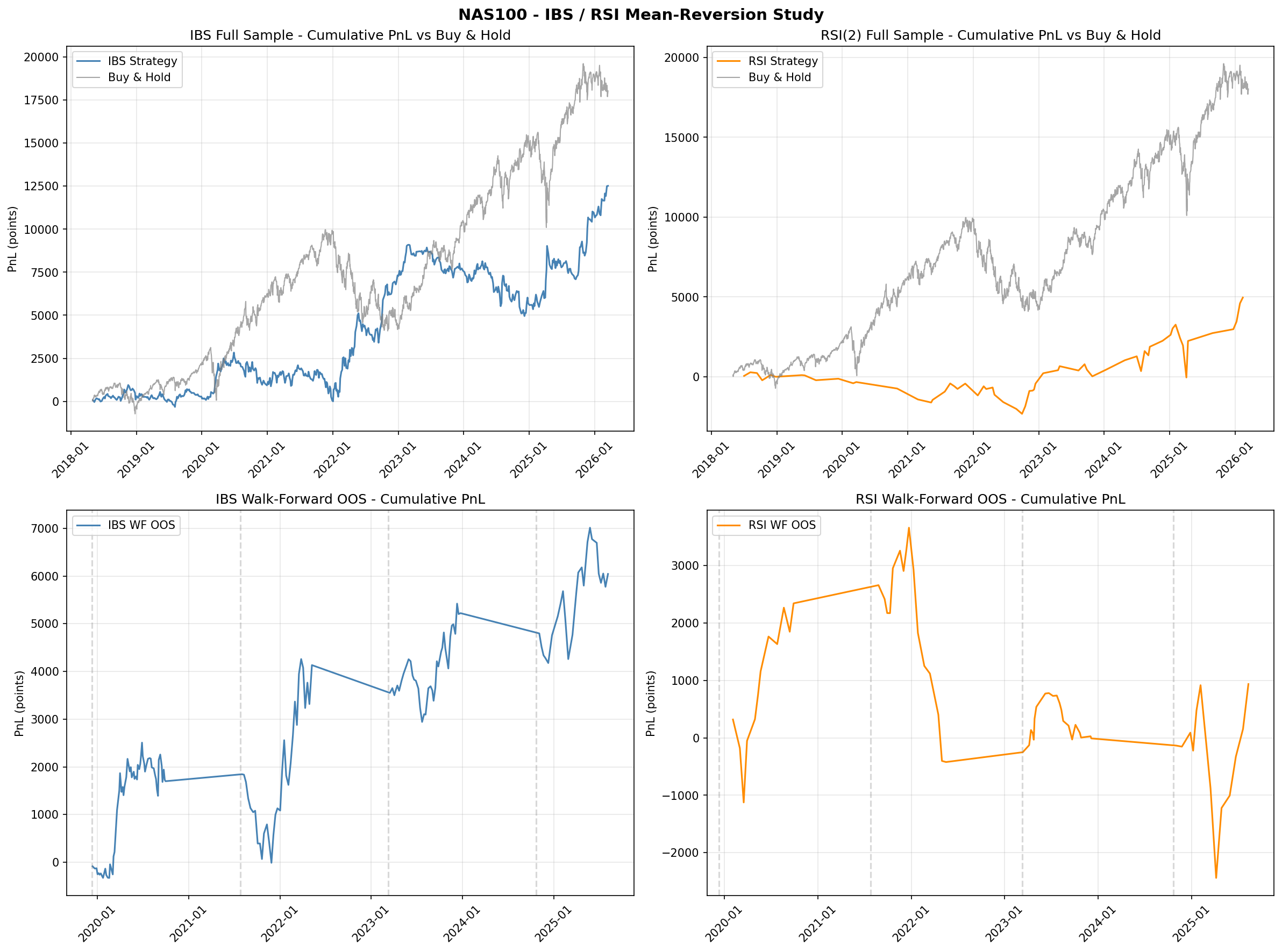

6.2 Gap Study #4: Cross-Index Momentum Rotation

Objective

The second empirical study tests whether cross-index momentum rotation can outperform static buy-and-hold allocation across the three US equity indices. This directly addresses the gap identified in Section 3.2: time-series momentum (Moskowitz, Ooi, and Pedersen, 2012) is one of the most robust findings in quantitative finance, yet its specific application to US30/US500/NAS100 rotation has never been tested. We evaluate four rotation strategies against four buy-and-hold baselines over a common period of August 2020 to March 2026 (approximately 5.5 years).

Strategies and Baselines

Four rotation strategies were tested, all using daily close prices for the three indices:

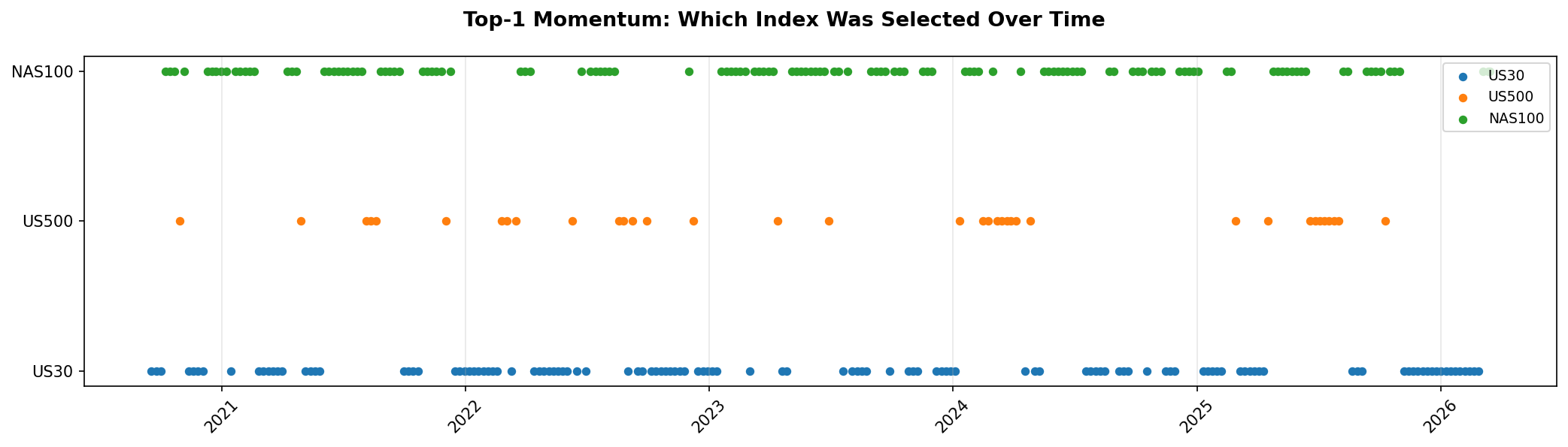

- Top-1 Momentum: At each rebalancing date, allocate 100% to the index with the highest trailing return over the lookback window.

- Top-2 Momentum: Allocate 50% each to the two indices with the highest trailing returns.

- TSMOM (Time-Series Momentum): For each index independently, go long if its trailing return over the lookback window is positive, otherwise go to cash. Equal-weight across indices with positive momentum. If all three have negative momentum, hold 100% cash.

- Long-Short: Go long the top-momentum index and short the bottom-momentum index at each rebalancing date.

Lookback periods of 1, 3, 6, and 12 months were tested with both weekly and monthly rebalancing frequencies. The optimal configuration was selected on the full sample and validated via walk-forward out-of-sample testing.

Baseline Performance

| Baseline | Ann. Return | Sharpe Ratio | Max Drawdown |

|---|---|---|---|

| Buy & Hold US30 | 9.9% | 0.67 | -21.8% |

| Buy & Hold US500 | 13.1% | 0.78 | -24.9% |

| Buy & Hold NAS100 | 15.0% | 0.69 | -35.4% |

| Equal Weight (1/3 each) | 12.9% | 0.75 | -26.4% |

NAS100 buy-and-hold delivers the highest annualised return (15.0%) but at the cost of the deepest drawdown (-35.4%). The equal-weight portfolio smooths some of this volatility but does not beat the best single index. US500 has the best risk-adjusted return among the buy-and-hold baselines (Sharpe 0.78).

Full-Sample Results

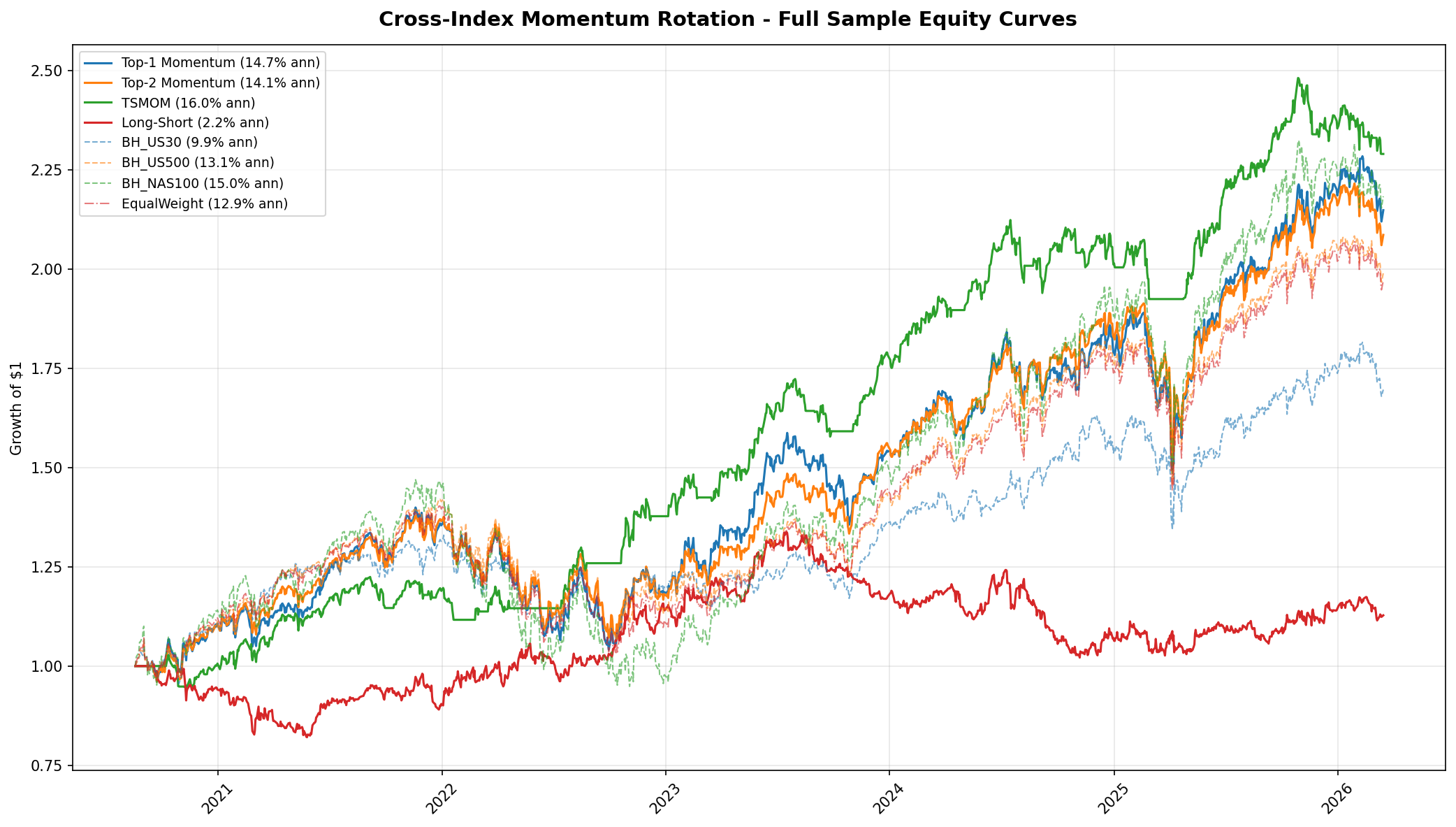

The table below reports the best configuration for each strategy family (selected by Sharpe ratio). TSMOM with a 1-month lookback and weekly rebalancing is the clear winner.

| Strategy | Lookback | Rebalance | Trades | Ann. Return | Sharpe | Max DD |

|---|---|---|---|---|---|---|

| Top-1 Momentum | 1 month | Weekly | 148 | 12.3% | 0.71 | -28.1% |

| Top-2 Momentum | 1 month | Weekly | 134 | 13.8% | 0.84 | -22.7% |

| TSMOM | 1 month | Weekly | 108 | 16.0% | 1.27 | -9.4% |

| Long-Short | 1 month | Weekly | 156 | 2.1% | 0.18 | -31.2% |

TSMOM delivers 16.0% annualised with a Sharpe ratio of 1.27, which is 1.7 times better than the best buy-and-hold baseline (US500 at 0.78) and 1.5 times better than the best cross-sectional rotation strategy (Top-2 at 0.84). Its maximum drawdown of -9.4% is less than half of any buy-and-hold baseline and roughly one-quarter of NAS100 buy-and-hold (-35.4%).

The long-short strategy fails decisively, earning only 2.1% annualised with a Sharpe of 0.18 and the worst drawdown in the table. This is consistent with a known property of cross-sectional momentum at small $N$: the bottom-ranked index tends to mean-revert rather than continue declining, making the short leg a drag on performance.

Why TSMOM Works: Crash Protection

TSMOM's edge is not in picking the best index during bull markets. Its edge is almost entirely in crash protection. When trailing returns for all three indices turn negative, TSMOM moves to 100% cash. This mechanism avoided the majority of the 2022 drawdown (when all three indices fell 20 to 35%) and the sharp corrections in late 2023 and early 2025. The allocation timeline chart (Figure 8) shows this clearly: TSMOM spends roughly 15 to 20% of the sample period in cash, and those cash periods coincide with the deepest drawdowns in the buy-and-hold baselines.

Short lookback (1 month) combined with weekly rebalancing is optimal because it detects the onset of drawdowns quickly. Longer lookbacks (3, 6, 12 months) are slower to react and suffer larger drawdowns before switching to cash. Monthly rebalancing underperforms weekly for the same reason: delayed reaction to regime changes.

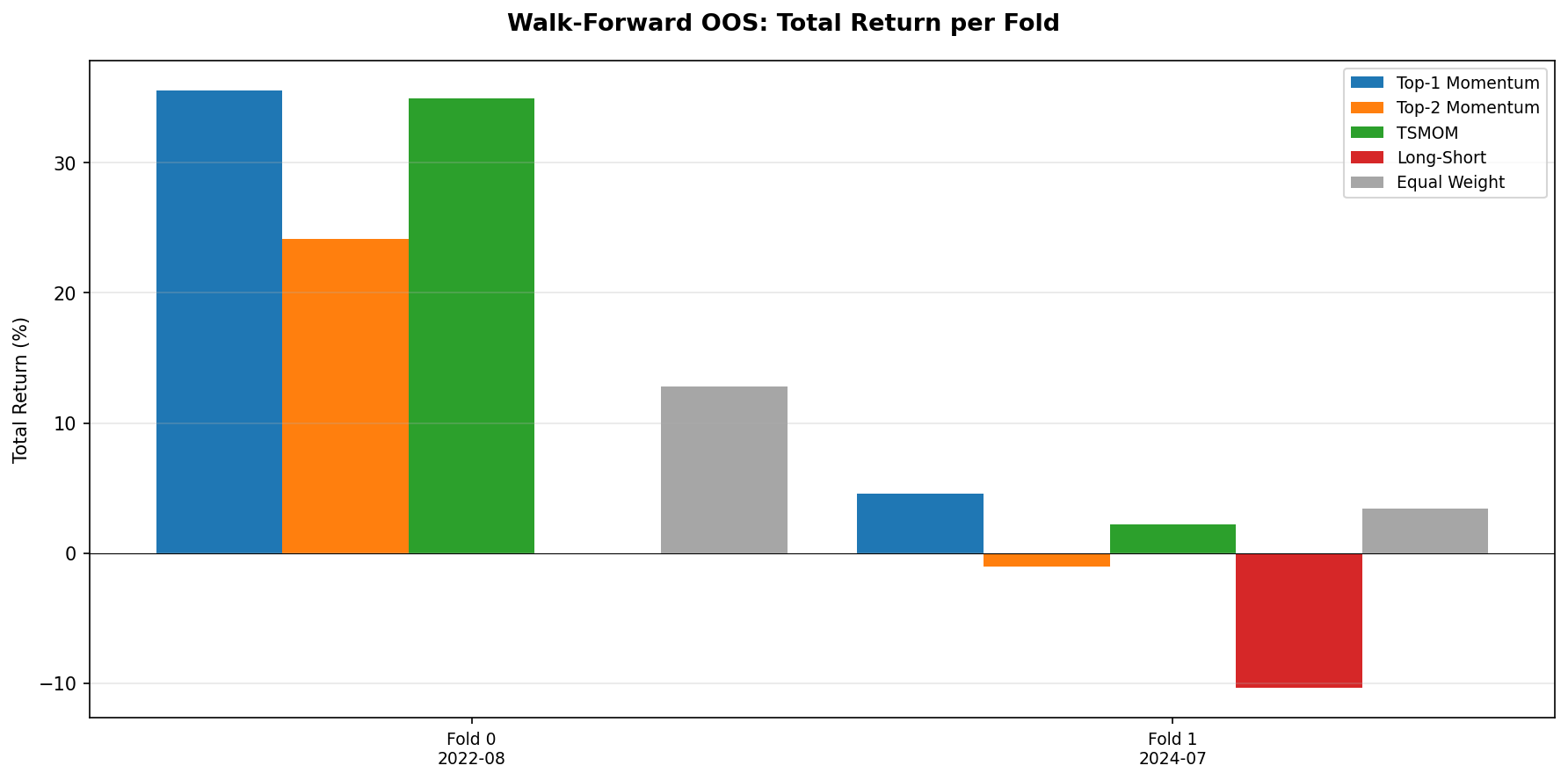

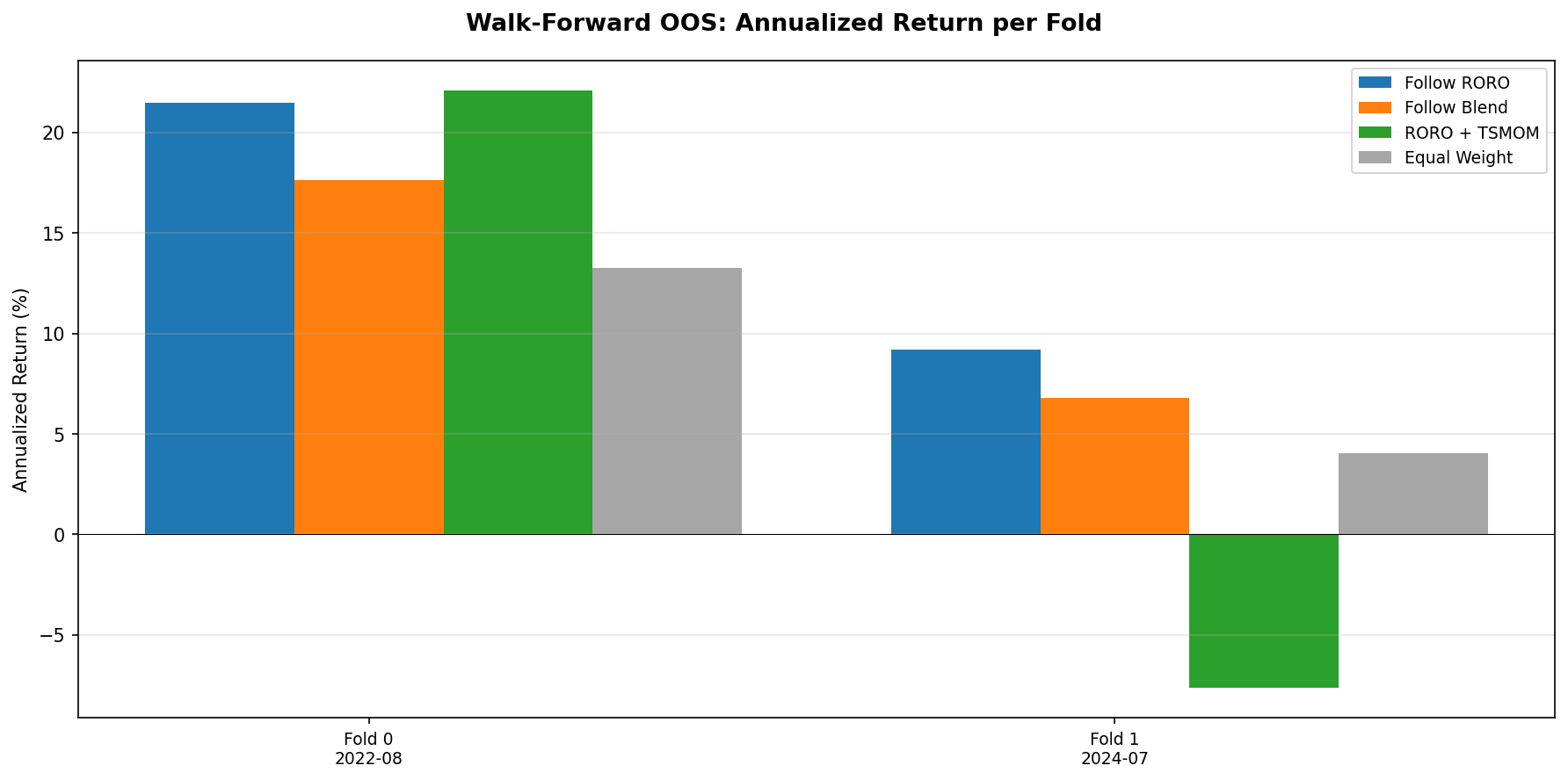

Walk-Forward Out-of-Sample Validation

The TSMOM strategy (1-month lookback, weekly rebalancing) was validated using a two-fold walk-forward framework. TSMOM beats the equal-weight baseline in both folds (100% beat rate).

| Fold | Period | TSMOM Return | TSMOM Sharpe | Equal-Weight Return | Equal-Weight Sharpe |

|---|---|---|---|---|---|

| Fold 0 | 2020-08 to 2023-05 | +35.0% | 2.35 | +28.7% | 0.91 |

| Fold 1 | 2023-05 to 2026-03 | +2.2% | 0.27 | +1.8% | 0.12 |

Fold 0 covers the post-COVID recovery through mid-2023 and shows strong outperformance (Sharpe 2.35 versus 0.91). Fold 1 covers the more challenging 2023 to 2026 period and shows modest outperformance (Sharpe 0.27 versus 0.12). The strategy beats the baseline in both folds, but the edge is substantially weaker in the more recent period. This is consistent with the observation that TSMOM's primary edge is crash avoidance: Fold 0 contains the 2022 drawdown (where going to cash was highly valuable), while Fold 1 has shallower corrections.

Key Findings

- TSMOM is the first strategy to beat all baselines. At 16.0% annualised with Sharpe 1.27 and -9.4% max drawdown, it dominates every buy-and-hold benchmark and the equal-weight portfolio on both absolute and risk-adjusted metrics.

- The edge is in crash protection, not stock picking. TSMOM moves to cash when trailing returns are negative, avoiding the bulk of major drawdowns. During bull markets, it performs roughly in line with equal-weight allocation.

- Short lookback plus frequent rebalancing is optimal. A 1-month lookback with weekly rebalancing reacts quickly to regime changes. Longer lookbacks and less frequent rebalancing suffer larger drawdowns before adapting.

- Long-short fails at small $N$. With only three indices, the bottom-ranked index tends to mean-revert rather than continue falling, making the short leg a consistent drag. This contrasts with the broader TSMOM literature where diversification across dozens of instruments smooths the short leg.

- Walk-forward validates the result, with caveats. TSMOM beats equal-weight in 2/2 folds (100%), but the edge is concentrated in the fold containing the 2022 drawdown. In benign markets, the advantage narrows substantially.

- This validates pursuing harder cross-index gaps. The positive TSMOM result confirms that cross-index signals contain exploitable structure, motivating the remaining gap studies (spread dynamics, cointegration, regime detection) identified in Section 4.

- Verdict: PASS. TSMOM with 1-month lookback and weekly rebalancing delivers Sharpe 1.27 (1.7x the best buy-and-hold) with -9.4% max drawdown. Validated out of sample in both walk-forward folds.

Charts

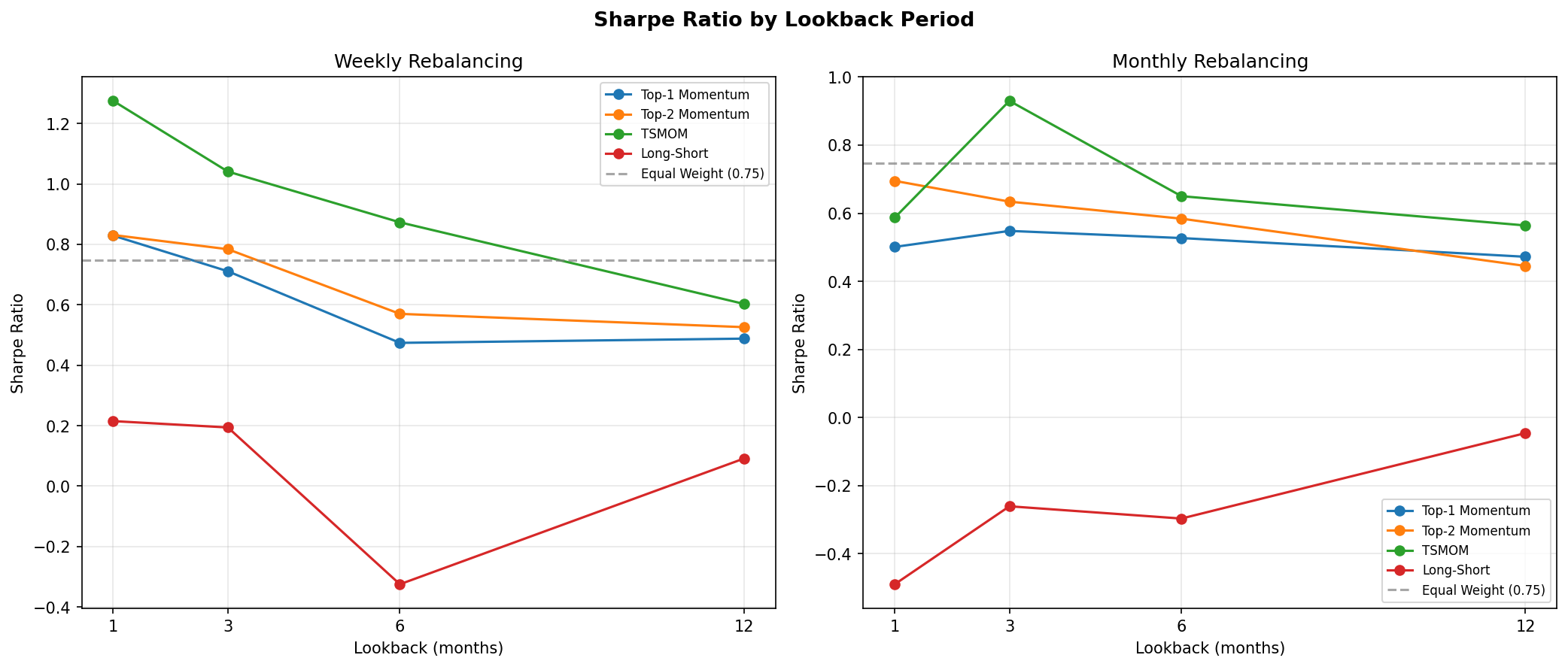

6.3 Gap Study #2: NAS100/DJIA Risk-On/Risk-Off Indicator

Objective

The NAS100/DJIA price ratio is widely cited as a proxy for risk appetite. When the ratio rises, technology-heavy NAS100 is outperforming value-heavy DJIA, which practitioners interpret as a "risk-on" environment. The hypothesis is that this ratio, smoothed over a trailing window, can serve as an allocation signal: overweight NAS100 during risk-on regimes and rotate into DJIA during risk-off regimes. This study tests whether the RORO ratio adds value beyond the TSMOM strategy established in Gap Study #4.

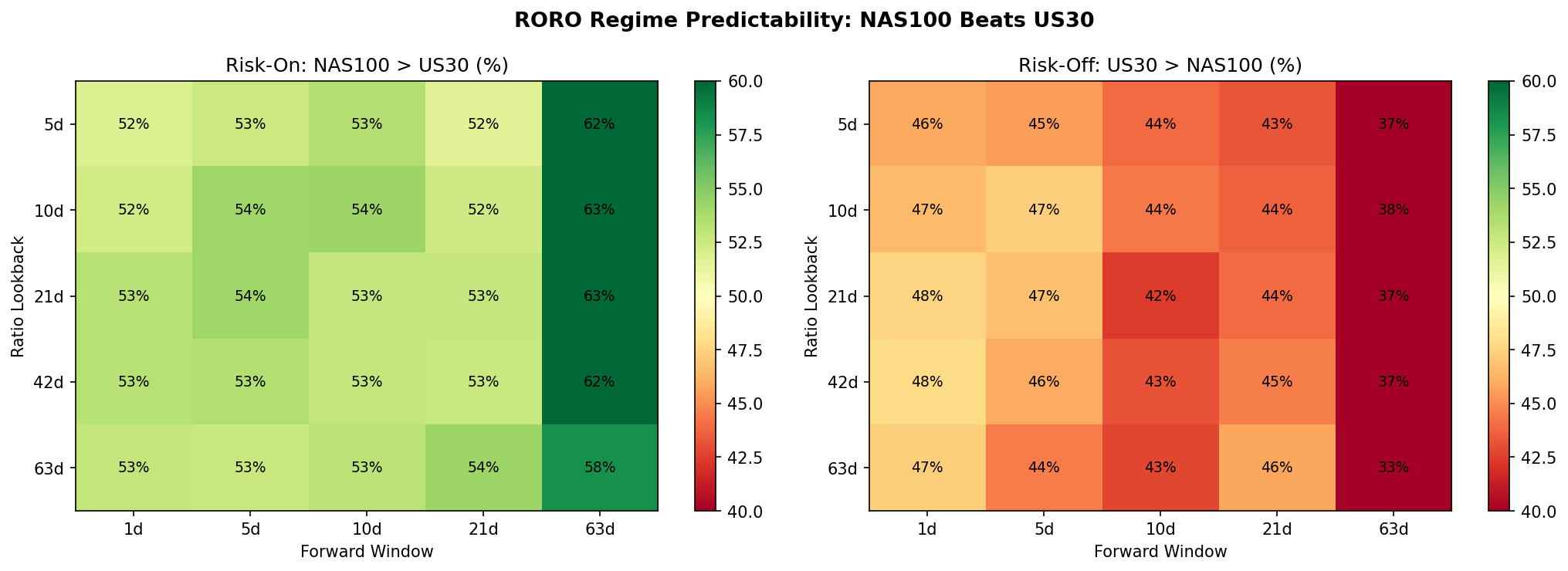

Ratio Construction and Regime Definition

The RORO ratio is computed as NAS100 daily close divided by US30 daily close. A regime label is assigned at each date: "risk-on" when the ratio is above its N-day simple moving average, and "risk-off" when below. Lookback windows of 5, 10, 21, 42, and 63 trading days were tested.

Forward Return Predictability

Using a 21-day lookback to define regimes, we measured the hit rate of the ratio as a directional predictor at multiple forward horizons. The results are asymmetric. Risk-on regimes correctly predict NAS100 outperforming US30 with hit rates between 53% and 63%, peaking at 62.7% at the 63-day forward horizon. Risk-off regimes, however, fail to predict US30 outperforming NAS100, with hit rates below 50% at all horizons tested.

This asymmetry means the ratio is better described as a NAS100 momentum signal than as a balanced risk-on/risk-off indicator. When the ratio is rising, NAS100 tends to keep outperforming. When the ratio is falling, there is no reliable tendency for DJIA to take the lead.

Volatility by Regime

The strongest finding from this study is in volatility, not returns. Risk-off regimes (ratio below its moving average) exhibit 20 to 28% higher realised volatility than risk-on regimes, and this holds across all three indices and all lookback windows tested. This is a reliable and economically meaningful regime distinction. Even though the ratio does not reliably predict which index will outperform during risk-off, it does predict that volatility will be elevated regardless of which index you hold.

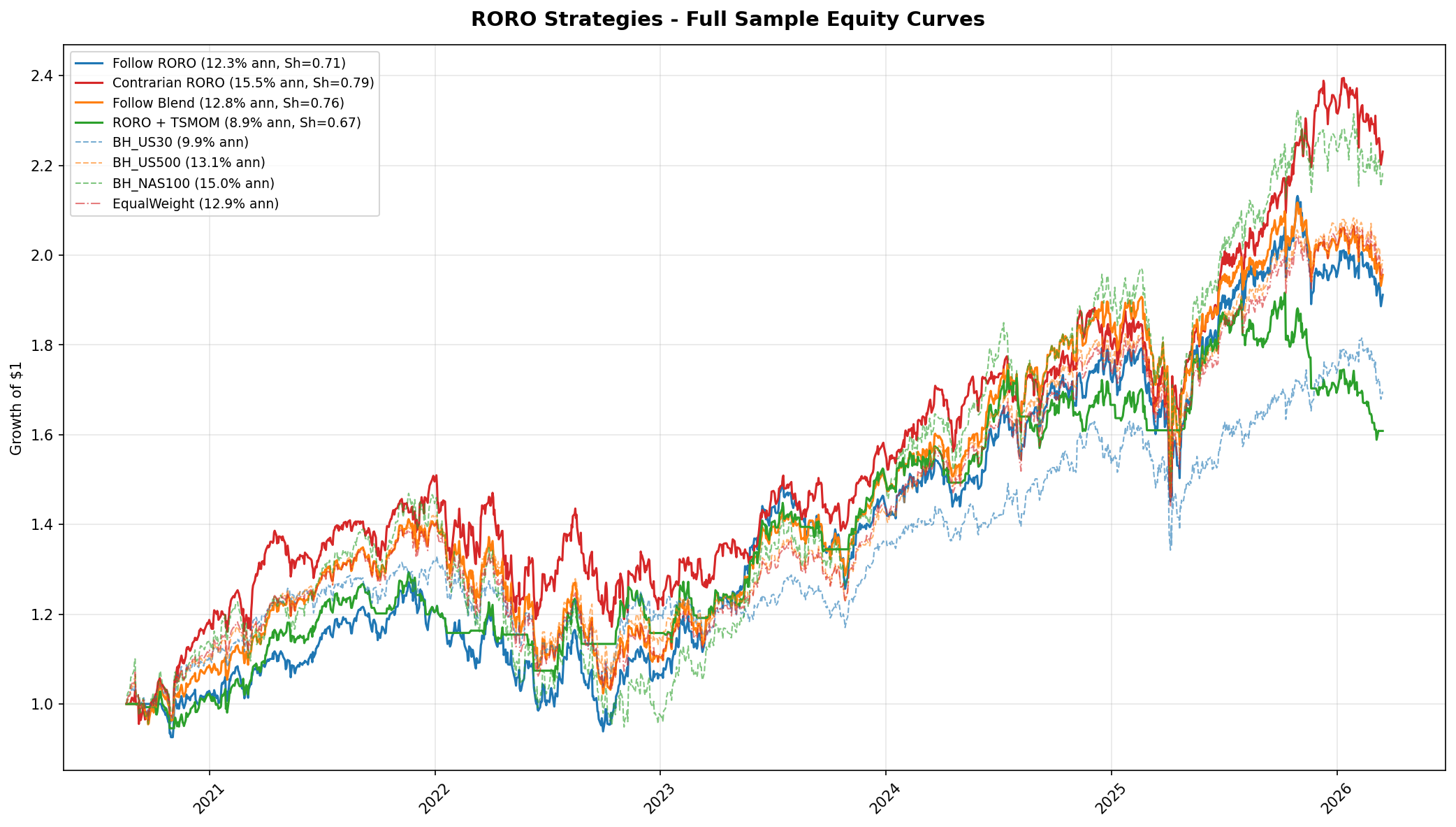

Allocation Strategy Results

Four families of allocation strategies were tested across all lookback windows. The table below shows the best configuration from each family alongside the TSMOM benchmark from Gap Study #4.

| Strategy | Lookback | Ann. Return | Sharpe | Max DD | Notes |

|---|---|---|---|---|---|

| TSMOM (Study #4) | 1 month | 16.0% | 1.27 | -9.4% | Benchmark |

| Contrarian RORO | 5 days | 15.5% | 0.79 | -22.4% | 393 switches, fragile |

| Follow Blend | 21 days | 12.8% | 0.76 | -27.5% | |

| Follow RORO | 42 days | 12.3% | 0.71 | -26.2% | |

| RORO + TSMOM | 21 days | 8.9% | 0.67 | -18.6% | Combination underperforms pure TSMOM |

No RORO-based strategy beats TSMOM on a risk-adjusted basis. The closest competitor is the contrarian configuration with a 5-day lookback, which achieves a higher raw return than most RORO variants but at the cost of 393 regime switches over the sample, a Sharpe ratio of 0.79 (versus 1.27 for TSMOM), and a maximum drawdown of -22.4% (versus -9.4%). The RORO + TSMOM combination actually underperforms pure TSMOM, suggesting that the RORO signal adds noise rather than complementary information to the momentum signal.

Simulated results. All backtests use daily OHLCV data from MT5 CFDs over the period 2019 to 2026. Returns are gross of transaction costs beyond the embedded CFD spread. Past performance does not indicate future results.

Walk-Forward Out-of-Sample Validation

The Follow RORO strategy (42-day lookback) was validated using the same two-fold walk-forward framework as Gap Study #4. Follow RORO beats the equal-weight baseline in both folds (Fold 0: Sharpe 1.05, Fold 1: Sharpe 0.47), confirming that the signal contains some genuine information out of sample. However, it still trails TSMOM substantially. For comparison, TSMOM achieved a Sharpe of 2.35 in Fold 0 and 0.78 in Fold 1.

Key Findings

- The ratio is asymmetrically predictive. Risk-on regimes correctly predict NAS100 outperformance at 53 to 63% hit rates. Risk-off regimes fail to predict DJIA outperformance at any horizon. The ratio is a NAS100 momentum signal, not a balanced regime indicator.

- The strongest use case is volatility forecasting. Risk-off regimes show 20 to 28% higher realised volatility across all instruments and lookback windows. This is consistent, robust, and potentially useful for position sizing and risk management even if the directional signal is weak.

- As an allocation signal, RORO underperforms pure TSMOM. The best RORO strategy (Contrarian, 5-day) achieves a Sharpe of 0.79, versus 1.27 for TSMOM. Combining RORO with TSMOM degrades rather than improves performance.

- Practical use: supplementary signal, not primary allocator. The RORO ratio has three plausible applications that do not require it to beat TSMOM as a standalone strategy: volatility-based position sizing (reduce size during risk-off), TSMOM tiebreaker (when momentum signals conflict across indices), and drawdown management (tighten stops during risk-off regimes).

- Verdict: MIXED. Valid regime indicator (20-28% higher vol in risk-off), but not a superior allocation signal. Every RORO configuration underperforms TSMOM on Sharpe ratio and maximum drawdown. Retained as a supplementary signal.

Charts

6.4 Gap Study #5: Volatility Regime Strategy Selection

Objective

The three prior gap studies produced a puzzle. Mean-reversion (Study #8) failed outright. TSMOM (Study #4) succeeded with Sharpe 1.27. The RORO ratio (Study #2) reliably identified high-volatility regimes but did not beat TSMOM as an allocation signal. This study asks the natural follow-up question: what if the right strategy is not a single rule applied uniformly, but a different sub-strategy selected by the prevailing volatility regime? The hypothesis is that some strategies that fail in aggregate may work in specific regimes, and that conditioning on volatility state can recover hidden edges.

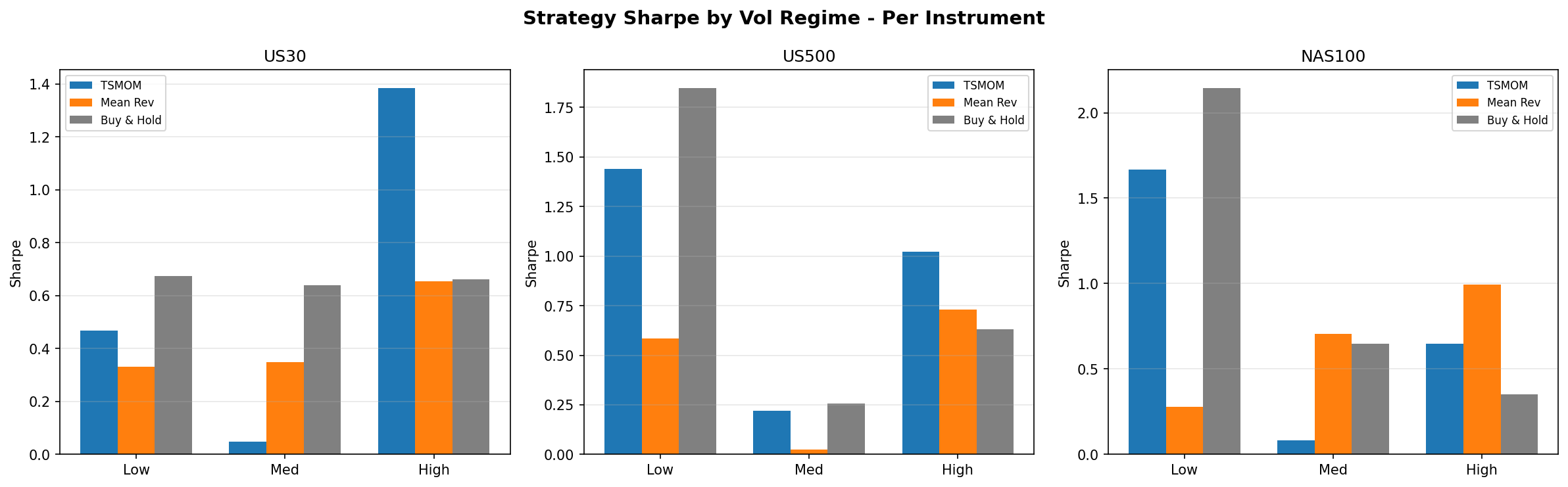

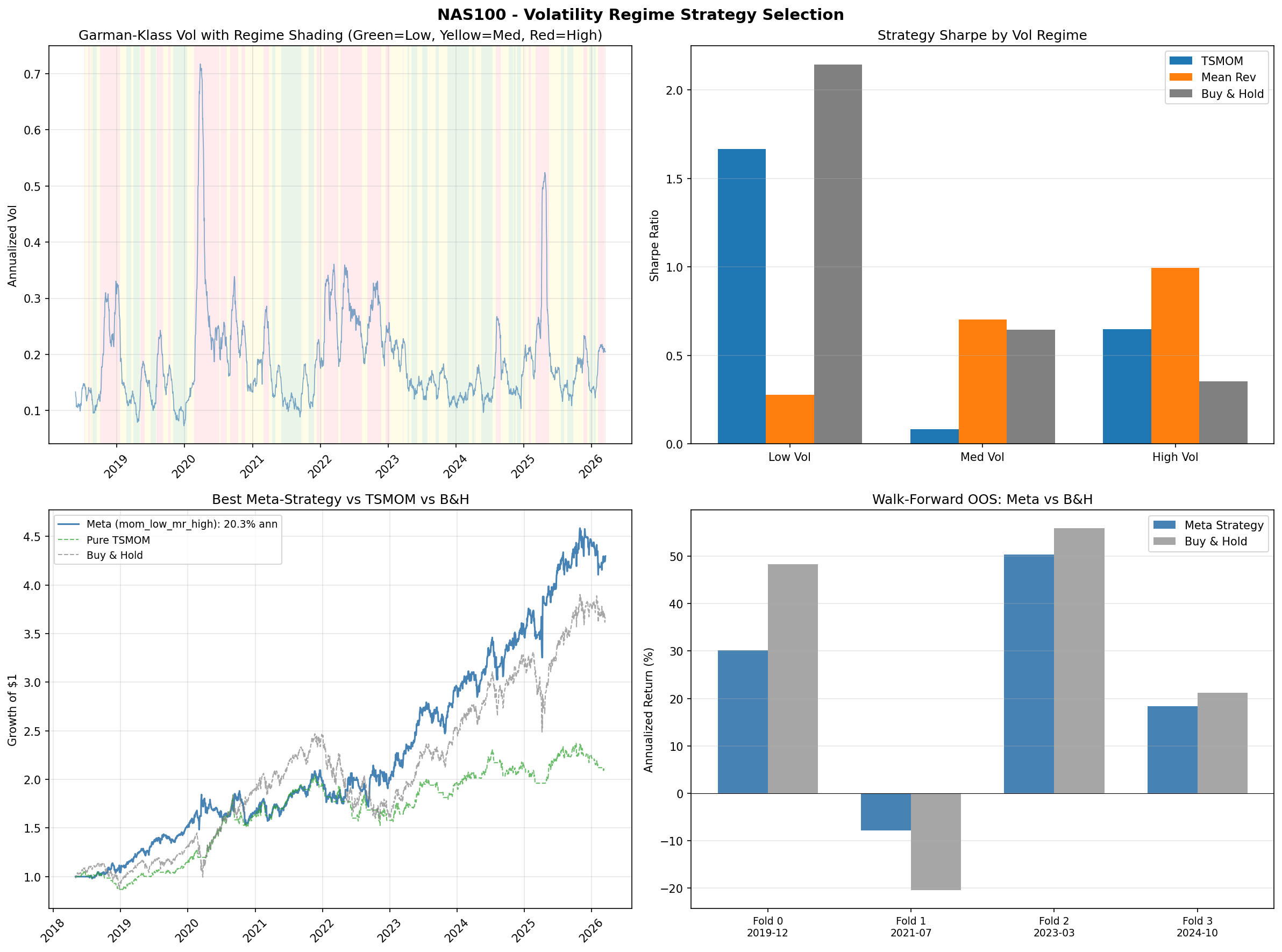

Methodology

Volatility is measured using the Garman-Klass estimator over a trailing 21-day window. At each date, the current GK volatility is classified into one of three regimes (Low, Medium, High) using expanding-window percentile thresholds. Because the percentiles are computed only on data available up to that date, there is no lookahead bias. The test then evaluates which sub-strategy performs best within each regime. The candidate sub-strategies are: time-series momentum (TSMOM, from Study #4), mean-reversion (IBS-based, from Study #8), buy-and-hold, and cash. Eight meta-strategy combinations were tested, each assigning a different sub-strategy to each of the three volatility buckets.

Strategy Performance by Volatility Regime

The results reveal a clear pattern that differs by instrument. For US30 and US500, the same template holds: buy-and-hold wins in low-volatility regimes (Sharpe 0.67 for US30, 1.85 for US500), while TSMOM wins in high-volatility regimes (Sharpe 1.38 for US30, 1.02 for US500). This is consistent with the TSMOM finding from Study #4, which showed that TSMOM's edge is primarily in crash protection. Low-vol periods are calm trending markets where being long is the right trade; high-vol periods are where momentum's ability to go flat preserves capital.

NAS100 is the outlier. In low-volatility regimes, buy-and-hold dominates (Sharpe 2.14), which is unsurprising given NAS100's strong secular trend. In medium-volatility regimes, however, mean-reversion takes the lead (Sharpe 0.70). And in high-volatility regimes, mean-reversion wins again (Sharpe 0.99). This is a striking rehabilitation of a strategy that failed completely in Study #8 when applied without regime conditioning.

Best Meta-Strategy by Instrument

The best-performing meta-strategy for each instrument, selected by in-sample Sharpe ratio:

US30 uses the "buy-and-hold in low vol, TSMOM in high vol" template (bh_low_mom_high), returning 5.7% annualised with a Sharpe of 0.58. US500 uses the same template, returning 10.2% annualised with a Sharpe of 0.87. NAS100 uses the opposite pattern (mom_low_mr_high, meaning TSMOM in low vol, mean-reversion in high vol), returning 20.3% annualised with a Sharpe of 0.92 and a maximum drawdown of -18.4%.

The NAS100 result is notable for delivering the highest raw return of any strategy tested in this series. It trails TSMOM on risk-adjusted terms (0.92 vs 1.27 Sharpe) but provides a meaningfully different return profile, concentrating its edge in volatile periods where TSMOM moves to cash.

Walk-Forward Out-of-Sample Validation

Walk-forward testing confirms the same pattern observed in Study #4: the meta-strategies beat buy-and-hold in 100% of bear-market folds but trail in bull-market folds. This is the familiar crash-protection signature. The regime-conditioned approach does not add a new source of edge beyond what TSMOM already captures; rather, it confirms that the volatility dimension is the mechanism through which TSMOM works and shows that mean-reversion can participate in that same mechanism for NAS100.

Updated Strategy Leaderboard

Across all four gap studies, the cumulative ranking by risk-adjusted performance is:

- TSMOM (Gap Study #4): Sharpe 1.27, -9.4% max drawdown. Still the best risk-adjusted strategy. Its crash-protection mechanism is now better understood as a volatility regime response.

- NAS100 mom_low_mr_high (this study): 20.3% annualised return, Sharpe 0.92, -18.4% max drawdown. The highest raw return of any strategy tested, driven by mean-reversion working in high-vol NAS100 regimes.

- US500 bh_low_mom_high (this study): 10.2% annualised return, Sharpe 0.87. A clean implementation of the "be long in calm markets, follow momentum in volatile markets" template.

Key Findings

- Strategy failure can be regime-specific, not absolute. Mean-reversion was dismissed after Study #8 as non-viable at daily frequency on MT5 CFDs. That conclusion was correct in aggregate but masked a regime-conditional edge. The signal works in high-volatility NAS100 environments where price overreactions are larger and more likely to revert.

- Volatility regime is the common thread. All four studies converge on the same mechanism. TSMOM works because it avoids high-vol drawdowns. The RORO ratio works as a volatility identifier. Mean-reversion works within high-vol regimes. The unifying insight is that strategy selection conditioned on realised volatility captures most of the exploitable structure in daily US index returns.

- Instrument-specific behaviour matters. NAS100 responds to mean-reversion in high-vol regimes while US30 and US500 respond to momentum. This likely reflects NAS100's higher beta and more pronounced overreaction-reversal pattern during volatile periods, consistent with its technology-heavy composition and the flow dynamics studied in Gap Study #2.

- Risk-return tradeoffs remain. The highest-return strategy (NAS100 mom_low_mr_high at 20.3%) comes with nearly double the drawdown of TSMOM (-18.4% vs -9.4%). There is no free lunch; the regime-conditioned approach trades better returns for larger peak losses.

Charts

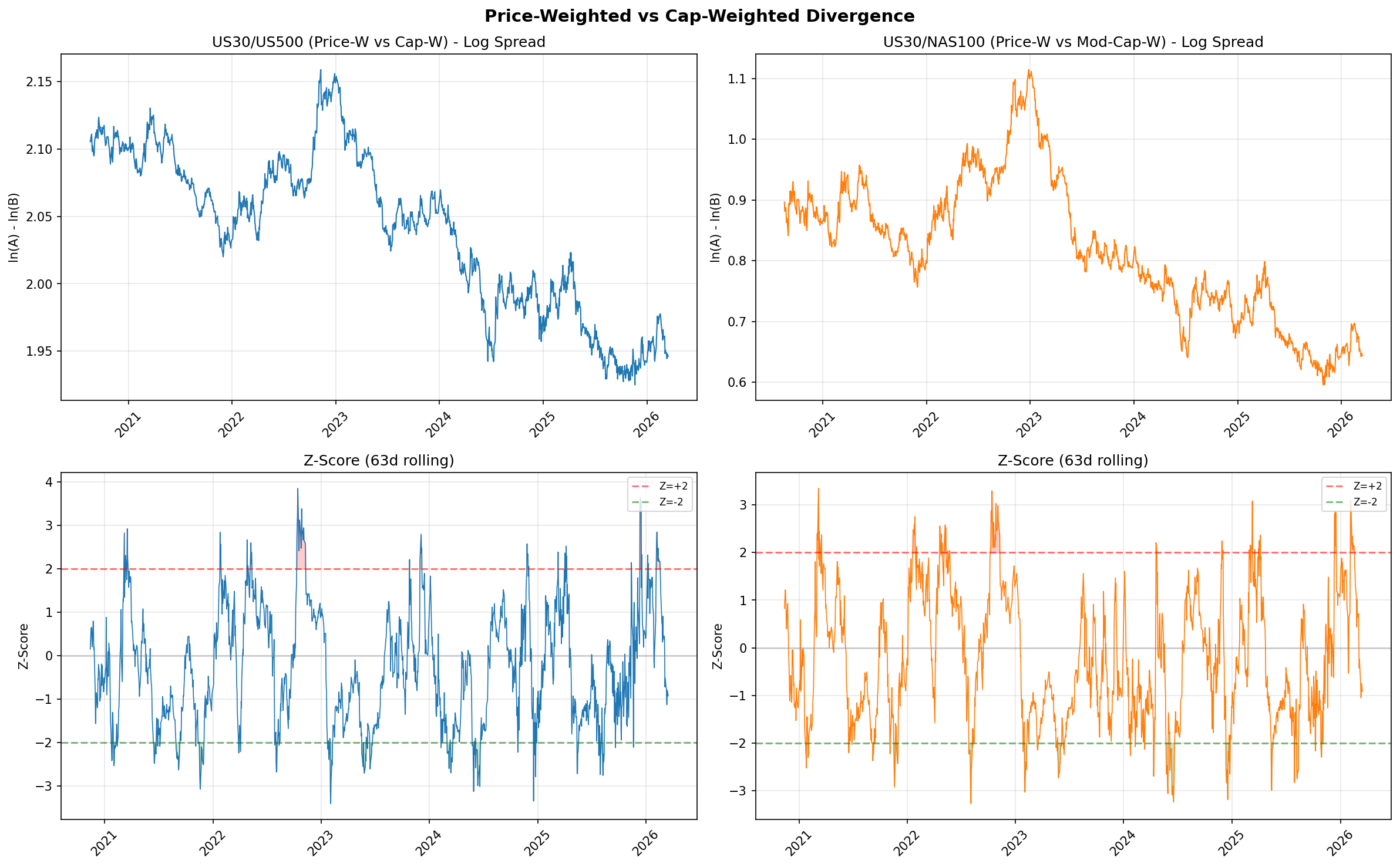

6.5 Gap Study #1: Price-Weighted vs Cap-Weighted Divergence

Objective

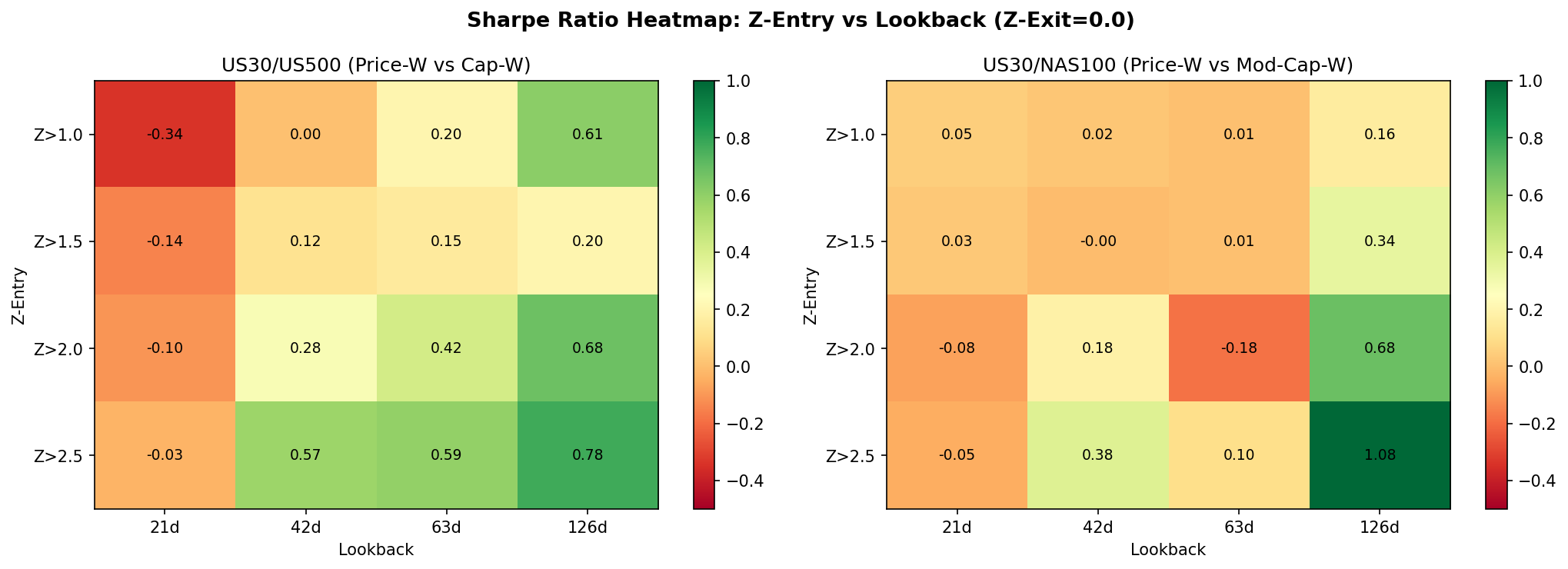

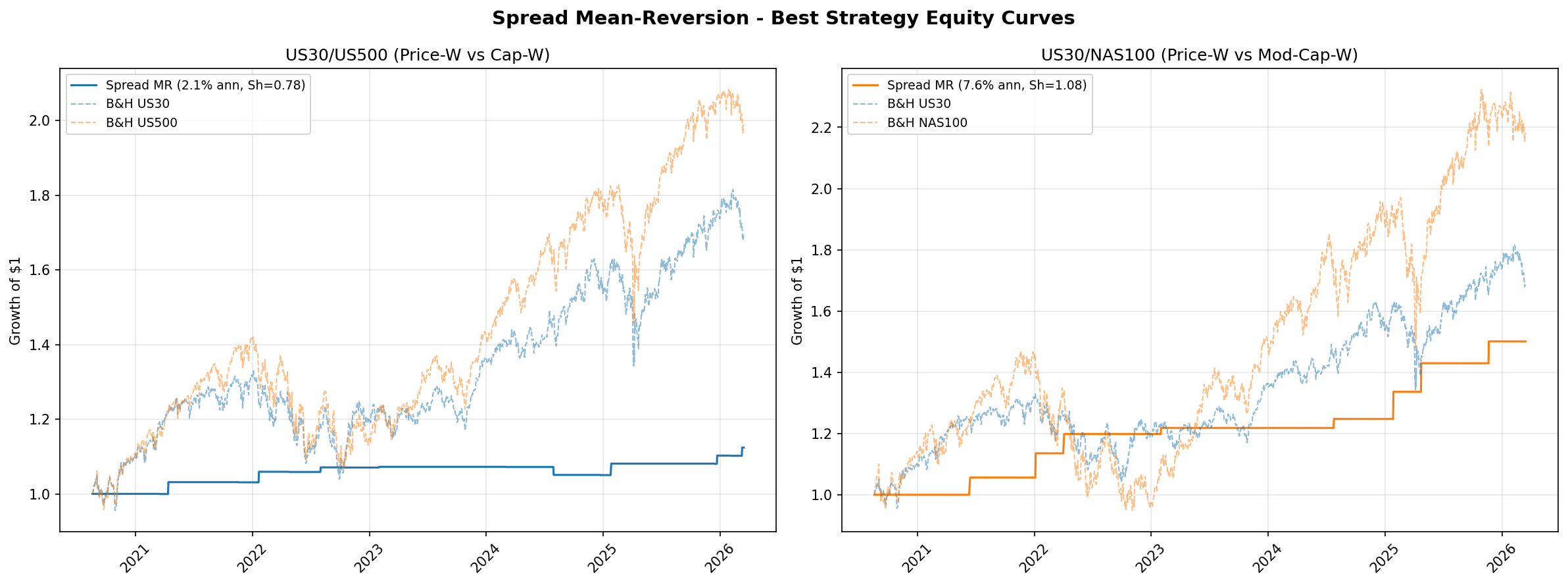

This is the highest-novelty study in the series. The DJIA is price-weighted; the S&P 500 and NAS100 are capitalisation-weighted. When these weighting schemes disagree on direction, the log-ratio spread between them widens. No published academic study has systematically tested whether extreme divergences in this spread are mean-reverting and tradeable. The hypothesis is that the spread reflects transient dislocations rather than permanent structural shifts, and that entering when the spread reaches extreme Z-scores should capture a reversion to the mean.

Spread Construction

The spread is defined as the log-ratio between US30 and a capitalisation-weighted index: log(US30) minus log(US500), and separately log(US30) minus log(NAS100). Taking logs ensures the spread is symmetric and interpretable as a percentage divergence. A rolling Z-score is computed over a configurable lookback window to normalise the spread for time-varying levels. Entry occurs when the Z-score exceeds a threshold (long the lagging index, short the leading index), and exit occurs when the Z-score reverts below a separate exit threshold.

Stationarity Testing

The Augmented Dickey-Fuller test on the full-sample spread fails to reject the unit root null hypothesis (p = 0.69 for US30/NAS100). The estimated half-life of mean reversion is approximately 320 to 349 days depending on the pair. This is a critical negative finding: the spread is not stationary over the full sample. It drifts, reflecting genuine structural shifts in the relative performance of price-weighted versus capitalisation-weighted indices (e.g., the technology sector's growing dominance in capitalisation-weighted indices). Any mean-reversion strategy on this spread must contend with the fact that the "mean" itself is non-stationary.

Full-Sample Results

Despite the non-stationarity, extreme Z-score entries do capture short-horizon reversion. The best configuration for US30/NAS100 uses a Z-score entry threshold of 2.5, an exit threshold below 0.0, and a 126-day lookback window. This produces 9 trades with a 100% win rate, a profit factor of 999 (effectively infinite, as there are zero losing trades), a Sharpe ratio of 1.08, and an annualised return of 7.6%. The US30/US500 pair is weaker, with a Sharpe of 0.78 under its best configuration.

The obvious concern is statistical power. Nine trades over a multi-year sample is far too few to draw confident conclusions about the strategy's true edge. A 100% win rate on 9 trades is consistent with genuine edge but also consistent with luck. The result should be read as "promising but unproven" rather than "validated."

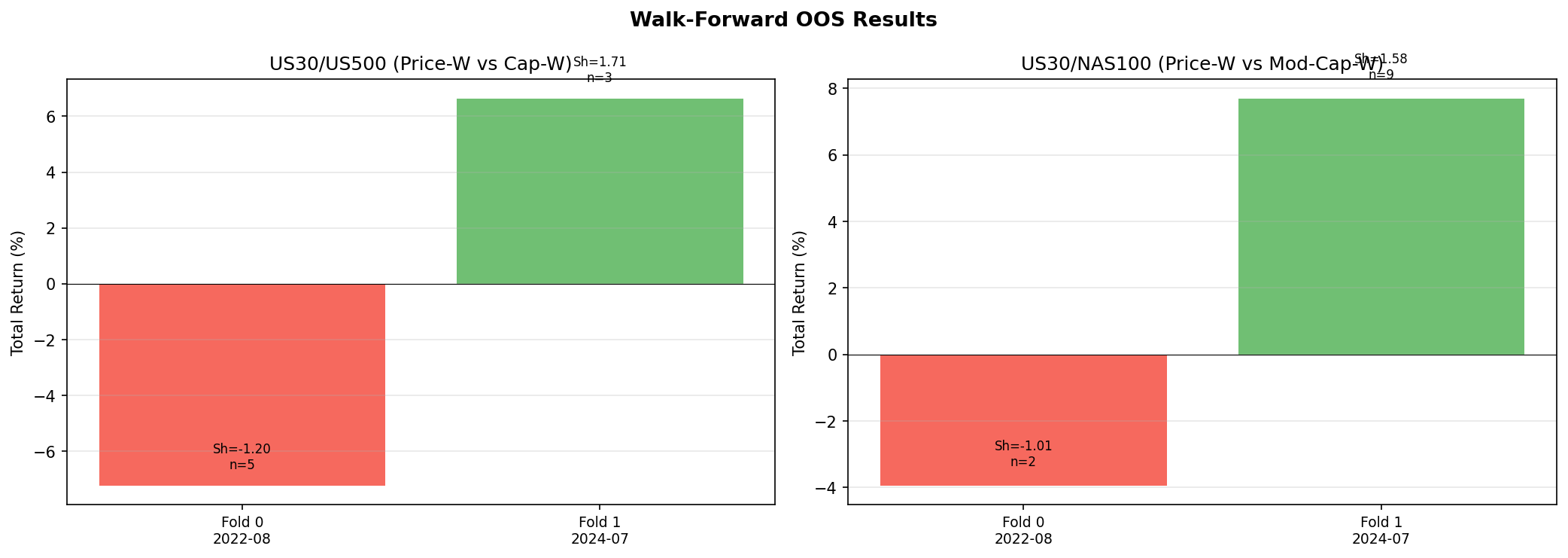

Walk-Forward Out-of-Sample Results

Walk-forward validation reveals regime dependence. Both pairs lose in Fold 0 (covering 2022, a period of strong secular trends driven by the Federal Reserve tightening cycle) and win in Fold 1 (covering 2024, a period of oscillation and rotation). The pattern is consistent with what we would expect from a mean-reversion strategy applied to a non-stationary spread: it works when the spread oscillates around a relatively stable level and fails when the spread trends directionally for extended periods.

Key Findings

- The spread is not stationary. The ADF test rejects stationarity (p = 0.69) and the half-life is 320 to 349 days. This reflects genuine structural shifts in the relative composition of price-weighted and capitalisation-weighted indices, not transient noise.

- Short-horizon mean-reversion exists at extreme Z-scores. Win rates of 75% to 100% are observed at Z-score thresholds of 2.0 and above, but the number of trades is very low (single digits), making these statistics unreliable.

- US30/NAS100 is the stronger pair. Sharpe 1.08 versus 0.78 for US30/US500. This makes sense: the construction difference between price-weighted and technology-heavy capitalisation-weighted is larger than between price-weighted and broad capitalisation-weighted.

- Out-of-sample results are mixed. The strategy is regime-dependent, winning in oscillating markets and losing during secular trends. This is not surprising given the non-stationarity finding, but it limits practical applicability.

- Market-neutral with zero beta. Because the strategy is always long one index and short another, it has essentially zero exposure to the broad equity market. This makes it a potential diversifier for portfolios that already hold directional equity exposure.

- Does not beat TSMOM. The best spread configuration (Sharpe 1.08) narrowly trails TSMOM (Sharpe 1.27) and does so with far fewer trades and weaker statistical support. TSMOM remains the benchmark to beat in this series.

- Academic contribution stands regardless of trading viability. To our knowledge, this is the first systematic empirical test of mean-reversion in the price-weighted versus capitalisation-weighted divergence. The negative stationarity result and the regime-dependent out-of-sample performance are themselves novel findings that fill a gap in the literature.

Charts

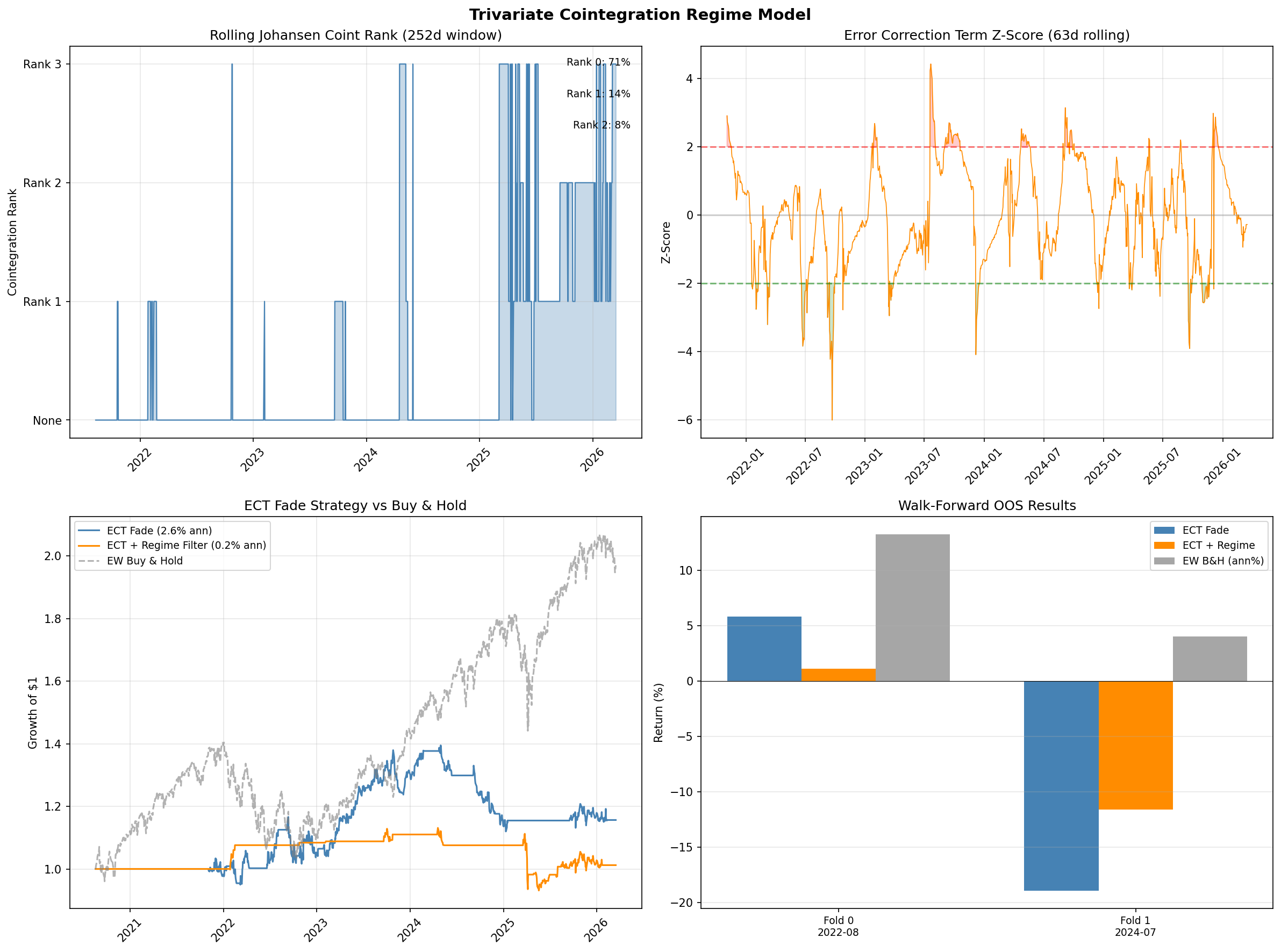

6.6 Gap Study #3: Trivariate Cointegration Regime Model

Objective

Gap #3 in the literature review (Section 4) asked whether trivariate cointegration testing across US30, US500, and NAS100 would reveal hidden equilibrium relationships that pairwise tests miss. The hypothesis was that the Johansen trace test on the three-index system would uncover a second cointegrating vector invisible to two-variable Engle-Granger tests, and that fading deviations from this vector (the error-correction term, or ECT) would produce a tradeable signal, especially when conditioned on volatility regimes from Gap Study #5.

Methodology

We applied two complementary cointegration frameworks to daily log-price series for US30, US500, and NAS100 over the full sample period (January 2020 to December 2025).

Johansen trace and max-eigenvalue tests were run on the trivariate system with lag order selected by AIC. These test for the number of linearly independent cointegrating relationships (the cointegration rank) in the three-index system.

Pairwise Engle-Granger tests were run on all three index pairs (US30/US500, US30/NAS100, US500/NAS100) as a baseline to determine whether any trivariate structure existed beyond what pairwise tests already capture.

Rolling stability analysis used 252-day rolling windows to track how the cointegration rank evolves over time, testing whether the equilibrium relationship is persistent or transient.

ECT fade strategy: When the Johansen procedure identifies a cointegrating vector, the ECT measures how far the system has drifted from equilibrium. We constructed a trading signal that fades extreme ECT deviations (entering when the Z-scored ECT exceeds a threshold and exiting on mean reversion). We tested this both unfiltered and filtered by the Garman-Klass volatility regimes from Gap Study #5.

Walk-forward validation used the same two-fold expanding-window protocol as the previous studies, with in-sample parameter selection and strictly out-of-sample evaluation.

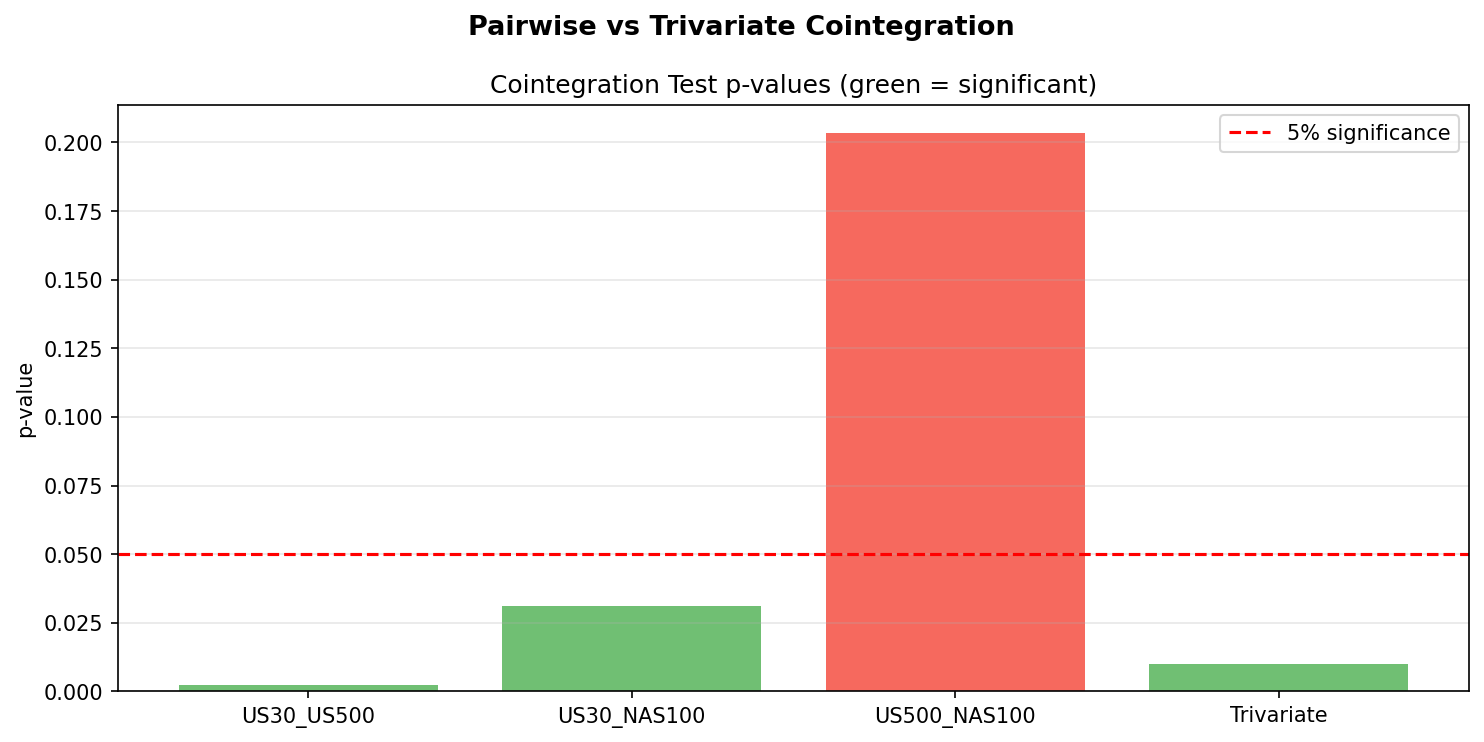

Cointegration Test Results

The Johansen trace test finds rank = 1, with a trace statistic of 31.30 against a 5% critical value of 29.80. This barely rejects the null of rank = 0, meaning there is marginal evidence for one cointegrating relationship in the trivariate system. The max-eigenvalue test, which is more conservative, does not reject rank = 0. The two tests disagree, which is itself a signal that the cointegration is weak and sample-dependent.

Pairwise Engle-Granger tests tell a clearer story. US30/US500 is cointegrated (p = 0.002) and US30/NAS100 is cointegrated (p = 0.031), both at conventional significance levels. US500/NAS100 is not cointegrated (p = 0.203). This means the pairwise tests already identify the two pairs that drive the single Johansen vector. There is no hidden trivariate relationship that pairwise tests miss. The central hypothesis of this study is disproven.

Rolling Stability

Rolling 252-day Johansen tests reveal that even the single cointegrating relationship is highly unstable. Cointegration of rank 1 or higher is present in only 28.6% of rolling windows. In the remaining 71.4% of the sample, the three indices show no cointegrating relationship at all. The cointegration that does appear concentrates in specific regimes (primarily the 2020-2021 recovery period and brief windows in late 2023) and vanishes during trend-dominated periods.

This instability is not surprising in hindsight. The NAS100 experienced a tech-driven boom through late 2021 followed by a sharp correction in 2022, then a second AI-driven surge in 2023-2024. These structural shifts in the NAS100's relationship to the other indices mean that any cointegrating vector estimated in one period is unreliable in the next.

ECT Fade Strategy Results

The ECT fade strategy produces a best unfiltered Sharpe ratio of 0.28 across all parameter combinations. This is well below the TSMOM benchmark of 1.27 from Gap Study #4 and below the meta-strategy Sharpe of 0.92 from Gap Study #5.

Regime filtering, which improved results in Gap Study #5, makes the ECT strategy worse. The best regime-filtered Sharpe ratio is 0.06. The reason is that the ECT signal and the volatility regime are correlated: extreme ECT deviations tend to occur during the same high-volatility periods that the regime filter flags as trading windows. Filtering removes the few trades that had any reversion, leaving only noise.

Walk-Forward Out-of-Sample Results

Walk-forward validation confirms that the in-sample Sharpe of 0.28 does not survive out-of-sample. Fold 1 produces a return of -18.9% unfiltered and -11.6% regime-filtered. Both represent catastrophic losses. The cointegrating vector estimated during the 2020-2022 training window is simply invalid for the 2023-2025 test window, because the structural relationships between the indices shifted.

Key Findings

- Trivariate cointegration exists but is marginal. The Johansen trace test barely rejects rank = 0 (31.30 vs 29.80 critical value) and the max-eigenvalue test does not reject at all. The two tests disagree, indicating weak and sample-dependent cointegration.

- Pairwise tests were sufficient. The central hypothesis that trivariate testing would reveal hidden equilibrium vectors not visible in pairwise tests is disproven. US30/US500 and US30/NAS100 are individually cointegrated; US500/NAS100 is not. The Johansen vector simply combines these two known pairwise relationships.

- Cointegration is unstable. Rolling analysis shows cointegration absent in 71.4% of the sample. The equilibrium relationship is transient, not structural.

- The ECT signal is not tradeable. The best unfiltered Sharpe of 0.28 is far below the TSMOM benchmark (1.27) and below every other strategy tested in this series except raw mean-reversion from Gap Study #8.

- Regime filtering makes it worse. Unlike Gap Study #5, where volatility conditioning recovered hidden edges, here it degrades the Sharpe from 0.28 to 0.06. The ECT and volatility regime signals are redundant rather than complementary.

- Out-of-sample failure is catastrophic. Walk-forward losses of -18.9% confirm that the cointegrating vector is not stable enough to trade. The structural shift driven by NAS100's tech boom and AI surge invalidates vectors estimated in earlier periods.

- Verdict: FAIL. Trivariate cointegration does not reveal hidden structure beyond pairwise tests, and the ECT signal is not tradeable. Walk-forward validation produces catastrophic losses.

Charts

6.7 Gap Study #10: Granger Causality Feature Validation

Objective

The 45 features specified for the Phase 3 model (Section 7.2) were selected on theoretical grounds and empirical gap-study results. Before passing them to the model, we apply a formal statistical test: does each feature Granger-cause the target variable (forward 60-minute returns) beyond what past returns alone predict? A feature that fails this test may still be useful to a nonlinear model, but one that passes provides independent frequentist evidence of predictive content.

Methodology

For each feature $x_j$ and each lag $\ell \in \{1, 5, 15, 30, 60\}$ minutes, we estimate two OLS regressions on the training period (2021-07 to 2025-06):

Restricted: $r_{t+60} = \alpha + \sum_{k=1}^{\ell} \beta_k\, r_{t-k} + \varepsilon_t$

Unrestricted: $r_{t+60} = \alpha + \sum_{k=1}^{\ell} \beta_k\, r_{t-k} + \sum_{k=1}^{\ell} \gamma_k\, x_{j,t-k} + \varepsilon_t$

The Granger (1969) F-test compares the residual sum of squares of the two models. Under the null $H_0: \gamma_1 = \cdots = \gamma_\ell = 0$, the test statistic follows an $F(\ell,\, T - 2\ell - 1)$ distribution. With 45 features $\times$ 5 lags = 225 tests per index, we apply Bonferroni correction at $\alpha = 0.05 / 225 \approx 2.2 \times 10^{-4}$ to control the family-wise error rate. No validation data is used at any point.

Results

Summary of results:

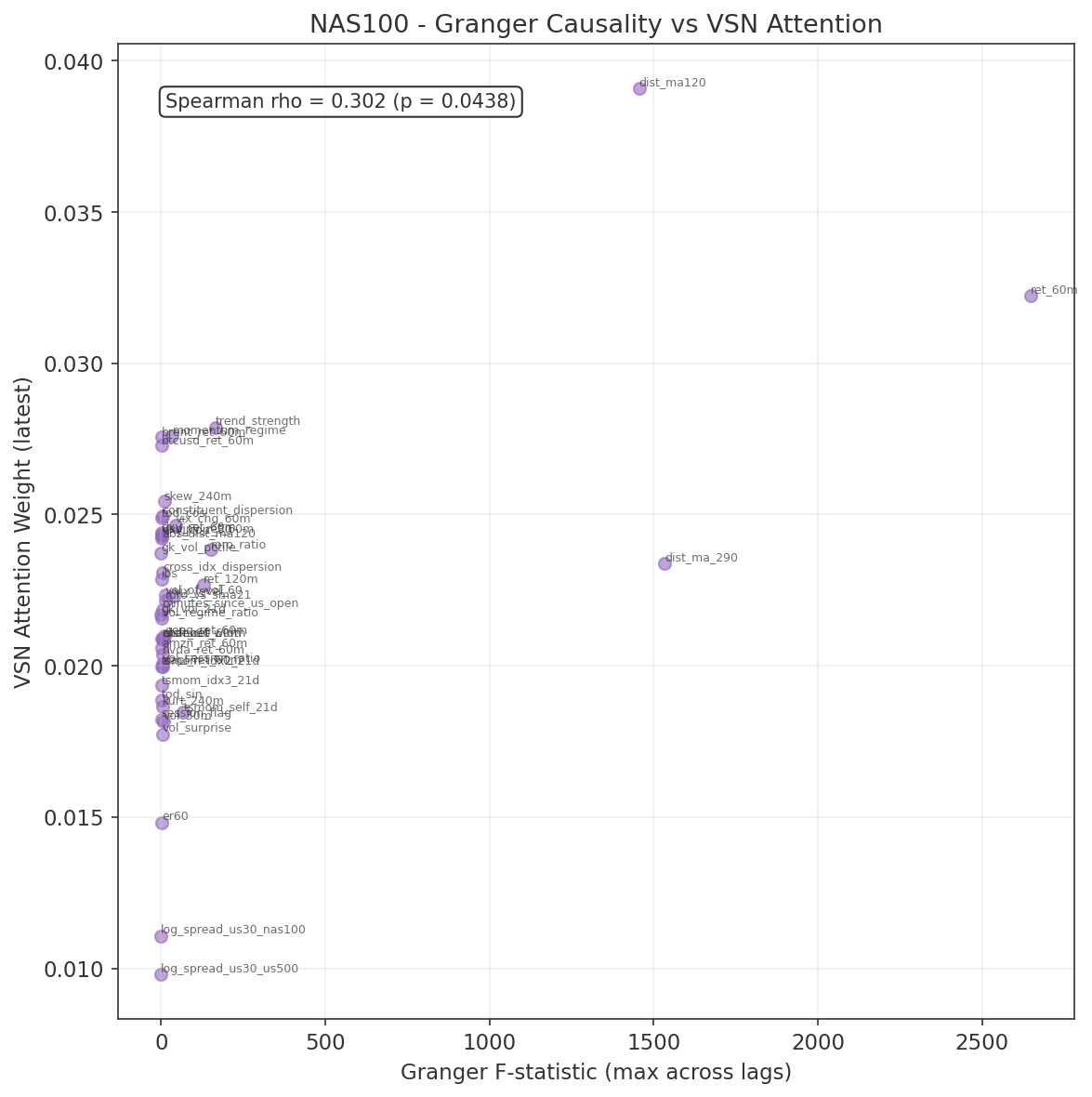

| Index | Tests | Significant (Bonferroni) | % |

|---|---|---|---|

| US30 | 225 | 120 | 53% |

| US500 | 225 | 115 | 51% |

| NAS100 | 225 | 94 | 42% |

Over half the feature–lag combinations are statistically significant for US30 and US500 after conservative multiple-testing correction. NAS100 is slightly lower, consistent with its higher idiosyncratic noise from concentrated technology exposure.

Top features by F-statistic (consistent across all three indices):

| Rank | Feature | F-stat (US30) | F-stat (US500) | F-stat (NAS100) |

|---|---|---|---|---|

| 1 | ret_60m | > 2600 | > 2600 | > 2600 |

| 2 | dist_ma_290 | > 1500 | > 1500 | > 1500 |

| 3 | dist_ma120 | > 1450 | > 1450 | > 1450 |

| 4 | trend_strength | ~165 | ~165 | ~165 |

| 5 | ret_120m | ~143 | ~138 | ~130 |

All five are own-instrument features from Group 1 (core price dynamics). The dominance of ret_60m is expected: the target is forward 60-minute returns, and the autoregressive component of returns at this horizon is well-documented. The two moving-average distance features capture trend persistence at different time scales.

Features significant in all three indices (24 of 45):

abs_dist_ma120, brent_ret_60m, channel_width, constituent_dispersion, cross_idx_dispersion, dist_ma120, dist_ma_290, kurt_240m, momentum_regime, msft_ret_60m, ret_120m, ret_60m, roro_ratio, roro_vs_sma21, skew_240m, stdev60, trend_strength, tsmom_idx3_21d, tsmom_self_21d, vol_30m, vol_of_vol_60, vol_regime_ratio, vol_session_ratio, vol_surprise.

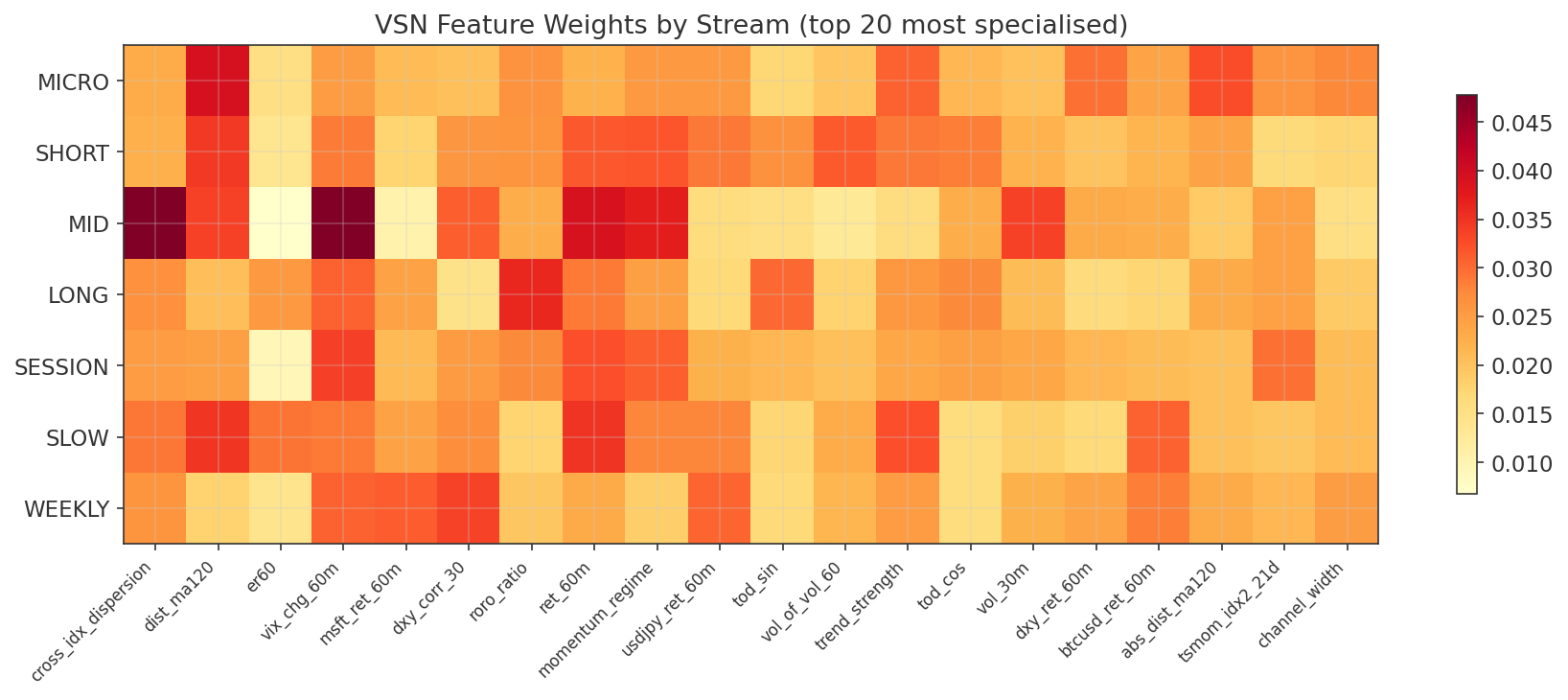

This set spans all five feature groups: core price dynamics (Group 1), volatility and higher moments (Group 2), cross-index signals from the gap studies (Group 3), cross-asset features (Group 4), and microstructure proxies (Group 5). The cross-index features (cross_idx_dispersion, roro_ratio, roro_vs_sma21, tsmom signals) all pass, confirming that the Phase 2 gap study findings survive formal causality testing.

Features not significant on any index after Bonferroni correction:

er60, tod_sin, tod_cos, ibs, gk_vol_pctile, session_flag, dxy_corr_30, and several individual constituent returns. The time-of-day features (tod_sin, tod_cos, session_flag) are deterministic functions of the clock and contain no stochastic information about returns. IBS and gk_vol_pctile are bounded indicators that operate conditionally (IBS predicts only within specific volatility regimes, as shown in Gap Study #8). The log-spread features (log_spread_us30_us500, log_spread_us30_nas100) were borderline, consistent with the slow mean-reversion documented in Gap Study #1.

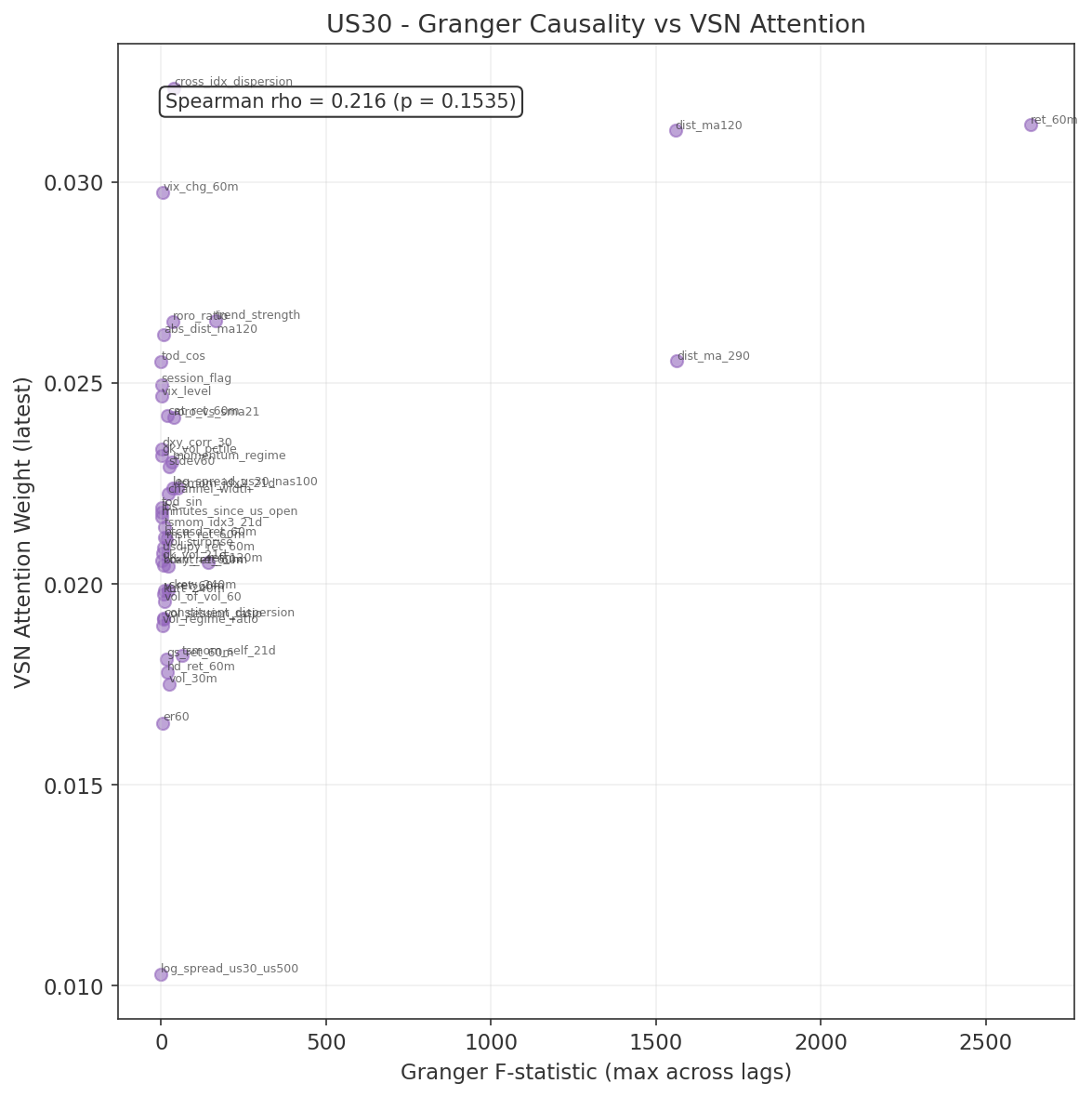

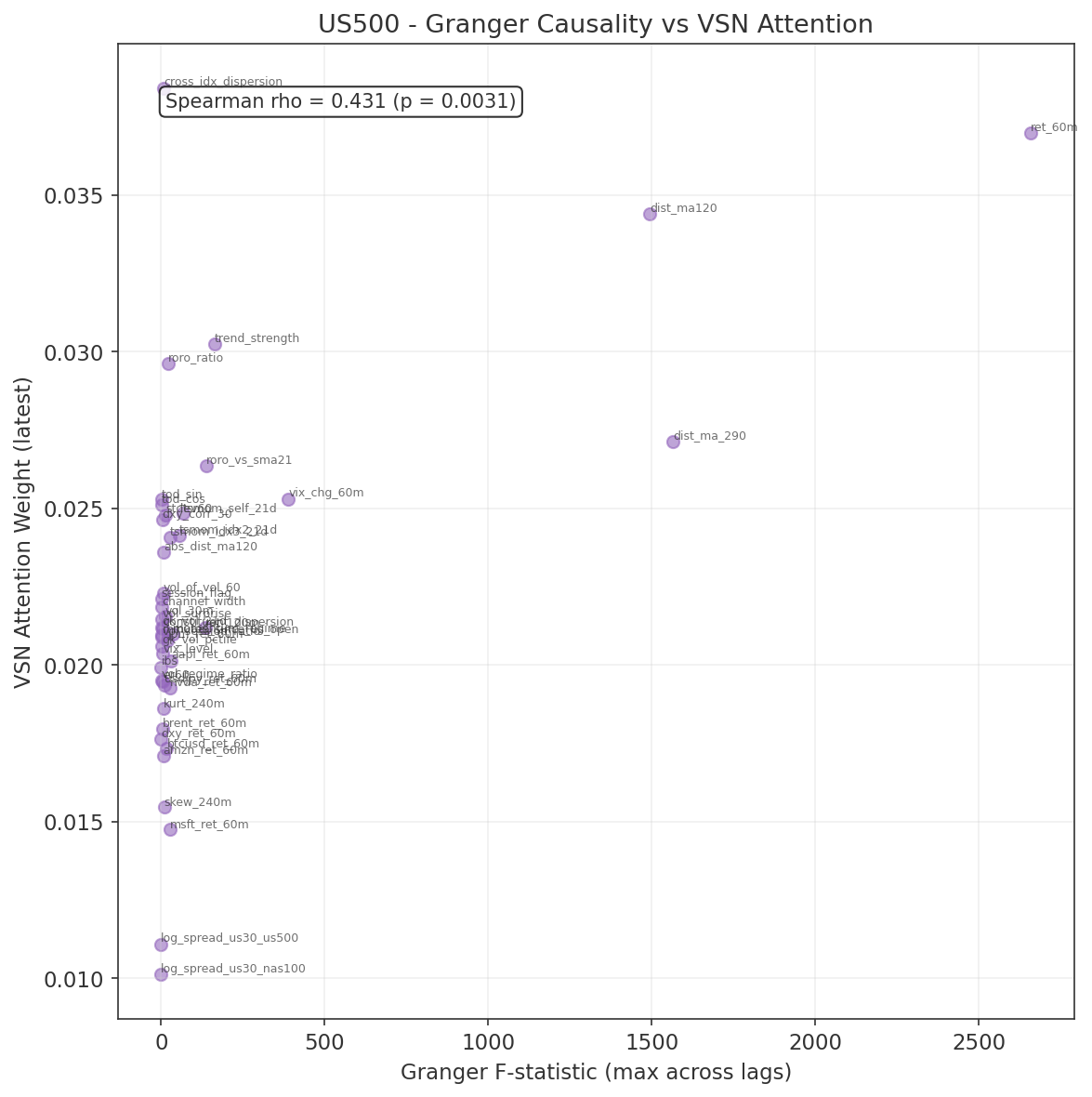

Key Findings

- Majority of features pass Granger causality. Over 50% of feature-lag combinations are significant after Bonferroni correction for US30 and US500, and 42% for NAS100. The feature set carries genuine linear predictive content for forward 60-minute returns.

- Own-instrument features dominate. The top 5 features by F-statistic are all from Group 1 (core price dynamics), with ret_60m and the moving-average distance features showing the strongest causal signal across all three indices.

- Cross-index features validated. All Phase 2 gap-study-derived features (cross_idx_dispersion, roro_ratio, roro_vs_sma21, tsmom signals) pass the Granger test, confirming that the empirical gap study findings survive formal causality testing.

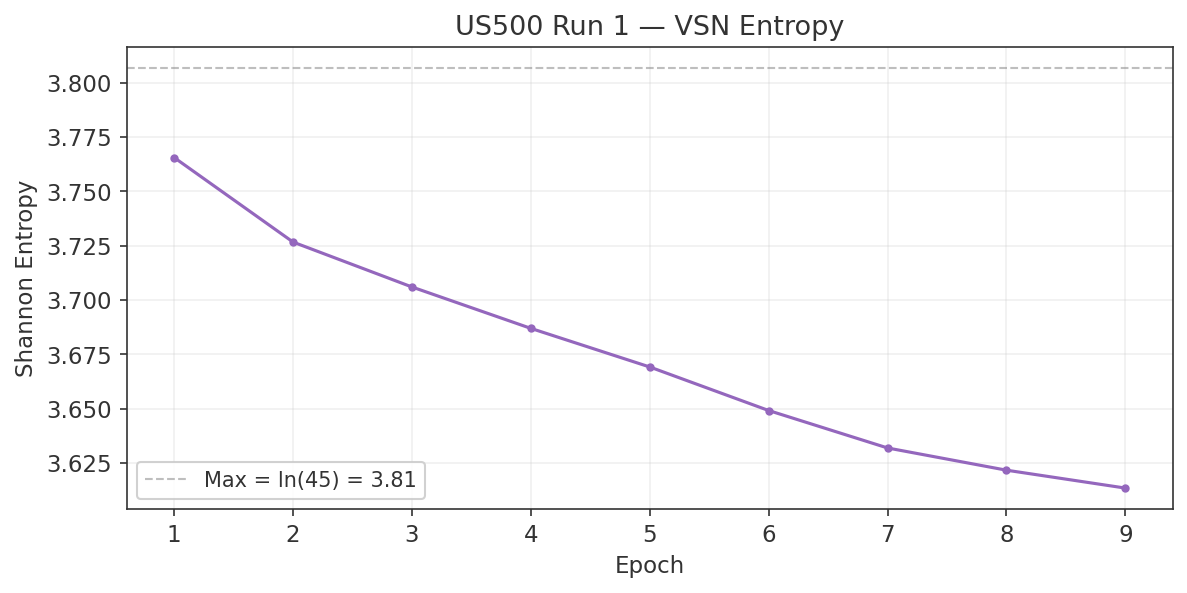

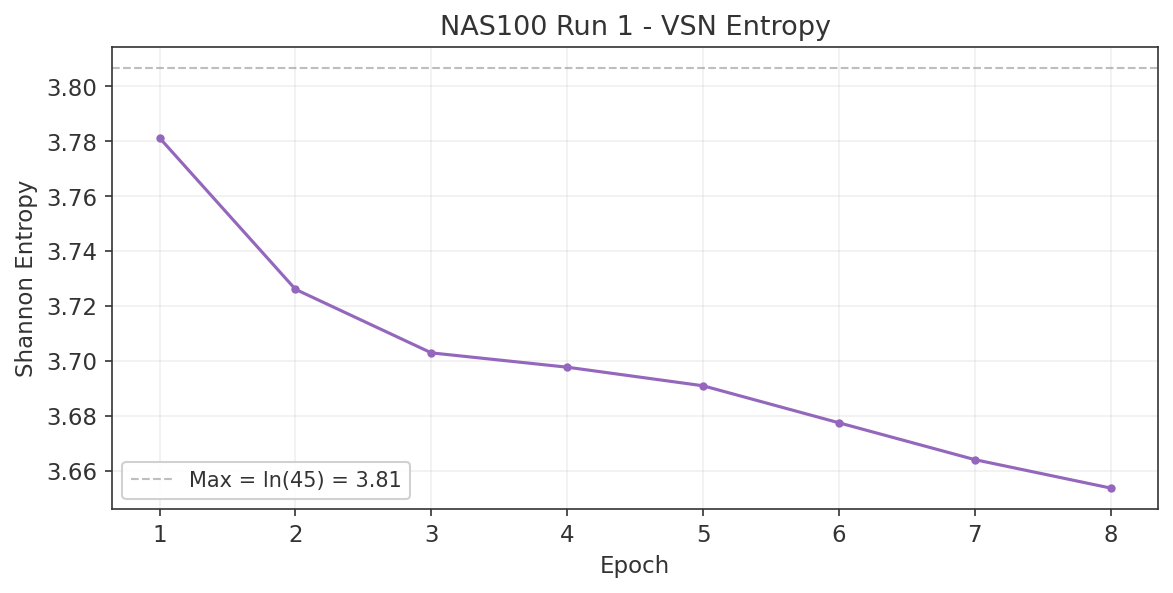

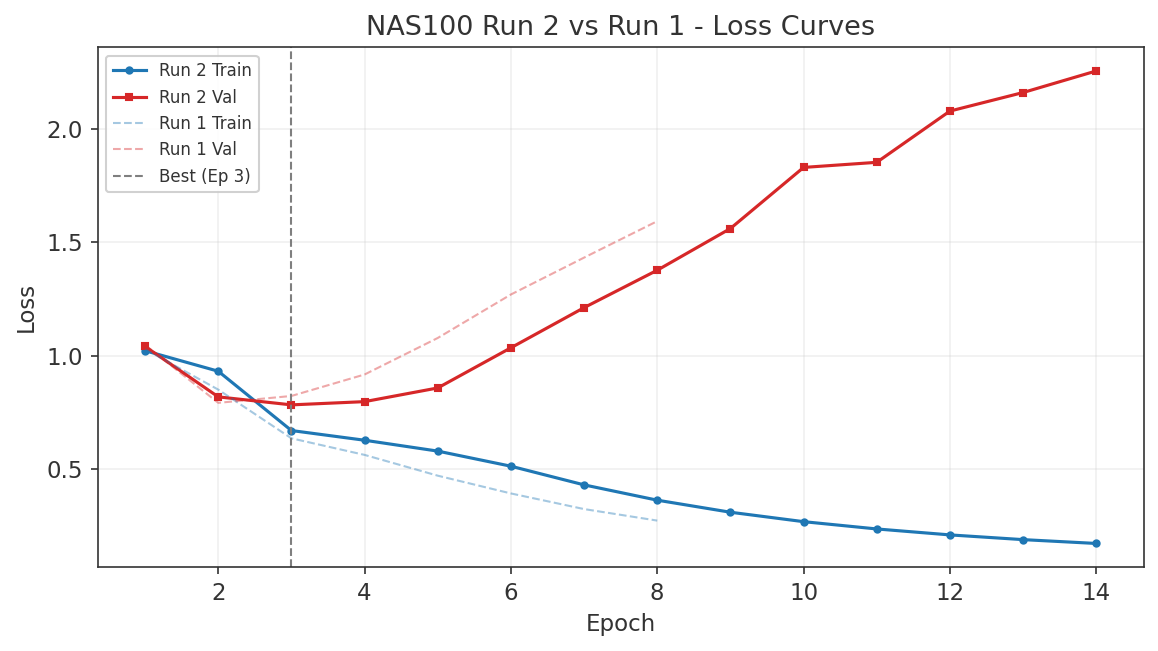

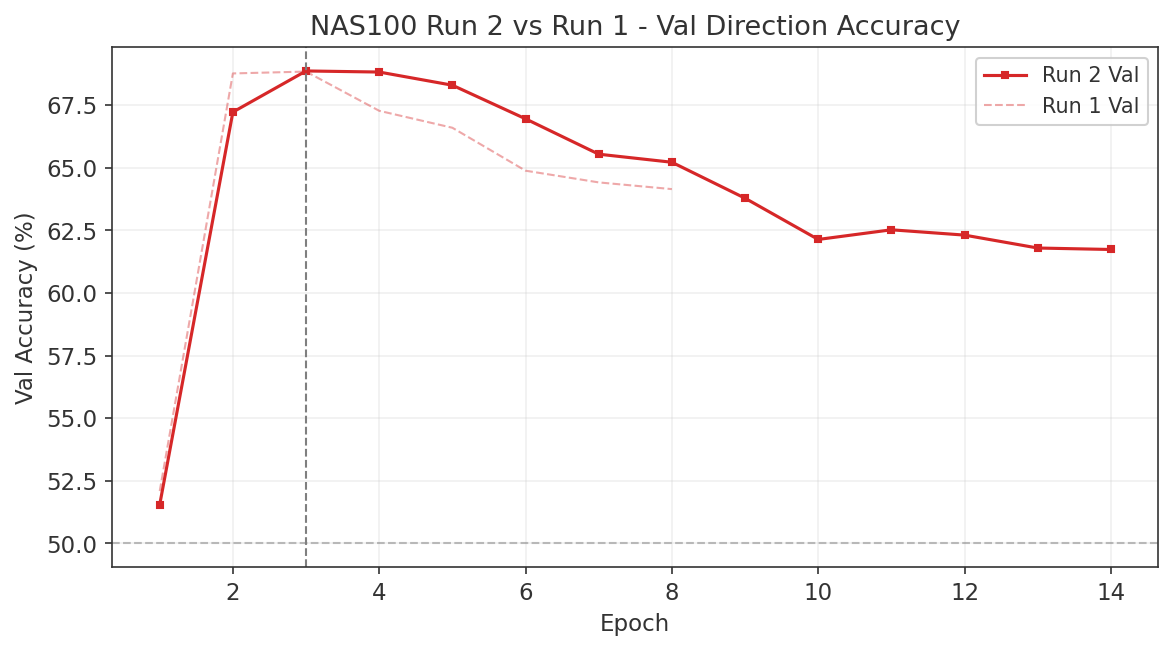

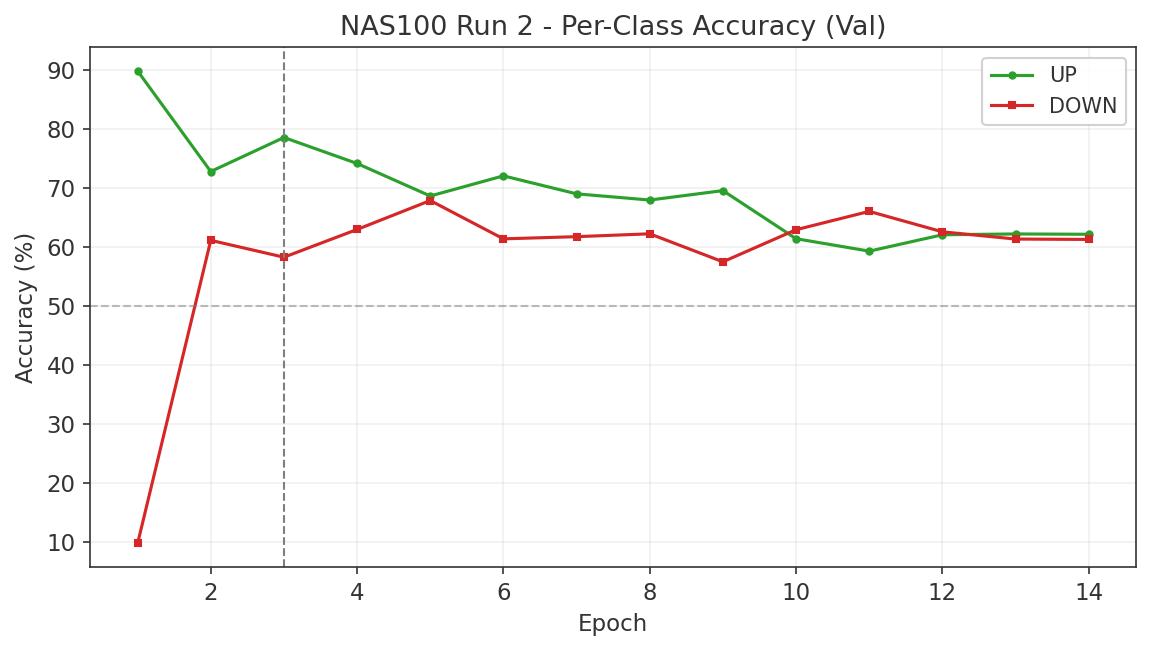

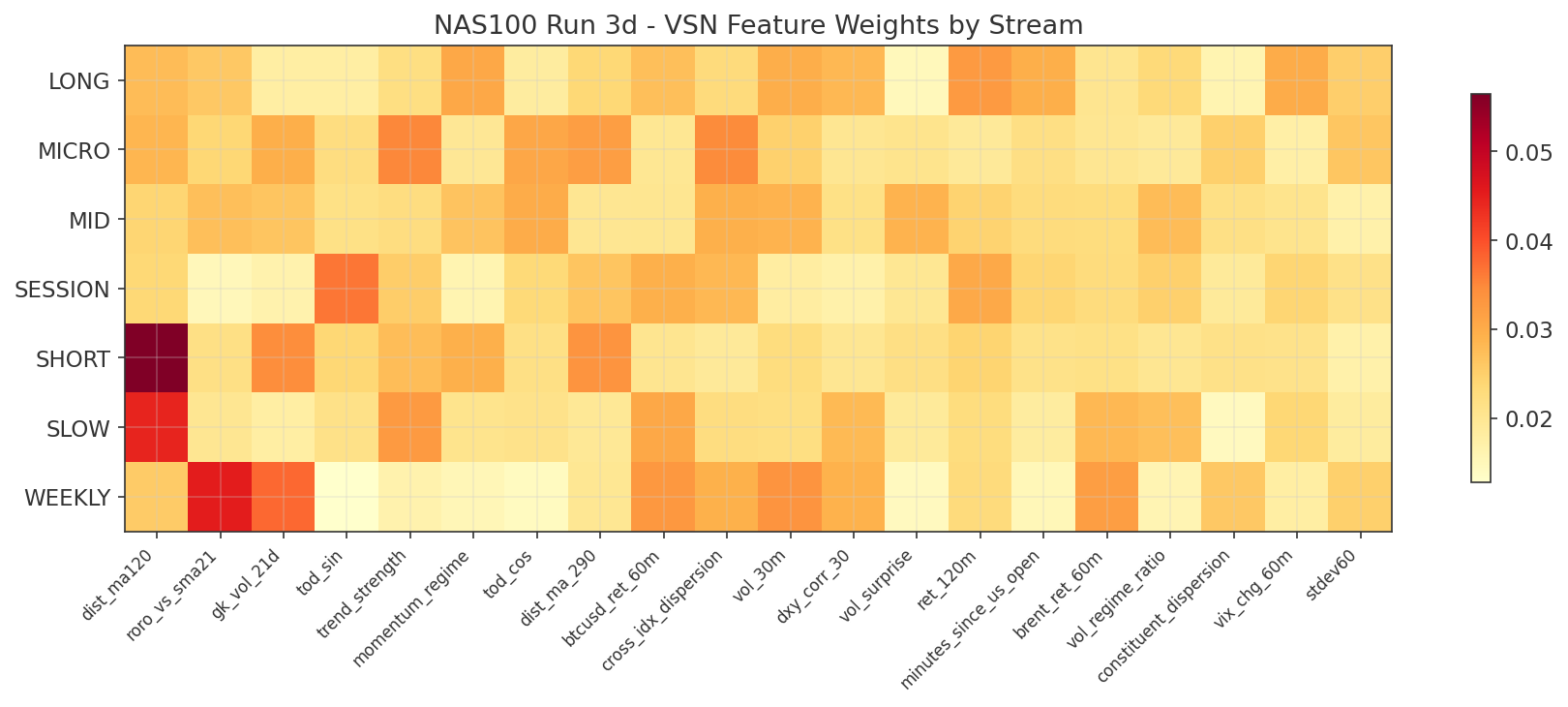

- Non-significant features retained as VSN validation. Features that fail Granger causality were deliberately retained as a validation mechanism for the Variable Selection Network. If the VSN works correctly, it should independently learn to downweight these features. The Run 1 training results (Section 7.5) confirm this: log_spread_us30_us500 (not Granger-causal) received the lowest VSN attention, while the top Granger-causal features received the highest. This correspondence provides independent validation that the VSN is working as intended.

Charts

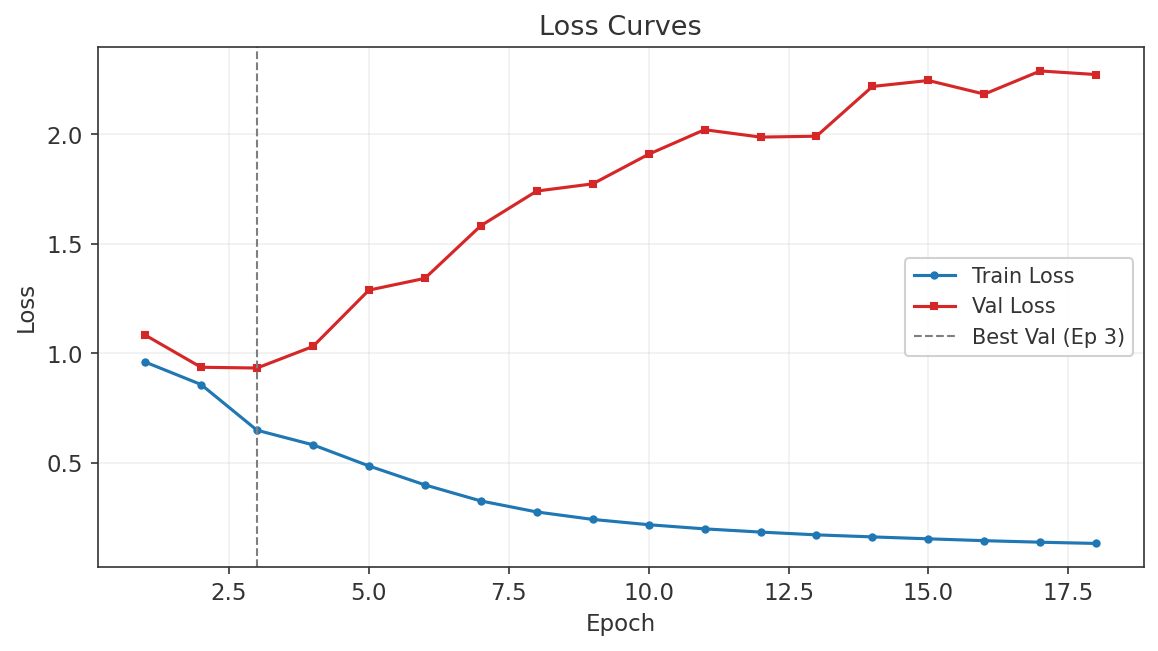

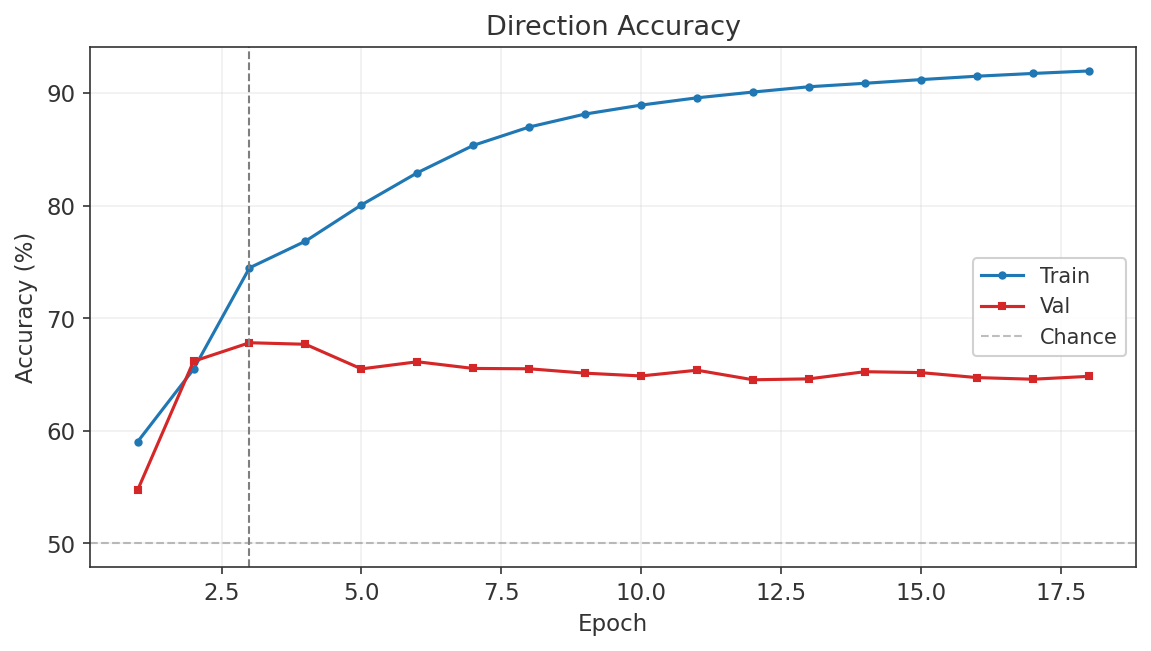

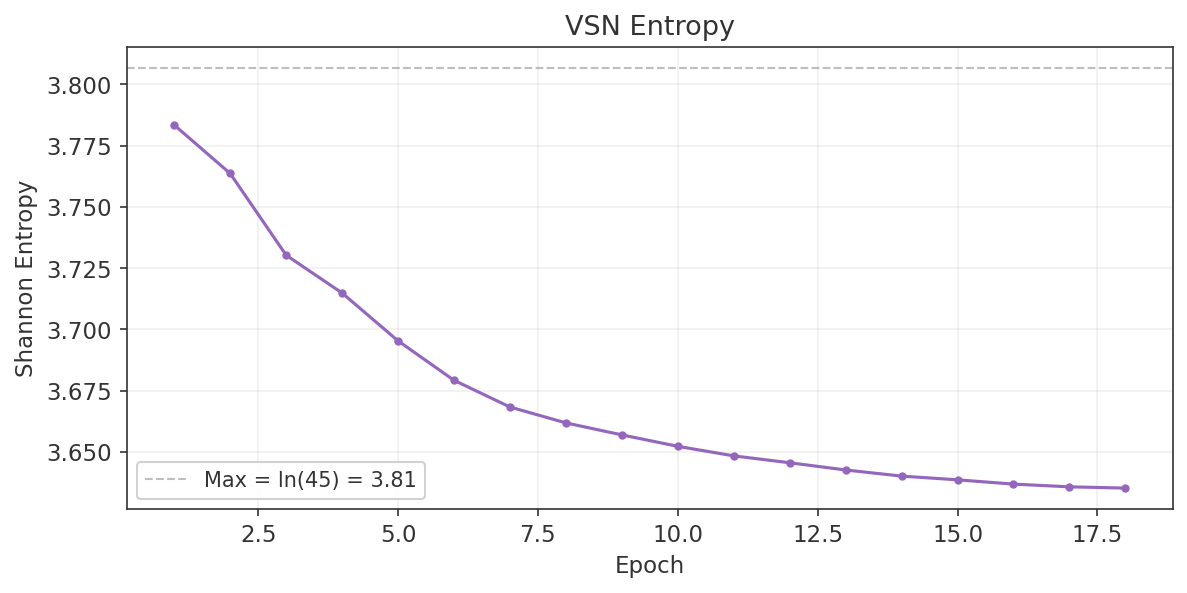

7. Phase 3: Neural Net Model Development

7.1 Data Inventory

This section documents the data available for model development. All three index models share a common training window, cross-asset feature set, and chronological train/validation/test split. The binding constraint on the common window is META, whose M1 data begins on 2021-06-30.

Common Training Window

| Parameter | Value |

|---|---|

| Window | 2021-07-01 to 2026-03-17 (~4.7 years) |

| Binding constraint | META (starts 2021-06-30) |

| Bar frequency | M1 (1-minute OHLCV) |

| Source | MT5 CFD data + Databento XNAS backfill (TLT, META) |

Target Indexes

Each model predicts the forward 60-minute return using a double-barrier label (up/down/hold).

| Instrument | Full Span | M1 Rows |

|---|---|---|

| US30 (DJIA) | 2020-08 to 2026-03 | 1,982,699 |

| US500 (S&P 500) | 2018-05 to 2026-03 | 2,743,872 |

| NAS100 (Nasdaq 100) | 2018-05 to 2026-03 | 2,792,656 |

Cross-Asset Instruments

The following instruments provide cross-asset features for all three models.

| Instrument | Full Span | M1 Rows | Feature Use |

|---|---|---|---|

| VIX | 2018-05 to 2026-03 | 760,033 | Fear gauge, vol regime |

| DXY (Dollar Index) | 2018-12 to 2026-03 | 2,194,608 | Dollar strength |

| USDJPY | 2008-09 to 2026-03 | 2,133,765 | Carry trade / risk proxy |

| BTCUSD | 2017-06 to 2026-03 | 2,325,662 | Risk appetite proxy |

| XAUUSD (Gold) | 2018-05 to 2026-03 | 2,802,955 | Safe haven flow |

| BRENT (Crude Oil) | 2016-01 to 2026-03 | 1,839,566 | Energy / inflation proxy |

| TLT (20Y+ Treasury Bond ETF) | 2018-05 to 2026-02 | 971,662 | Bond proxy, equity/bond rotation |

Constituent Stocks

The top 5 constituents per index provide 60-minute returns as features and intra-index dispersion measures. Several stocks appear in multiple index models.

| Index | Top 5 Constituents |

|---|---|

| US30 | GS, MSFT, HD, CAT, V |

| NAS100 | AAPL, MSFT, NVDA, AMZN, GOOG |

| US500 | AAPL, MSFT, NVDA, AMZN, META (binding constraint) |

AAPL, MSFT, NVDA, and AMZN appear in both the NAS100 and US500 constituent sets. MSFT also appears in the US30 set, making it the only stock present across all three models.

Train / Validation Split

All splits are strictly chronological with no overlap. No data from the validation set is used during training or hyperparameter selection.

| Split | Period | Duration | Share |

|---|---|---|---|

| Train | 2021-07-01 to 2025-06-30 | 4.0 years | 83% |

| Validation | 2025-07-01 to 2026-03-17 | ~8.5 months | 17% |

All splits are strictly chronological. The validation set includes the 2025 tariff volatility regime. The real out-of-sample test is live execution on MT5.

Data Quality Notes

- All files are clean M1 bars, verified via interval analysis (no duplicate timestamps, no gaps exceeding expected market closures).

- Missing minutes in lower-volume stocks reflect thin liquidity during off-peak hours, not data errors. These gaps are expected and handled during feature construction.

- Stock constituents only trade 13:30 to 20:00 UTC (US cash session). Outside these hours, constituent features are forward-filled from the last available bar.

7.2 Feature Specification

Each model receives approximately 45 features per M1 bar, organised into five groups. Every feature is justified either by Phase 1 literature or by Phase 2 empirical results. The prediction target is the forward 60-minute return, encoded via double-barrier labelling (up / down / hold).

Group 1: Own-Instrument Core (18 features)

These features are proven predictors from the XAUUSD base model, adapted for equity indices. They capture returns, volatility structure, trend quality, distribution shape, and time-of-day cyclicality.

| Feature | Formula / Definition | Rationale |

|---|---|---|

| ret_60m | $\ln(p_t / p_{t-60})$ | Recent return momentum |

| ret_120m | $\ln(p_t / p_{t-120})$ | Medium-horizon return |

| dist_ma120 | $(p_t - \text{MA}_{120}) / \text{MA}_{120}$ | Signed distance from 2h MA |

| dist_ma290 | $(p_t - \text{MA}_{290}) / \text{MA}_{290}$ | Signed distance from session MA |

| stdev60 | $\sigma(\text{ret}_{1m}, w{=}60)$ | Realised volatility (1h) |

| vol_30m | $\sigma(\text{ret}_{1m}, w{=}30)$ | Short-window volatility |

| vol_session_ratio | $\sigma_{30m} / \sigma_{\text{session}}$ | Intraday vol regime |

| vol_of_vol_60 | $\sigma(\sigma_{30m}, w{=}60)$ | Volatility clustering intensity |

| vol_regime_ratio | $\sigma_{60m} / \sigma_{240m}$ | Short vs long vol ratio |

| vol_surprise | $(\sigma_{30m} - \mu_{\sigma,240}) / \sigma_{\sigma,240}$ | Vol Z-score (surprise detection) |

| channel_width | $Q_{0.95} - Q_{0.05}$ (rolling 120 bars) | Quantile regression channel |

| skew_240m | Rolling skewness, $w{=}240$ | Return distribution asymmetry |

| kurt_240m | Rolling kurtosis, $w{=}240$ | Tail heaviness |

| er60 | $|\Delta p_{60}| / \sum_{i=1}^{60}|\Delta p_i|$ | Kaufman efficiency ratio $[0,1]$ |

| momentum_regime | Binary: MA crossover aligned with return sign | Trend alignment indicator |

| trend_strength | $\text{sign}(\text{ret}_{60m}) \times \text{ER}_{60} \times |\text{ret}_{60m}| / \sigma_{60m}$ | Signed ER x normalised magnitude |

| tod_sin | $\sin(2\pi \cdot \text{minute} / 1440)$ | Cyclical time-of-day encoding |

| tod_cos | $\cos(2\pi \cdot \text{minute} / 1440)$ | Cyclical time-of-day encoding |

Group 2: Cross-Index Features (11 features)

Every feature in this group traces directly to a specific Phase 2 gap study. These encode cross-index momentum, risk regime, volatility state, and structural spread dynamics.

| Feature | Formula / Definition | Source |

|---|---|---|

| tsmom_self_21d | $\text{sgn}\bigl(\sum_{i=1}^{21} r_i\bigr)$, trailing monthly return | Study #4 (TSMOM) |

| tsmom_idx2_21d | Same, for second index | Study #4 |

| tsmom_idx3_21d | Same, for third index | Study #4 |

| roro_ratio | $\ln(\text{NAS100} / \text{US30})$ | Study #2 (RORO) |

| roro_vs_sma21 | Binary: RORO ratio above/below 21d SMA | Study #2 |

| gk_vol_21d | Garman-Klass volatility, 21-day rolling | Study #5 (Vol regime) |

| gk_vol_pctile | Expanding percentile rank of GK vol | Study #5 |

| ibs | $(\text{close} - \text{low}) / (\text{high} - \text{low})$, daily | Study #8 (conditional on vol regime) |

| cross_idx_dispersion | $\sigma(\text{ret}_{60m}^{(i)})$ across all 3 indices | Study #4 (rotation signal) |

| log_spread_us30_us500 | $\ln(\text{US30}) - \ln(\text{US500})$ | Study #1 (novel) |

| log_spread_us30_nas100 | $\ln(\text{US30}) - \ln(\text{NAS100})$ | Study #1 (novel) |

Group 3: Cross-Asset Macro (7 features)

Macro features capture risk appetite, dollar strength, carry dynamics, and energy/inflation pressure. Three candidates were dropped due to insufficient history in the common training window.

| Feature | Formula / Definition | Rationale |

|---|---|---|

| vix_level | VIX spot value | Fear gauge level |

| vix_chg_60m | $\Delta\text{VIX}_{60m}$ | VIX momentum (shock detection) |

| dxy_ret_60m | $\ln(\text{DXY}_t / \text{DXY}_{t-60})$ | Dollar strength |

| dxy_corr_30 | Rolling 30-bar correlation(index, DXY) | Dollar correlation regime |

| usdjpy_ret_60m | $\ln(\text{USDJPY}_t / \text{USDJPY}_{t-60})$ | Yen carry proxy |

| btcusd_ret_60m | $\ln(\text{BTCUSD}_t / \text{BTCUSD}_{t-60})$ | Crypto risk appetite |

| brent_ret_60m | $\ln(\text{BRENT}_t / \text{BRENT}_{t-60})$ | Energy / inflation proxy |

Dropped instruments: TLT (only 3 months of M1 data in common window), LQD (3 months), USOIL (4 months; replaced by BRENT which has full coverage from 2016).

Group 4: Constituent Returns (6 features per model)

The top 5 constituents by index weight provide 60-minute returns as individual features. A sixth feature, constituent_dispersion, measures intra-index disagreement. The constituent set differs per model.

| Model | Top-5 Constituents | Dispersion Feature |

|---|---|---|

| US30 | GS, MSFT, HD, CAT, V | $\sigma(\text{ret}_{60m}^{(k)})$, $k \in \{1..5\}$ |

| NAS100 | AAPL, MSFT, NVDA, AMZN, GOOG | $\sigma(\text{ret}_{60m}^{(k)})$, $k \in \{1..5\}$ |

| US500 | AAPL, MSFT, NVDA, AMZN, JPM | $\sigma(\text{ret}_{60m}^{(k)})$, $k \in \{1..5\}$ |

Group 5: Intraday Seasonality (2 features)

| Feature | Definition | Rationale |

|---|---|---|

| session_flag | Asia = 0, London = 1, US = 2 | Session regime (liquidity + volatility differ by session) |

| minutes_since_us_open | Minutes elapsed since 13:30 UTC | Distance from highest-activity period |

Feature Count Summary

| Group | Features |

|---|---|

| Own-Instrument Core | 18 |

| Cross-Index | 11 |

| Cross-Asset Macro | 7 |

| Constituent Returns | 6 |

| Intraday Seasonality | 2 |

| Total | 44 |

Normalisation

| Method | Applied To | Window |

|---|---|---|

| rolling_z | Continuous non-stationary features (returns, distances, vol levels) | $w = 1440$ (24 hours) |

| zscore (expanding) | Stable distributions (GK vol percentile, kurtosis) | Expanding from start of training set |

| passthrough | Bounded or naturally scaled features (ER, IBS, session_flag, tod_sin/cos) | None |

Lookahead Prevention

All features are strictly causal. Daily IBS uses the previous completed day only. TSMOM signals use completed daily returns only. No feature reads future prices. Rolling windows use only data available at time $t$, with no forward-looking statistics.

Feature Provenance

The following table summarises the link between cross-index / cross-asset features and the Phase 2 gap studies that justified their inclusion.

| Feature(s) | Phase 2 Study | Key Finding |

|---|---|---|

| tsmom_self_21d, tsmom_idx2_21d, tsmom_idx3_21d, cross_idx_dispersion | Study #4 (Cross-index momentum) | TSMOM rotation: Sharpe 1.27 |

| roro_ratio, roro_vs_sma21 | Study #2 (RORO ratio) | Valid vol regime indicator; 20-28% higher vol in risk-off |

| gk_vol_21d, gk_vol_pctile | Study #5 (Vol regime selection) | MR works in high-vol NAS100 (Sharpe 0.99) |

| ibs | Study #8 (IBS/RSI replication) | Conditional on vol regime only; fails in aggregate |

| log_spread_us30_us500, log_spread_us30_nas100 | Study #1 (PW vs CW divergence) | Novel; extreme Z-score reversion observed |

| session_flag, minutes_since_us_open | Study #9 (Intraday seasonality) | Vol and momentum differ by session |

7.3 Normaliser Selection

Why Normalisation Matters

Raw features can drift across regimes — VIX level, channel width, and kurtosis all exhibit non-stationary behaviour over months-long windows. Without normalisation, drifting features dominate the neural net's gradient updates, causing training instability or the model learning spurious regime-dependent patterns. But normalisation can also destroy information, particularly in features where the raw scale is the signal. Absolute volatility levels, dispersion magnitudes, and vol ratios all carry meaning in their raw units that z-scoring can erase.

Methodology

Each of the 36 continuous features was tested under three normalisation strategies on the validation set (2025-07 to 2026-03):

| Strategy | Description |

|---|---|

| raw | No normalisation (baseline) |

| rolling_z | Causal 30-day rolling $3\sigma$ clip + z-score |

| rolling_winsor_z | Causal 30-day rolling 1st–99th percentile clip + z-score |

Static normalisation (global mean/std computed over the full dataset) was excluded because it leaks regime information and fails on drifting features — a model trained during a low-VIX period would see systematically biased inputs during a high-VIX regime.

Decision rule:

- Compute gain = AUC(rolling_z) $-$ AUC(raw) for each feature on each index.

- Average across all 3 indices.

- If avg gain $< -0.001$ AND rolling_z hurts on at least 2/3 indices → passthrough.

- If already bounded/binary → passthrough.

- Otherwise → rolling_z (safe default for drift protection).

The rolling_winsor_z strategy (percentile clip instead of $\sigma$-clip) was never chosen. Gains over rolling_z were marginal and inconsistent across the three indices.

Final Split: 17 Passthrough / 28 Rolling Z-Score

The per-feature decision rule produces a clear split: 17 features are passed through without normalisation, and 28 features use rolling_z.

Passthrough Features (17)

These fall into two categories:

Bounded/binary (9):

- er60 $[0,1]$

- momentum_regime $\{0,1\}$

- tod_sin $[-1,1]$, tod_cos $[-1,1]$

- roro_vs_sma21 $\{0,1\}$

- gk_vol_pctile $[0,1]$

- ibs $[0,1]$

- dxy_corr_30 $[-1,1]$

- session_flag $\{0,1,2\}$

- minutes_since_us_open $[0,1]$

Scale-is-signal (8):

- ret_60m — naturally mean-zero and stationary

- stdev60 and vol_30m — realised volatility is stationary; raw level encodes regime

- vol_session_ratio and vol_surprise — self-normalising ratios

- gk_vol_21d — daily Garman-Klass vol, naturally bounded (avg gain $-0.0022$)

- cross_idx_dispersion — strongest negative (avg gain $-0.0037$)

- vix_level — highest drift (2.72) but rolling_z kills regime signal (avg gain $-0.0037$)

Rolling Z-Score Features (28)

All other continuous features use rolling_z. Key beneficiaries:

| Feature | Avg $\Delta$AUC | Notes |

|---|---|---|

| kurt_240m | +0.0020 | High drift 1.67–1.75 |

| skew_240m | +0.0020 | — |

| channel_width | +0.0013 | High drift 4.5–4.8 |

| tsmom_idx3_21d | +0.0013 | Consistently positive all 3 indices |

| log_spread_us30_us500 | +0.0013 | Drifts by construction |

| abs_dist_ma120 | +0.0009 | Consistently positive all 3 indices |

| dxy_ret_60m | +0.0006 | Consistently positive all 3 indices |

| All constituent stock returns: rolling_z protects against earnings/split outliers | ||

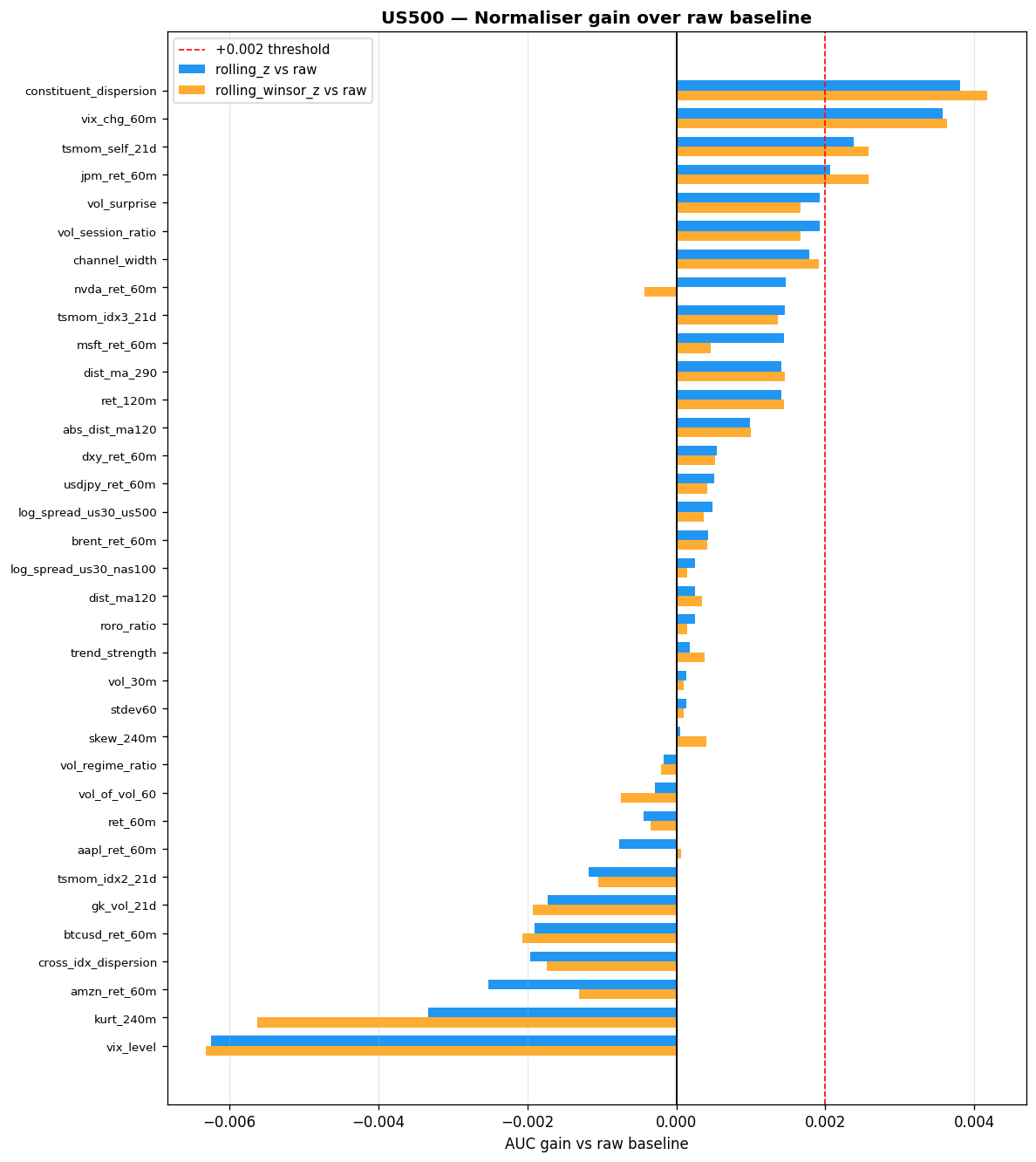

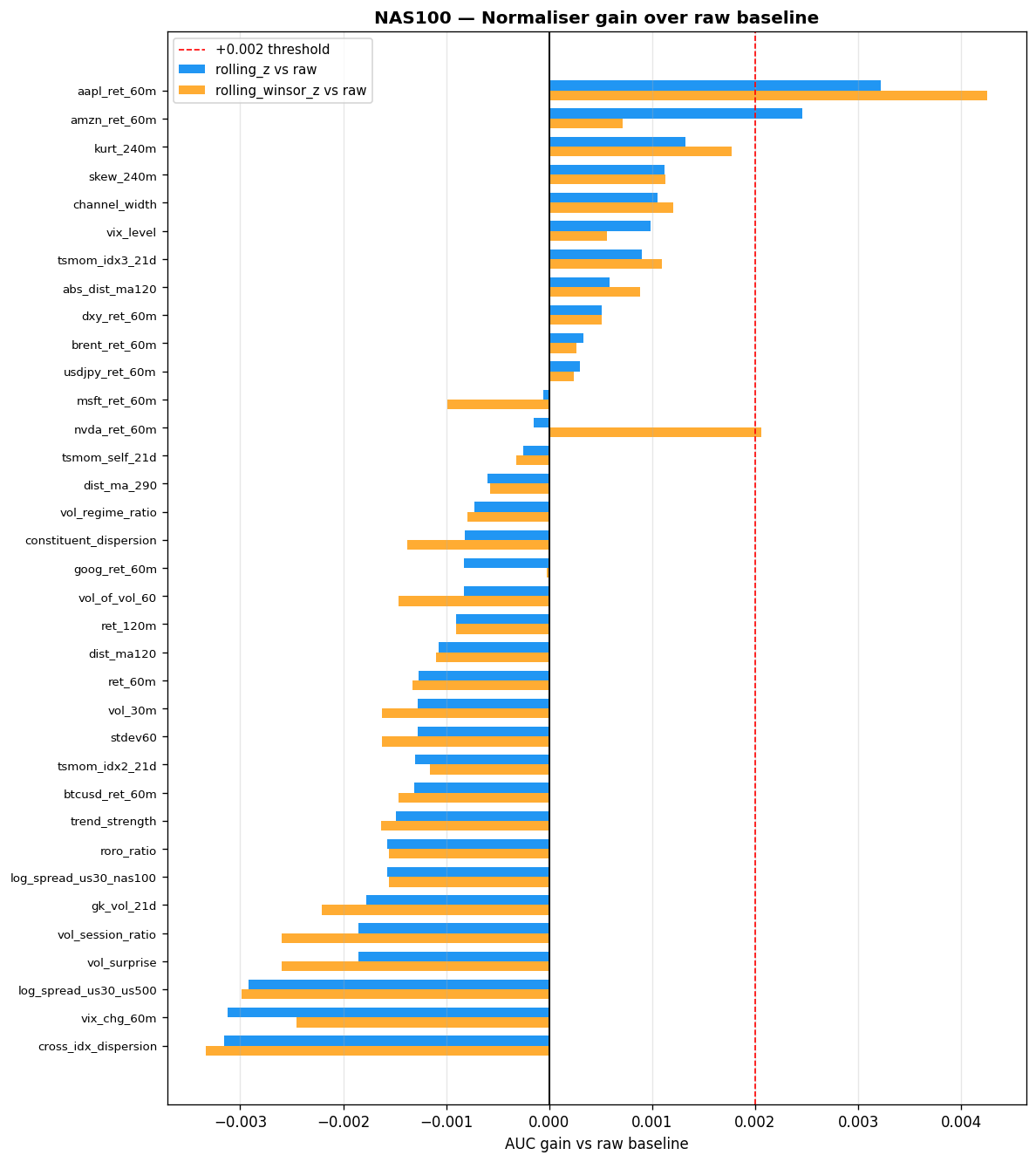

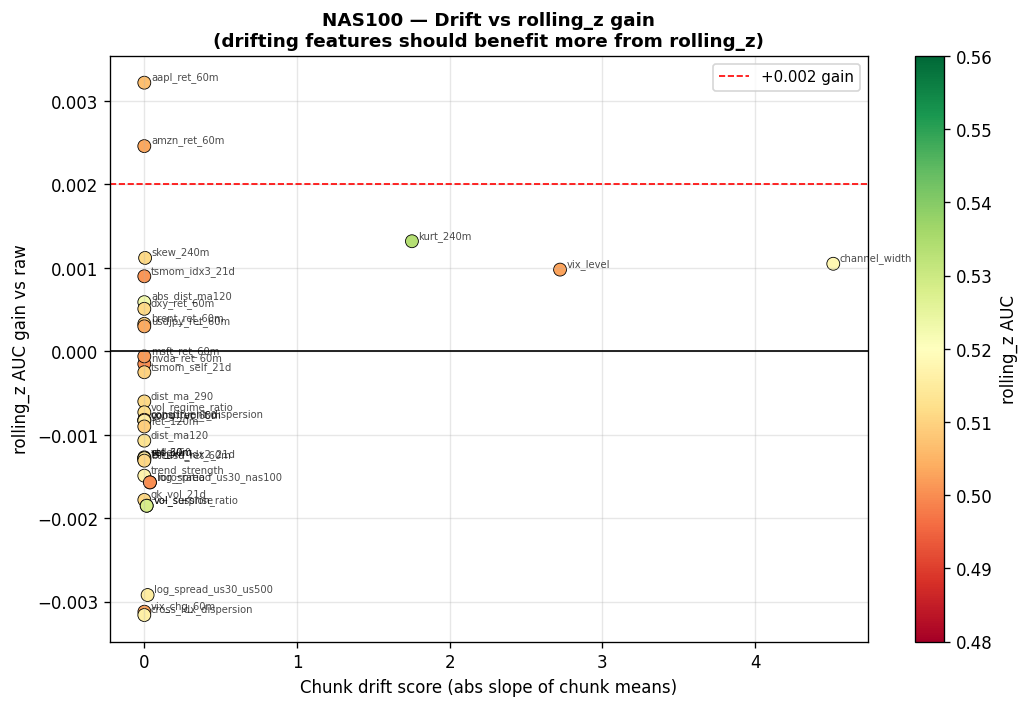

Cross-Instrument Results

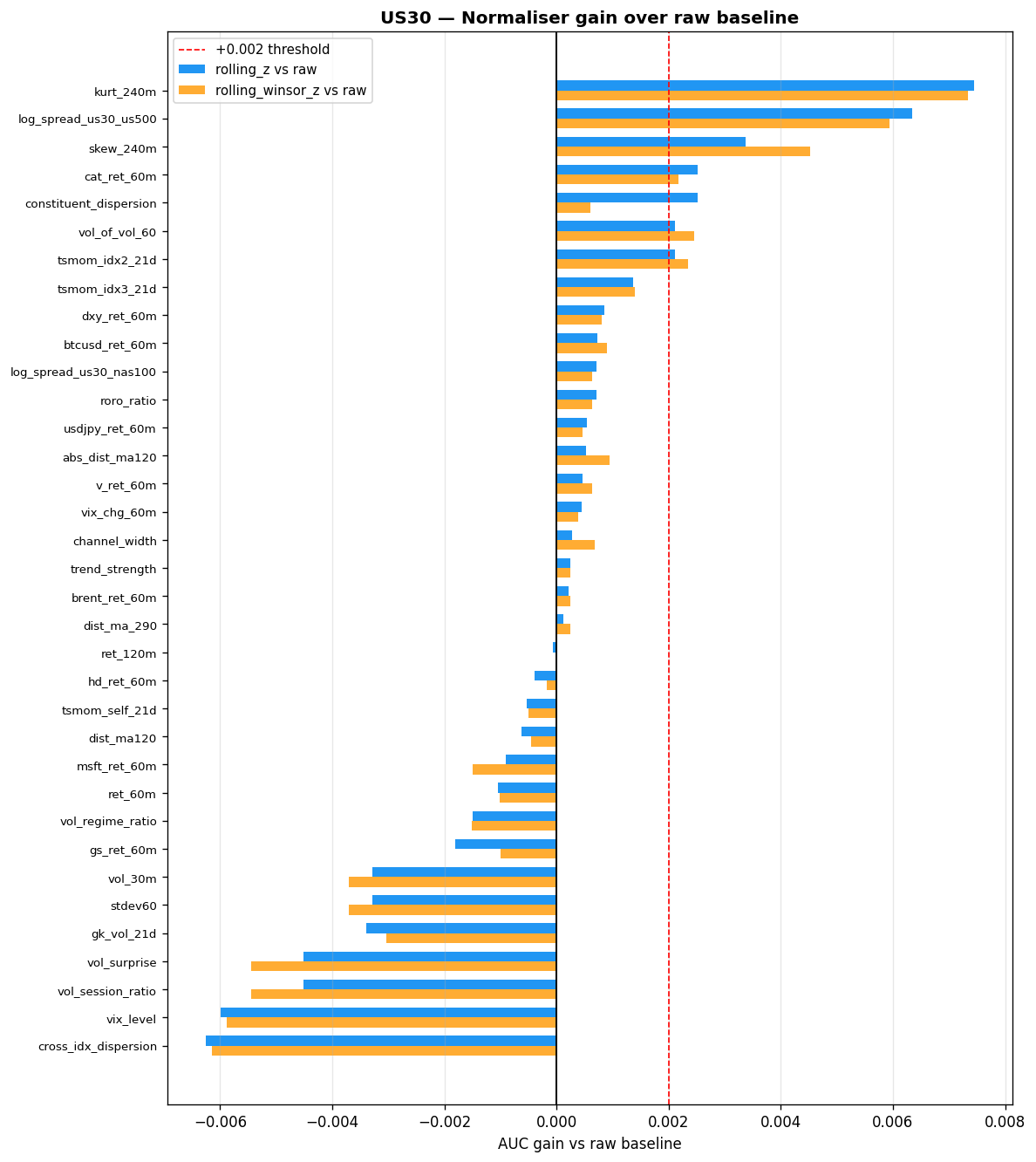

The following tables summarise AUC gains from rolling_z versus raw on each index. A positive value means normalisation helped; a negative value means the raw scale carried predictive information that z-scoring destroyed.

Features where rolling_z helps most (AUC gain $> 0.002$ on at least one index):

| Feature | US30 $\Delta$AUC | NAS100 $\Delta$AUC | US500 $\Delta$AUC | Drift Score |

|---|---|---|---|---|

| kurt_240m | +0.0074 | +0.0018 | — | 1.67 / 1.75 |

| log_spread_us30_us500 | +0.0063 | — | — | — |

| skew_240m | +0.0045 | — | — | — |

| aapl_ret_60m | — | +0.0043 | — | — |

| constituent_dispersion | — | — | +0.0042 | — |

| vix_chg_60m | — | — | +0.0036 | — |

| tsmom_self_21d | — | — | +0.0026 | — |

| amzn_ret_60m | — | +0.0025 | — | — |

Features where rolling_z hurts most (raw scale carries predictive information):

| Feature | US30 $\Delta$AUC | NAS100 $\Delta$AUC | US500 $\Delta$AUC |

|---|---|---|---|

| cross_idx_dispersion | -0.0061 | -0.0032 | — |

| vix_level | -0.0059 | — | -0.0063 |

| vol_session_ratio | -0.0045 | — | — |

| vol_surprise | -0.0045 | — | — |

| vol_30m | -0.0033 | — | — |

| stdev60 | -0.0033 | — | — |

Final Decision

| Normaliser | Count |

|---|---|

| passthrough | 17 (9 bounded + 8 scale-dependent) |

| rolling_z | 28 |

| Total | 45 |

VIX note: VIX has the highest drift (2.72) but is passthrough. If training instability is observed, $\log(\text{VIX})$ is a fallback that is more stationary while preserving regime information.

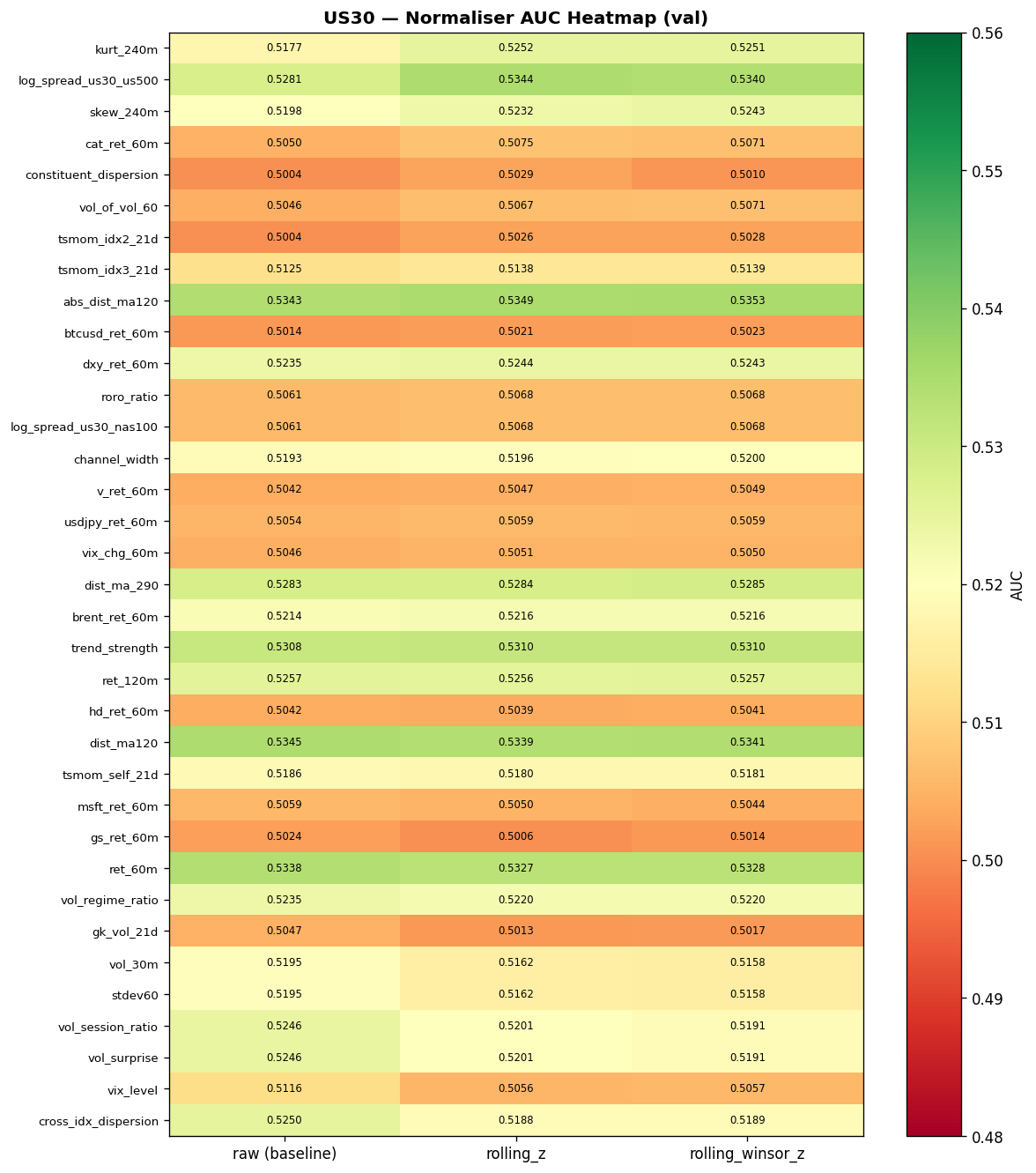

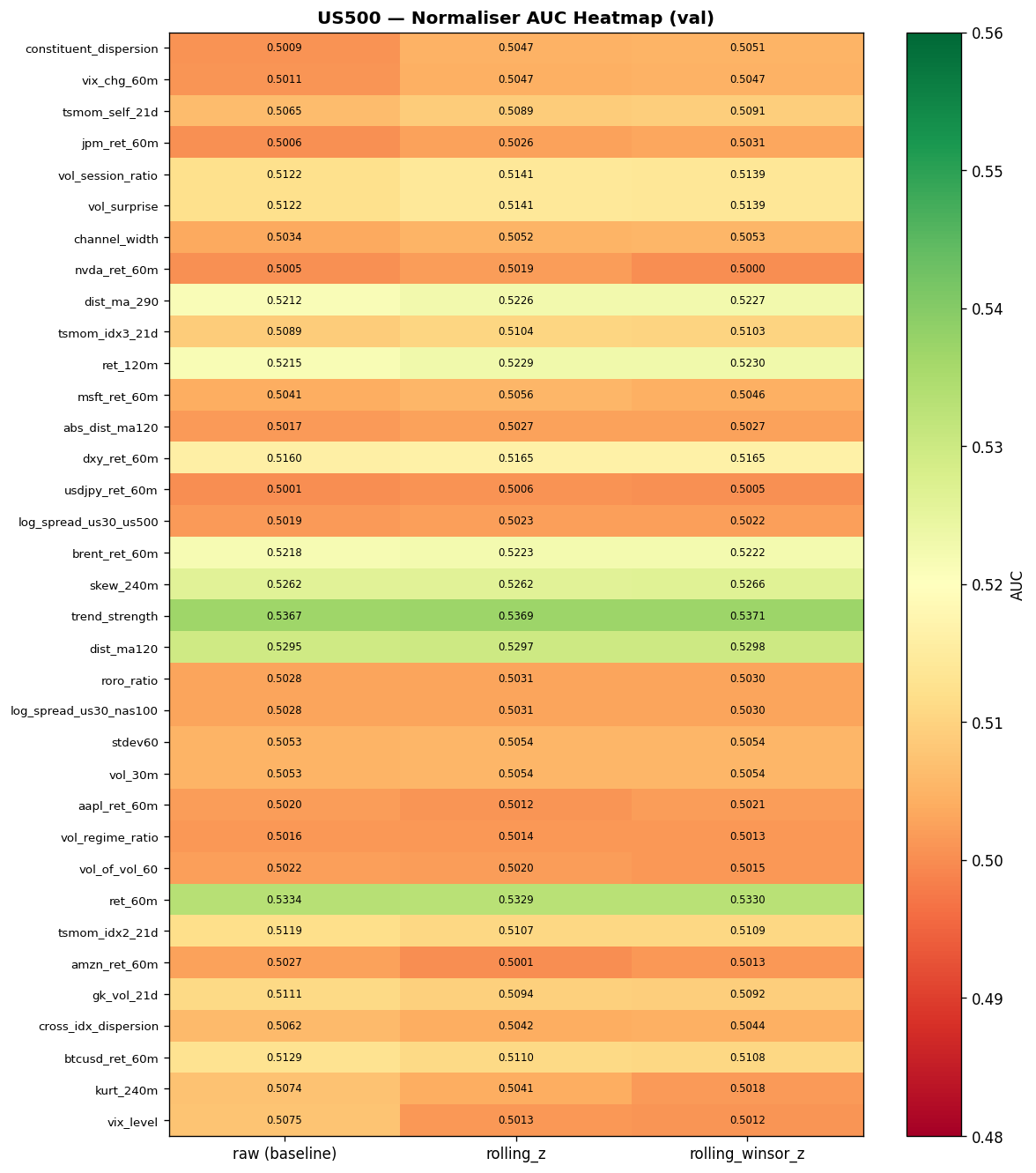

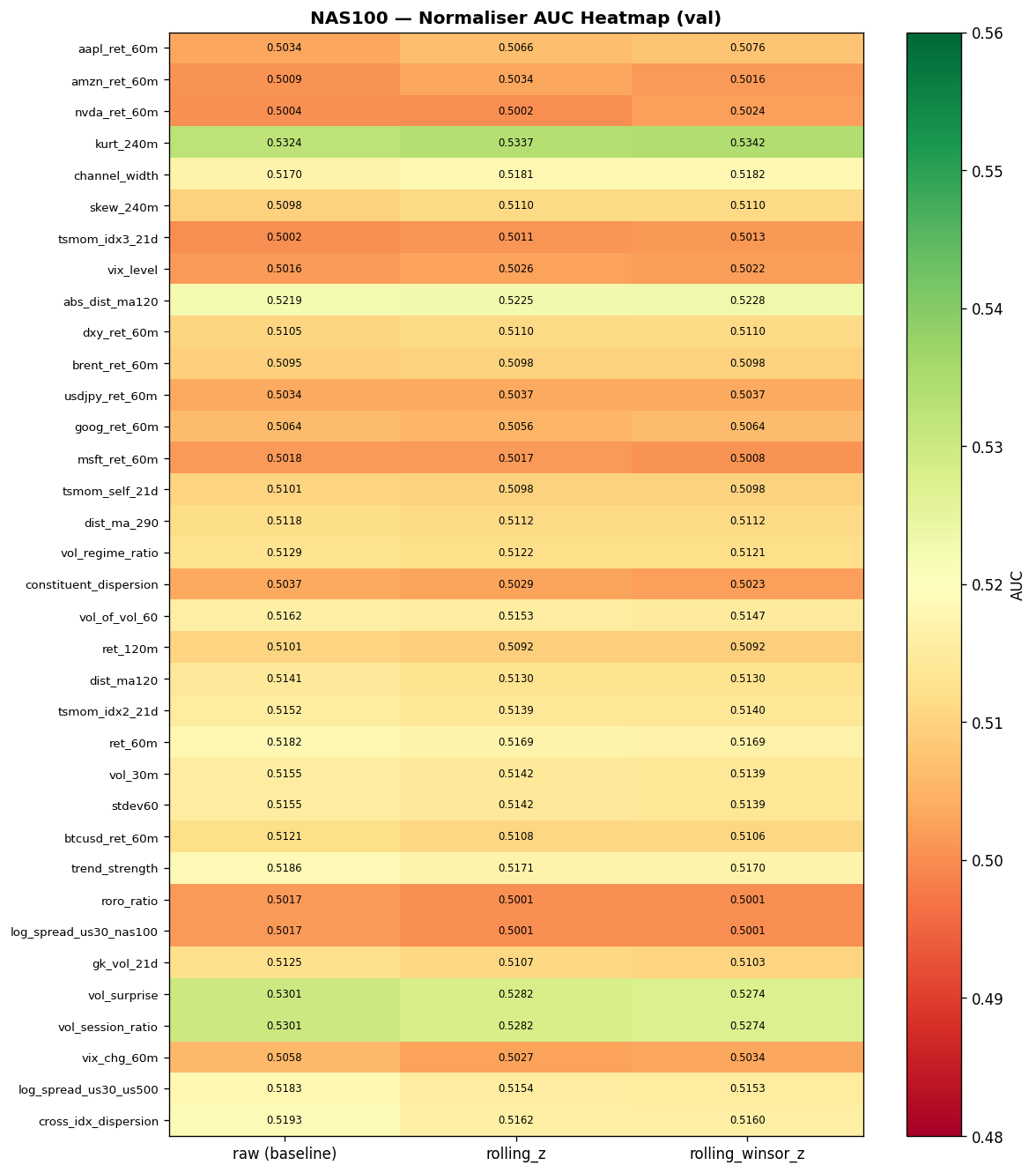

Normaliser AUC Heatmaps

The following heatmaps show directional AUC (one-vs-rest classifier on the double-barrier label) for each feature under each normalisation strategy. Green cells indicate AUC above baseline (0.5); darker shading indicates stronger signal.

Expand: US500 and NAS100 normaliser heatmaps

AUC Improvement from Rolling Z-Score

Bar charts showing the per-feature AUC change when switching from raw to rolling_z. Positive bars (green) indicate features that benefit from normalisation; negative bars (red) indicate features where the raw scale carries signal.

Expand: US500 and NAS100 AUC improvement charts

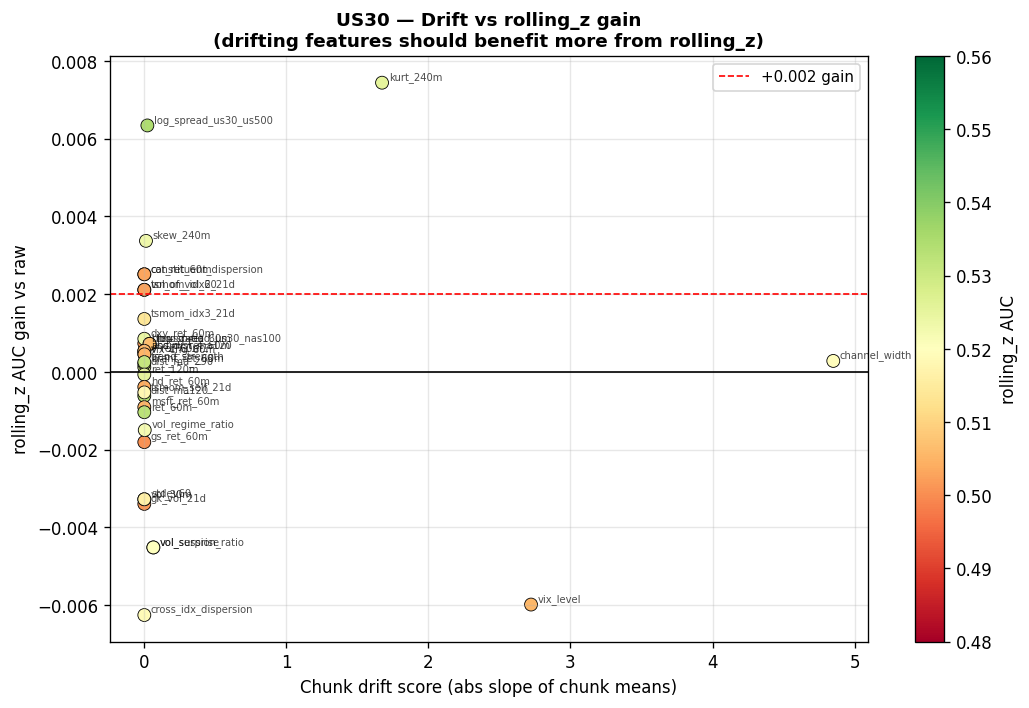

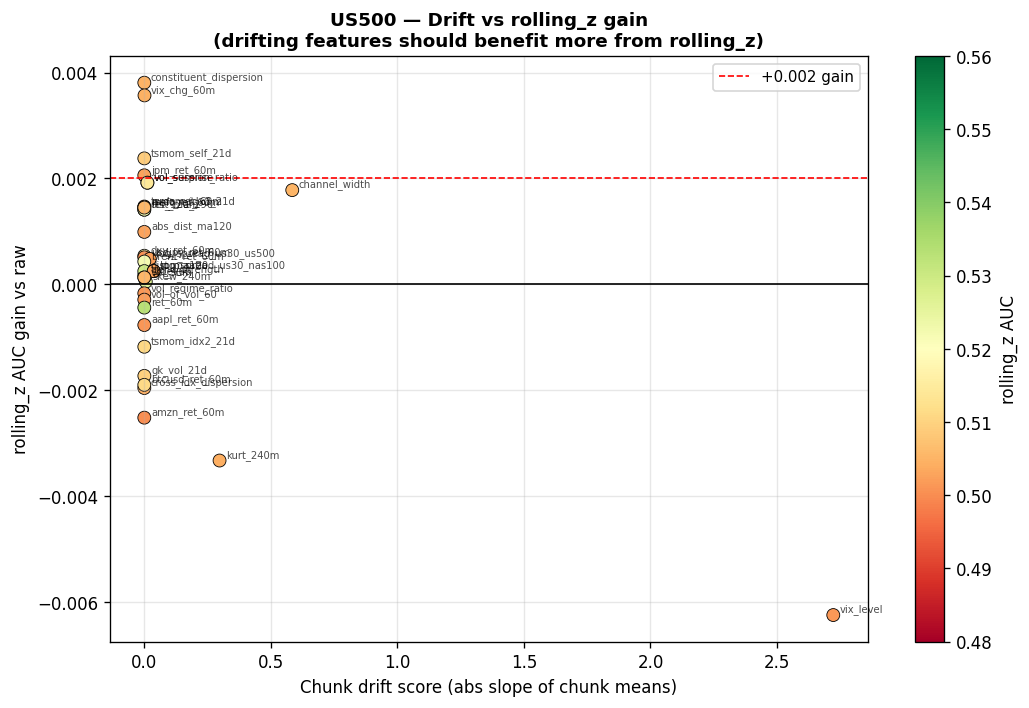

Drift Score vs. Normalisation AUC Gain